April 2, 2026

The Link Building Playbook That Still Works in 2026: Earn Authority Without Paying for It

Link building has a reputation problem it does not entirely deserve. When most Australian marketing teams hear the term, they picture one of two things: the paid link schemes that Google has been penalising for years and that still circulate in the shadier corners of the SEO industry, or the labour-intensive outreach programmes that produce a handful of marginal links per month at a cost that makes the return on investment look questionable at best. Both of these pictures miss the methods that actually work in 2026, which share a common characteristic: they earn links as a byproduct of producing something valuable rather than acquiring them as a standalone purchase or extraction exercise. The distinction matters commercially because earned links are structurally different from purchased links. They tend to come from more relevant and more authoritative sources. They are more stable because they are not contingent on a payment being maintained. They are more likely to produce lasting ranking improvements because they represent genuine signals of editorial endorsement. And they are not a liability that sits waiting for the next Google spam update to detonate. This article covers the link earning methods that Australian businesses and their marketing teams can execute in 2026 to build domain authority through genuine editorial signals rather than purchases or schemes.

Social Listening Implementation: Setting Up Monitoring Systems for Australian Brands

Most Australian brands have some version of a social media presence, but far fewer have a system for genuinely listening to what is being said about them across that presence and beyond it. Social listening is not the same as checking your notifications or scanning your mentions. It is a structured approach to monitoring conversations across platforms, forums, news sites, and review channels to understand how your brand is perceived, what your competitors are doing, and where opportunities and threats are emerging before they become urgent. For brands operating in Australia, the combination of a relatively small but highly connected digital population and a media landscape where a single viral moment can travel fast makes social listening not just useful but strategically important. This article walks through how to implement a social listening system that works for Australian brands, which tools are worth considering, what to monitor, and how to turn raw data into decisions that actually improve your marketing and customer experience.

Smart Shopping vs Performance Max: Migration Strategy for Retailers

Smart Shopping campaigns were a comfortable fixture in the Google Ads accounts of Australian retailers for years, and then Google retired them. Performance Max arrived as the replacement and brought with it a fundamentally different way of running product advertising, one that gave Google's machine learning far more control and advertisers far less visibility into where their budget was actually going. For retailers who migrated early or were pushed across by Google's automated migration, the experience was mixed. Some saw strong results almost immediately, while others watched their ROAS drop and struggled to understand why. The difference between those two outcomes almost always came down to how the migration was handled, what assets were provided, and how much conversion data the account had going in. This article covers what actually changed between Smart Shopping and Performance Max, the migration considerations that matter most for Australian retailers, and how to set up a Performance Max campaign that earns its budget rather than simply spending it.

YouTube TrueView for Action: Direct Response Video Advertising

Video advertising has spent years being treated as a brand awareness tool and not much else, but that perception is well behind where the platform actually sits today. YouTube TrueView for Action campaigns are built specifically to drive measurable responses from viewers, turning what was once a top-of-funnel channel into a genuine direct response engine. For Australian businesses that have avoided YouTube advertising because they could not see a clear line to conversions, this campaign type changes the equation. When set up correctly with strong creative, precise audience targeting, and a clear call to action, these campaigns can generate leads, sales, and new registrations at a cost that competes directly with search and social. The mechanics are different from what most performance marketers are used to, and the creative requirements are more demanding, but the payoff for getting it right is substantial. This article covers how TrueView for Action works, what it takes to make a campaign perform, and where Australian marketers most commonly go wrong.

App Campaigns for Action: Mobile App Marketing Beyond Installation

Most businesses that invest in a mobile app celebrate when the installs start rolling in, but installs alone do not pay the bills. The real value of a mobile app sits further down the funnel, where users are booking, purchasing, subscribing, and returning again and again. App Campaigns for Action are built specifically to reach that deeper behaviour, shifting the focus from acquisition metrics to the actions that actually matter to your bottom line. For Australian businesses operating in an increasingly mobile-first environment, understanding how to market beyond the install is no longer optional. It is where competitive advantage is being won and lost. Whether you are running an ecommerce app, a service booking platform, a loyalty programme or a content subscription, the principles of action-based app marketing apply directly to your goals. This article walks through how App Campaigns for Action work, why they outperform install-only campaigns for most business models, and what Australian marketers need to get right to make them perform.

Local Campaigns for Multi-Location: Managing Australian Business Chains

Running local marketing campaigns across a business chain in Australia is one of the most nuanced challenges a growing brand will face. What works brilliantly at the national level often falls flat at the suburb level, and vice versa. Australian consumers are savvy and they can tell the difference between a brand that genuinely shows up in their community and one that is simply broadcasting from a head office somewhere else. The businesses that get this right are the ones that understand local search behaviour, invest in location-specific assets, and build systems that let their teams execute locally without losing brand consistency. From Google Business Profiles and localised landing pages to paid campaigns built around individual postcodes, the tools available to operators running multiple locations are powerful but only effective when used with intention and structure. This article breaks down exactly how Australian business chains can manage local campaigns at scale, maintain brand integrity, and consistently outperform smaller competitors in every market they operate in.

Discovery Campaigns Deep Dive: Feed-Based Advertising Strategy

Australian businesses seeking advertising placements beyond traditional search results can use Google Discovery Campaigns, which deliver visually rich ads across the YouTube Home feed, the Gmail Promotions tab, and the Google Discover feed, reaching users while they browse and consume content rather than during active search sessions. Discovery Campaigns operate fundamentally differently from search advertising: automated creative optimisation tests multiple image and headline combinations, feed-based placements appear native to each platform, and audience targeting relies on interests and behaviours rather than the keyword intent that search campaigns use. However, Discovery's automated nature provides less control than search campaigns, performance measurement differs substantially from search metrics, and creative quality determines campaign success more directly than in any other Google Ads format because visual appeal drives engagement in discovery environments. This comprehensive guide reveals how Maven Marketing Co. helps Australian businesses implement Discovery Campaigns through strategic audience targeting, compelling creative development, systematic testing methodologies, and performance optimisation, so that feed-based advertising generates qualified traffic and conversions rather than vanity impressions from attractive but poorly targeted ads that users scroll past without engaging.

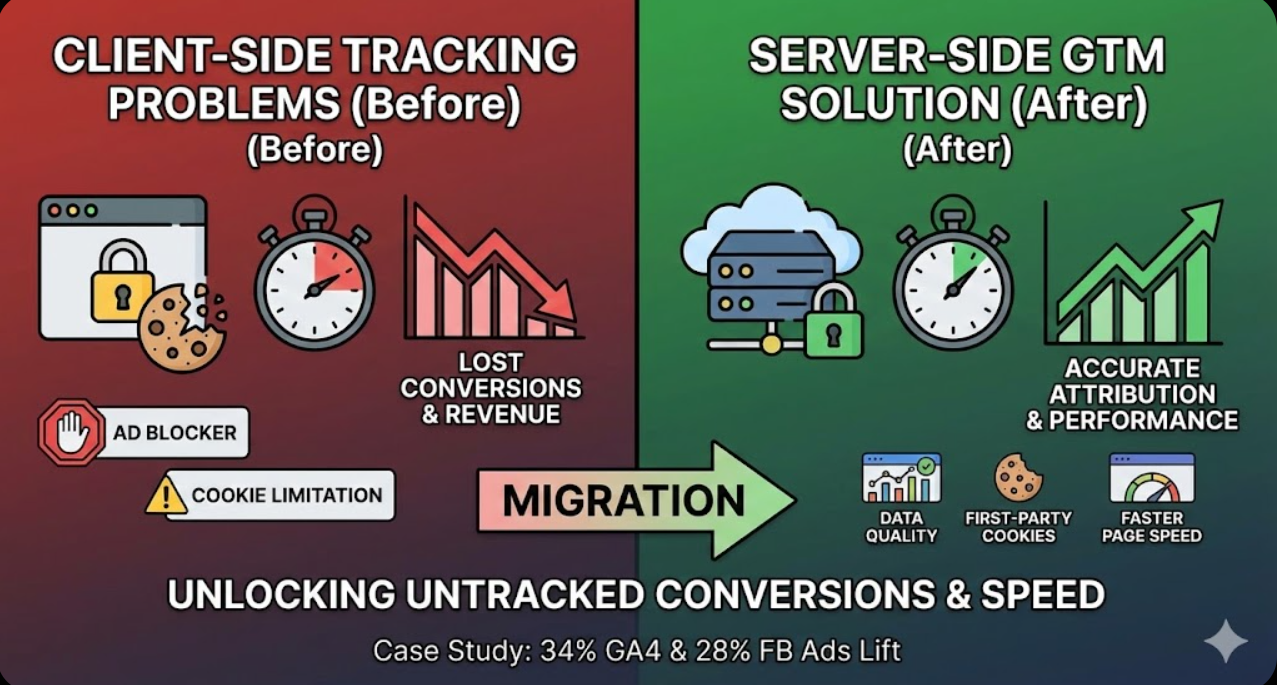

Google Tag Manager Server-Side: Enhanced Conversion Tracking Setup

Australian businesses relying exclusively on client-side Google Tag Manager face mounting challenges as browser-based tracking increasingly fails: ad blockers prevent tags from firing, cookie restrictions limit conversion attribution, privacy regulations require careful data handling, and excessive JavaScript execution degrades page performance. Server-side Google Tag Manager addresses these limitations by moving tag execution from browsers to servers controlled by the advertiser, which makes data collection more resilient to ad blockers, provides finer privacy controls over what data is transmitted, and improves website performance by reducing client-side JavaScript. Implementation requires substantially more technical expertise than client-side setup, introduces hosting costs and infrastructure management responsibilities, and needs careful configuration to avoid data loss during migration. The trade-off between improved tracking accuracy and implementation complexity creates a strategic decision: medium to large Australian businesses with substantial tracking needs tend to benefit, whilst smaller businesses with basic requirements may find that the complexity exceeds the advantages. This comprehensive guide reveals how Maven Marketing Co. helps Australian businesses evaluate whether the benefits of server-side GTM justify the implementation complexity, follow systematic setup procedures, plan tag migration, and carry out ongoing optimisation that preserves tracking accuracy whilst maintaining the performance and privacy advantages.

Offline Conversion Import: Connecting CRM Sales to Google Ads

Australian businesses that generate leads through Google Ads but close sales offline, through phone calls, in-person consultations, sales team follow-up, or extended purchase cycles, face an attribution problem: Google Ads optimisation algorithms cannot see which clicks ultimately generated revenue and which merely produced leads that never closed. Because of this gap between online lead generation and offline revenue, campaigns that generate high-quality leads and substantial revenue can appear inefficient on online conversion data alone, whilst campaigns that produce low-quality leads that rarely close can appear successful, since the algorithms optimise toward lead volume rather than the downstream sales value that offline conversion import reveals. Offline conversion tracking connects CRM sales data to the original Google Ads clicks, enabling accurate attribution, automated bidding optimisation toward actual revenue, and a level of ROI analysis that businesses relying solely on lead generation metrics cannot achieve. This comprehensive guide reveals how Maven Marketing Co. helps Australian businesses implement offline conversion import through systematic CRM data preparation, Google Ads conversion action configuration, ongoing upload processes, and strategic bidding adjustments, so that advertising optimisation targets actual business outcomes rather than proxy metrics that correlate only imperfectly with revenue.
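As a rough illustration of the CRM data preparation step, the Python sketch below turns closed deals into rows for an offline conversion upload file. The deal records, the "Closed Sale" conversion name, and the field names `gclid`, `closed_at`, and `value` are hypothetical. The column headers follow the click-ID-based upload template as commonly documented, but treat them as an assumption and confirm them against the current Google Ads template before uploading anything.

```python
import csv
import io
from datetime import datetime, timedelta, timezone

# Fixed +10:00 offset used for illustration only; real data should carry
# the correct offset for each sale (daylight saving is ignored here).
AEST = timezone(timedelta(hours=10))

# Hypothetical CRM export. Only deals that began with an ad click have a GCLID.
crm_deals = [
    {"gclid": "EXAMPLE_GCLID_1", "closed_at": datetime(2026, 3, 14, 10, 30, tzinfo=AEST), "value": 4200.0},
    {"gclid": "", "closed_at": datetime(2026, 3, 15, 9, 0, tzinfo=AEST), "value": 900.0},
]

# Assumed column headers; verify against the current upload template.
HEADER = ["Google Click ID", "Conversion Name", "Conversion Time",
          "Conversion Value", "Conversion Currency"]


def build_upload_rows(deals, conversion_name="Closed Sale", currency="AUD"):
    """Return one upload row per deal that can be tied back to an ad click."""
    rows = []
    for deal in deals:
        if not deal["gclid"]:
            continue  # no click ID, so the sale cannot be attributed to a click
        rows.append([
            deal["gclid"],
            conversion_name,
            deal["closed_at"].strftime("%Y-%m-%d %H:%M:%S%z"),
            f"{deal['value']:.2f}",
            currency,
        ])
    return rows


def to_csv(rows):
    """Serialise the header and rows to CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(HEADER)
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    print(to_csv(build_upload_rows(crm_deals)))
```

The key design point is that the click ID has to be captured at lead time and stored against the CRM record; deals without one are skipped rather than guessed at.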

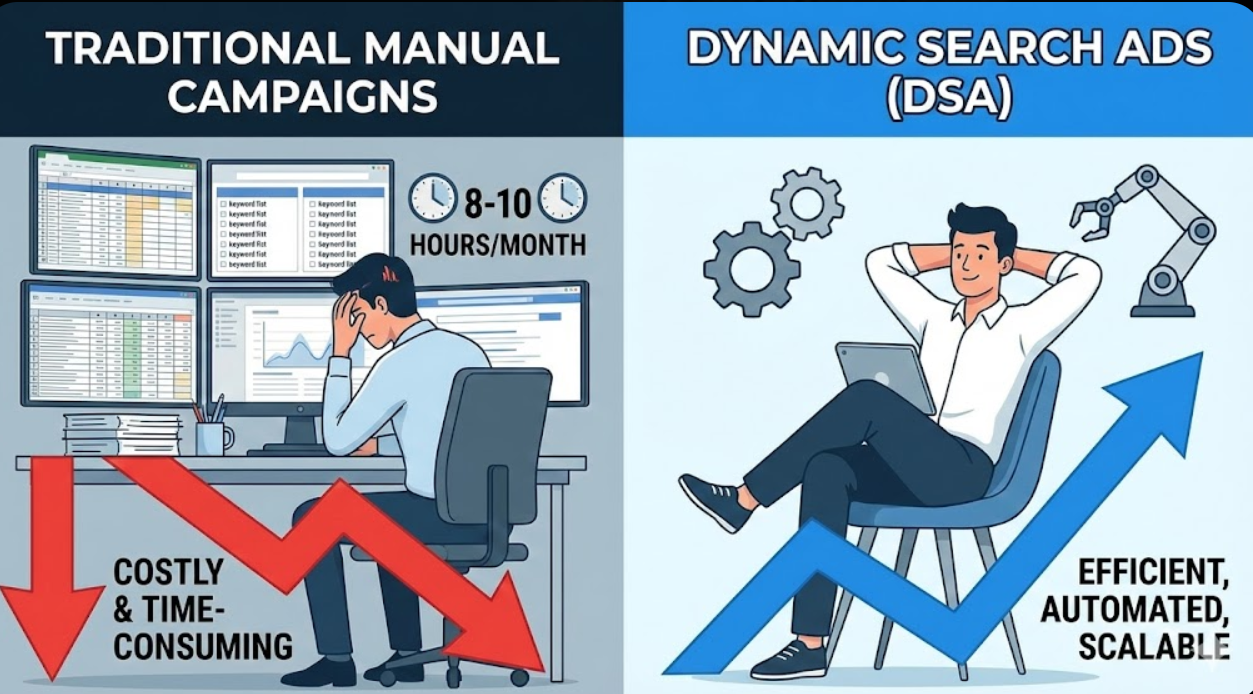

Dynamic Search Ads Evolution: AI-Generated Campaigns for Australian Services

Australian service businesses that invest substantial time researching keywords, writing ad copy variations, and managing keyword bidding strategies have an automated alternative: Dynamic Search Ads use Google's artificial intelligence to generate ad headlines, match relevant searches, and choose landing pages based on website content rather than requiring manual keyword lists and ad creation. DSAs can discover untapped search queries that keyword research misses, adapt to website content changes without manual campaign updates, and reduce management overhead, freeing resources for strategic initiatives. However, automation can sacrifice the granular control, brand messaging consistency, and budget efficiency that manual campaigns provide through precise targeting. The gap between traditional keyword-based campaign management, which requires continuous optimisation, and what Dynamic Search Ads promise creates strategic decisions where businesses must balance automation advantages against control trade-offs. This comprehensive guide reveals how Maven Marketing Co. helps Australian service businesses evaluate Dynamic Search Ads through systematic analysis of use cases, implementation strategies that combine DSAs with traditional campaigns for optimal coverage, negative keyword strategies that prevent wasteful traffic, and performance monitoring that checks whether AI-generated campaigns deliver an acceptable return on ad spend.

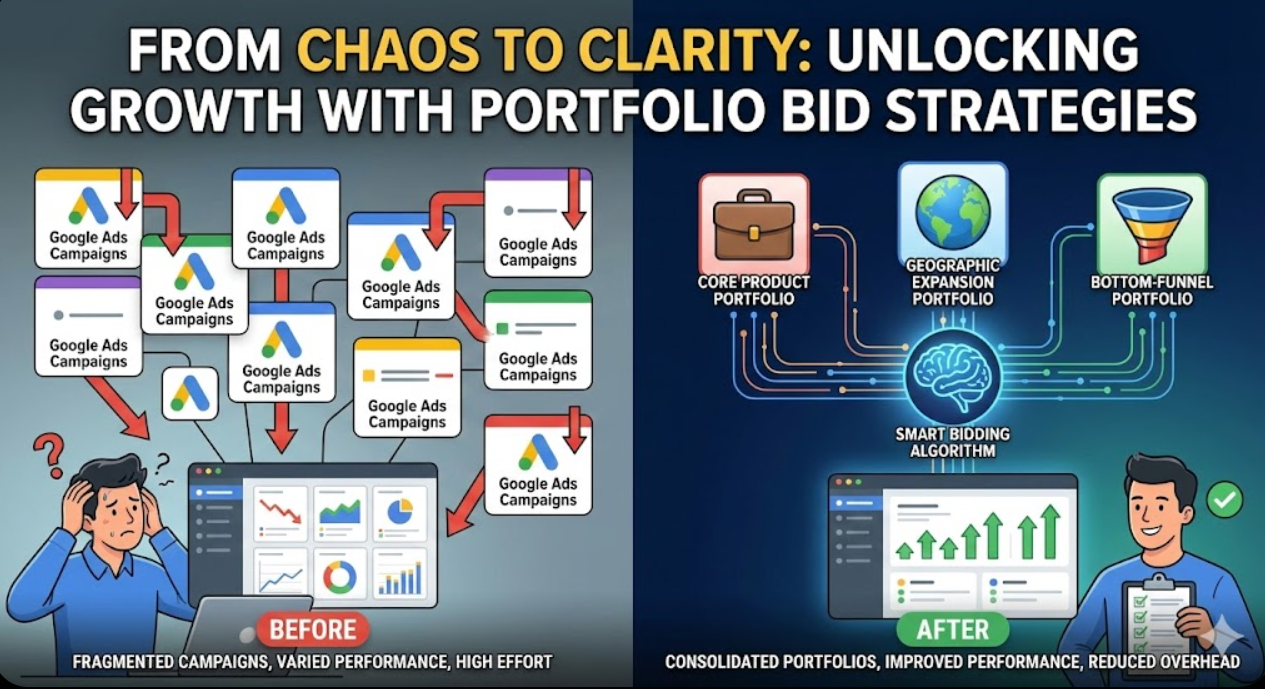

Google Ads Portfolio Bid Strategies: Multi-Campaign Optimisation

Portfolio bid strategies apply a single automated bidding strategy across multiple campaigns, allowing Google's machine learning algorithms to optimise bids holistically rather than independently per campaign. For advertisers managing multiple campaigns with similar objectives, this approach can deliver better performance by sharing conversion data across campaigns, enabling more sophisticated optimisation, balancing performance across the portfolio, and simplifying management through centralised strategy control. Understanding when portfolio strategies outperform campaign-level bidding, how to group campaigns strategically, how to set appropriate performance targets, and how to monitor portfolio health helps automated bidding deliver maximum efficiency across the entire account structure.
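To make the idea of pooled performance concrete, here is a small Python sketch comparing per-campaign cost per acquisition with the blended figure a portfolio-level target would be judged against. The campaign names and figures are invented for illustration, and the arithmetic is only a way to reason about grouping; it does not reproduce how Google's bidding algorithms work internally.

```python
# Hypothetical campaign results for one reporting period.
campaigns = [
    {"name": "Brand", "cost": 1000.0, "conversions": 50},
    {"name": "Generic", "cost": 3000.0, "conversions": 40},
    {"name": "New region", "cost": 500.0, "conversions": 4},
]


def cpa(cost, conversions):
    """Cost per acquisition; infinite when there are no conversions."""
    return cost / conversions if conversions else float("inf")


def portfolio_cpa(items):
    """Blended CPA: total cost divided by total conversions across campaigns."""
    total_cost = sum(c["cost"] for c in items)
    total_conversions = sum(c["conversions"] for c in items)
    return cpa(total_cost, total_conversions)


if __name__ == "__main__":
    for c in campaigns:
        print(f"{c['name']}: CPA {cpa(c['cost'], c['conversions']):.2f}")
    print(f"Portfolio: CPA {portfolio_cpa(campaigns):.2f}")
```

In this invented data the "New region" campaign has only four conversions, too few to judge on its own, which is the kind of campaign that stands to gain from sharing conversion data with the rest of the portfolio. It also shows why grouping matters: a blended target can hide a campaign whose individual CPA is far above the rest.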

In-Market Audiences 2026: Behavioural Targeting in Privacy-First Era

Third-party cookie deprecation and privacy regulations have shifted audience targeting from identifier-based tracking to privacy-safe behavioural signals and machine learning. In-market audiences in 2026 draw on aggregated search patterns, anonymised browsing behaviour, first-party data, and contextual signals to identify purchase-ready prospects without individual tracking. Understanding how modern in-market audiences function, implementing privacy-compliant targeting strategies, combining multiple signal sources, optimising for signal quality, and measuring effectiveness in privacy-first environments allows audience targeting that maintains performance whilst respecting user privacy and regulatory requirements.

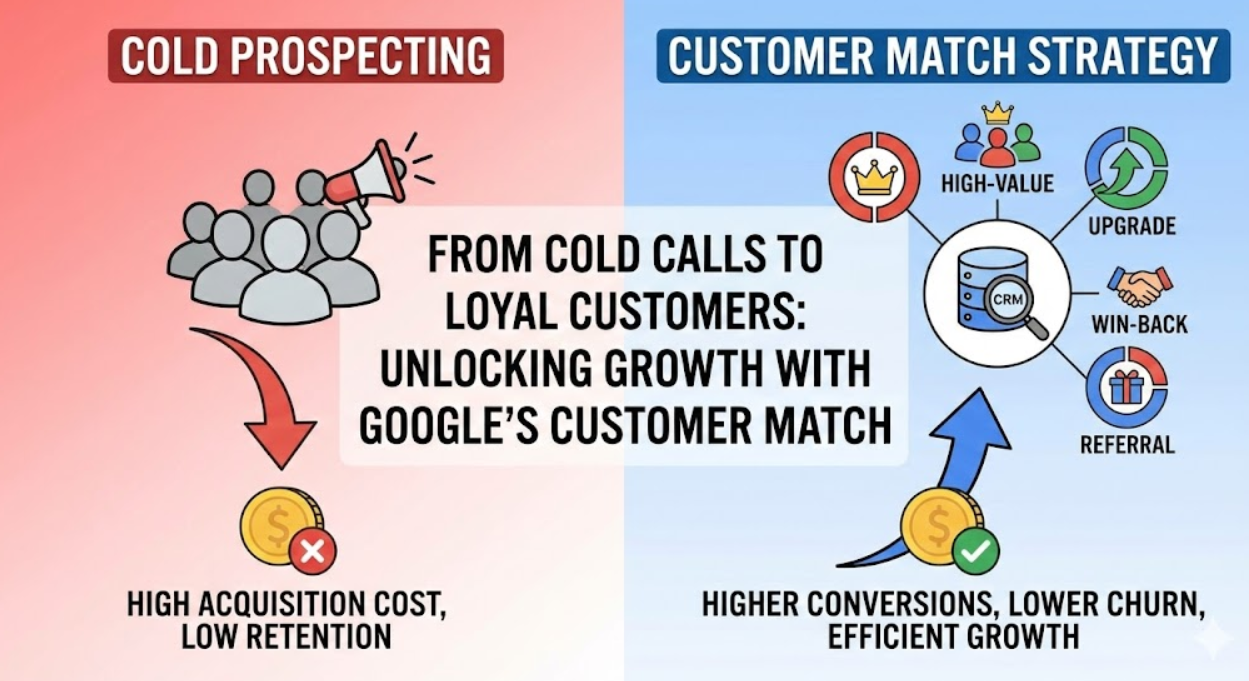

Customer Match Campaigns: First-Party Data Activation in Google Ads

Customer Match enables uploading first-party customer data including email addresses, phone numbers, and physical addresses into Google Ads for precision audience targeting across Search, Shopping, Display, YouTube, and Discovery campaigns. This powerful capability transforms existing customer relationships and CRM data into targetable audiences, enabling personalised messaging for existing customers, exclusion strategies preventing wasted spend, lookalike audience expansion reaching similar prospects, and lifecycle-based targeting matching offers to customer journey stages. Understanding data requirements, implementing strategic segmentation, optimising bidding approaches, maintaining privacy compliance, and measuring incremental impact unlocks substantial value from owned customer data.
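On the data requirements side, Customer Match identifiers are matched in hashed form, with SHA-256 applied to normalised values. The Python sketch below shows one way to prepare email addresses before upload. It assumes the only normalisation needed is trimming whitespace and lowercasing; Google's formatting guidelines include further rules (for example for phone numbers and names), so check the current guidance rather than relying on this. The function names are hypothetical.

```python
import hashlib


def normalise_email(email):
    """Trim surrounding whitespace and lowercase the address."""
    return email.strip().lower()


def hash_email(email):
    """Return the SHA-256 hex digest of the normalised email address."""
    return hashlib.sha256(normalise_email(email).encode("utf-8")).hexdigest()


def prepare_customer_list(emails):
    """Hash each non-empty address and drop duplicates, preserving order."""
    seen = set()
    hashed = []
    for email in emails:
        if not email or not email.strip():
            continue  # skip blank CRM fields
        digest = hash_email(email)
        if digest not in seen:
            seen.add(digest)
            hashed.append(digest)
    return hashed


if __name__ == "__main__":
    sample = ["  Jane.Doe@Example.com ", "jane.doe@example.com", ""]
    print(prepare_customer_list(sample))
```

Normalising before hashing matters because two spellings of the same address would otherwise produce different digests and fail to match.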