Key Takeaways

- Sitemap segmentation improves management and monitoring: Dividing large websites into multiple targeted sitemaps by content type, update frequency, or business priority enables precise tracking of indexation rates and targeted resubmission when content changes

- Size and URL limits require strategic planning: Each sitemap file can hold at most 50,000 URLs and must not exceed 50MB uncompressed, so large sites need sitemap index files to coordinate multiple sitemaps

- Priority and change frequency signals provide limited guidance: Neither is a ranking factor, and Google's documentation states that it ignores both, so treat priority values (0.0 to 1.0) and change frequency declarations as optional hints that other search engines may use when judging relative importance and crawl scheduling

- Dynamic generation ensures accuracy: Automated sitemap generation from databases or CMS platforms maintains current URL lists whilst manual maintenance becomes impractical at scale

- Last modification dates trigger recrawling: Accurate lastmod timestamps inform search engines which pages changed since last crawl, optimising crawl efficiency and ensuring timely reindexing of updated content

- Image and video sitemaps enhance media discovery: Specialised sitemap formats provide additional metadata about images and videos, improving their discovery and potential appearance in image and video search results

- News sitemaps accelerate timely content indexing: News-specific sitemap protocols enable rapid discovery of time-sensitive content, particularly valuable for publishers and media sites

- Regular monitoring identifies indexation problems: Tracking submitted versus indexed URLs through Google Search Console reveals technical issues, content quality problems, or crawl budget constraints preventing indexation

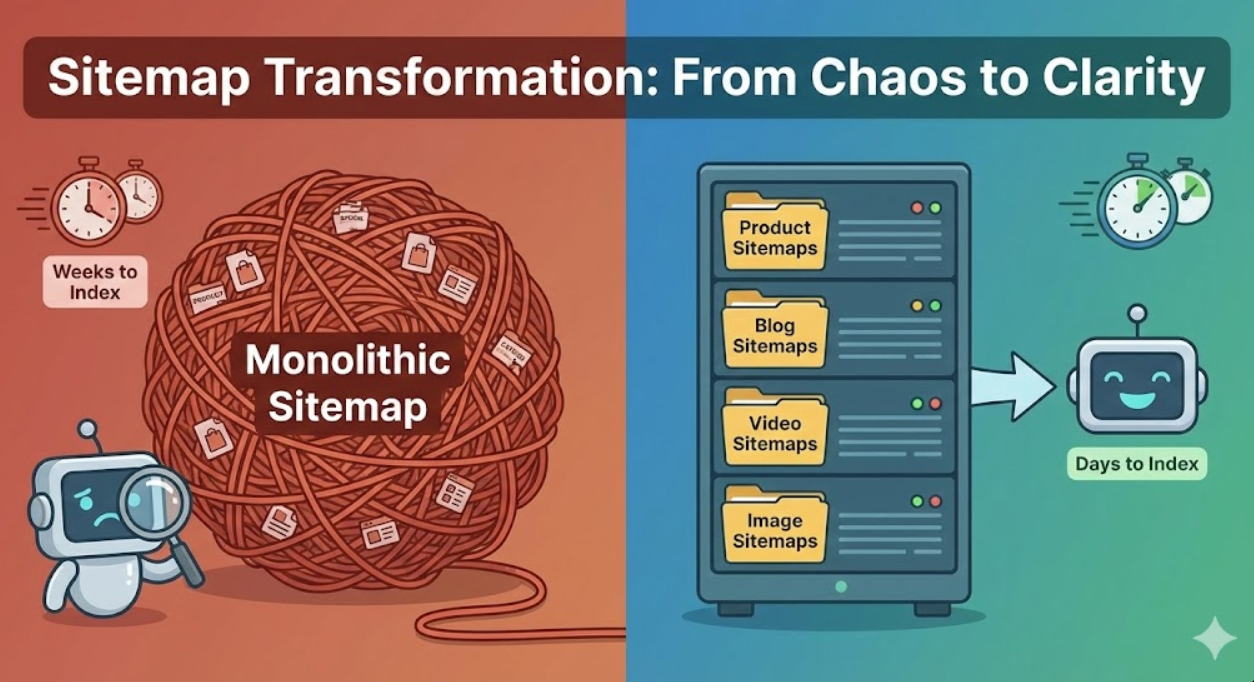

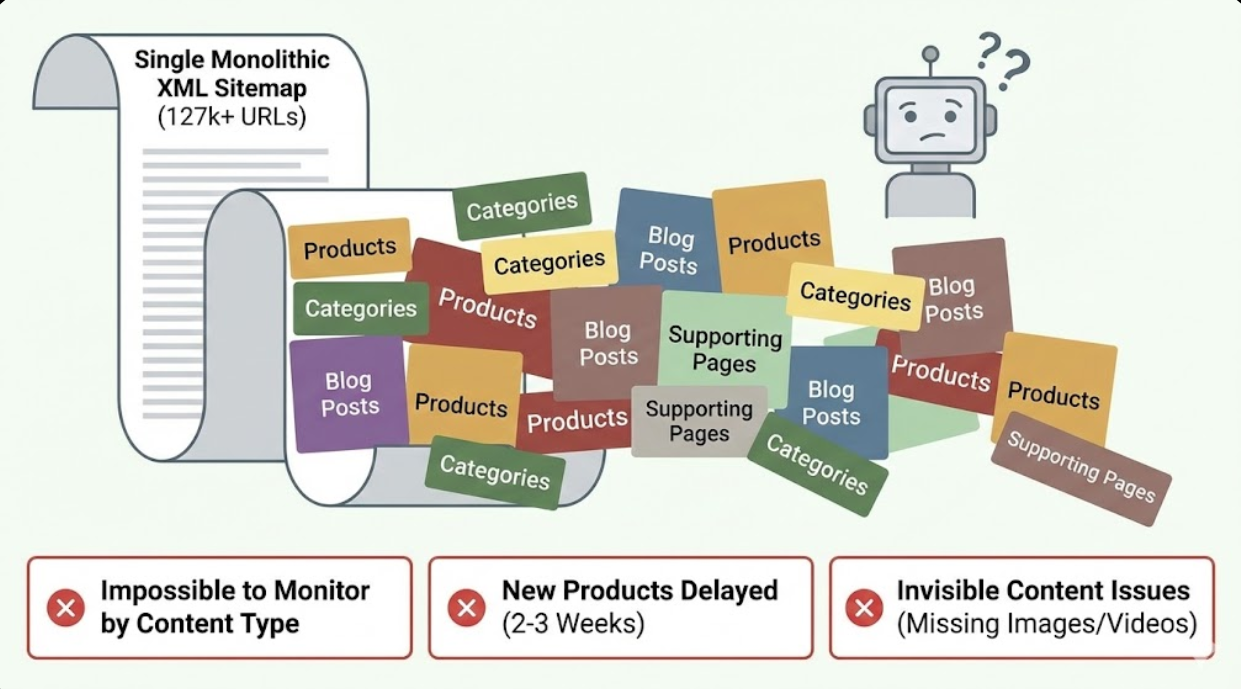

A national e-commerce retailer operating across Australia managed 127,000 product pages, 8,400 category pages, 2,300 blog posts, and various supporting pages through a single massive XML sitemap. Monitoring indexation proved impossible because they couldn't distinguish which content types Google struggled to index. New products took weeks to appear in search results despite being added to the sitemap. The monolithic sitemap also made problems such as unindexed images or video content effectively invisible.

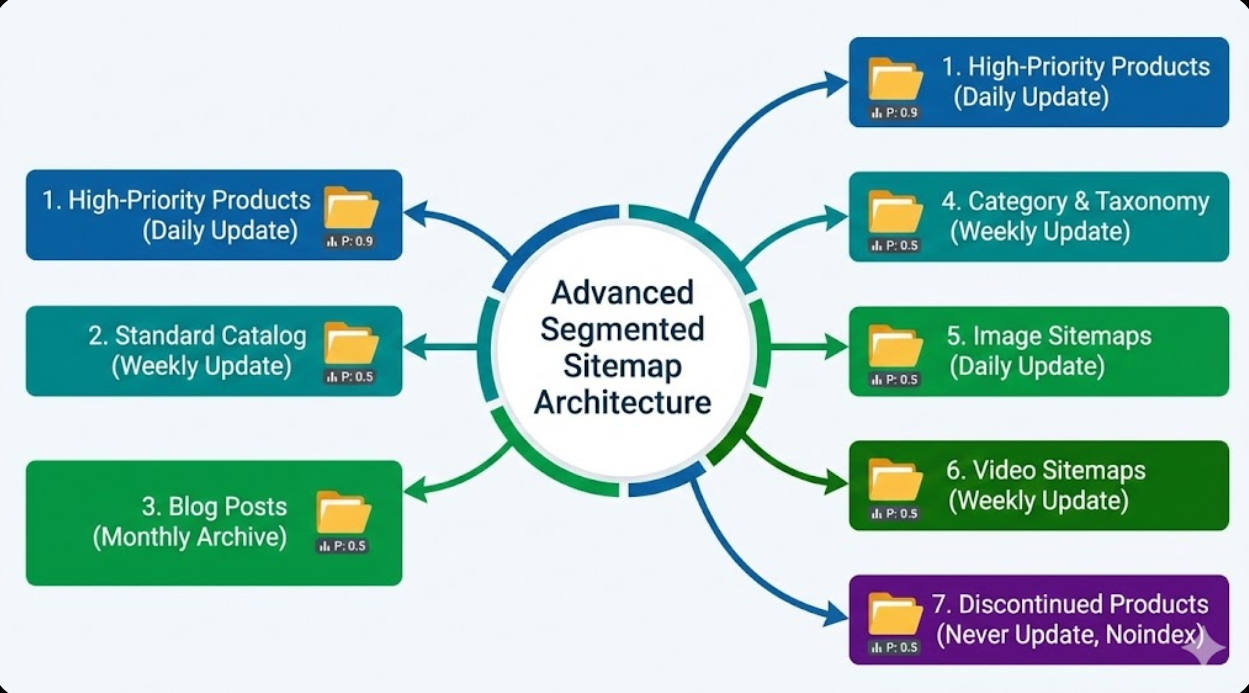

Implementing advanced sitemap architecture transformed their technical SEO. They segmented into focused sitemaps: high-priority products by category, standard catalog products, blog content by publication date, category and taxonomy pages, image sitemaps for product photography, video sitemaps for product demonstrations, and discontinued products marked for removal. Each sitemap received tailored priority values and change frequency settings reflecting actual update patterns.

Results appeared within weeks. Search Console revealed that whilst product sitemaps achieved 94% indexation, discontinued product pages showed only 12% indexation, indicating successful crawl budget preservation. New product indexation time dropped from 2-3 weeks to 3-5 days. Blog posts appeared in news results within hours of publication through news sitemap implementation. Most importantly, they could identify and address indexation problems by content type rather than guessing which sections faced issues.

The transformation from single unwieldy sitemap to sophisticated segmented architecture enabled precise monitoring, faster indexation, and data-driven optimisation impossible with basic implementations.

Understanding XML Sitemap Fundamentals

XML sitemaps serve as roadmaps for search engines, listing important URLs whilst providing metadata about each page's priority, update frequency, and relationships to other content.

The XML sitemap protocol follows specific formatting requirements defined by sitemaps.org, an initiative jointly developed by major search engines including Google, Microsoft, and others. According to Google's official sitemap documentation, proper XML structure includes required elements like URL location tags, optional elements like lastmod timestamps and priority values, and proper XML declarations and namespace definitions. Malformed sitemaps get rejected entirely, making technical accuracy essential for functionality.

Individual sitemap limitations require strategic planning for large sites. Each sitemap file can hold at most 50,000 URLs and must not exceed 50MB when uncompressed. These constraints necessitate multiple sitemaps for large Australian websites, coordinated through sitemap index files that reference individual sitemaps. An index file can itself list up to 50,000 sitemaps, so a single index can in principle cover up to 2.5 billion URLs (50,000 sitemaps of 50,000 URLs each).
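The 50,000-URL limit can be applied mechanically when generating files. The following is a minimal sketch, assuming the URL list is already in memory; the function names are illustrative and not part of any library.

```python
from typing import Iterator, List

MAX_URLS_PER_SITEMAP = 50_000  # protocol limit per sitemap file


def chunk_urls(urls: List[str], size: int = MAX_URLS_PER_SITEMAP) -> Iterator[List[str]]:
    """Yield consecutive slices of `urls`, each holding at most `size` entries."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def sitemaps_needed(url_count: int, size: int = MAX_URLS_PER_SITEMAP) -> int:
    """Ceiling division: how many sitemap files a given URL count requires."""
    return -(-url_count // size)
```

For example, `sitemaps_needed(127_000)` returns 3, so a catalogue of 127,000 product pages needs at least three sitemap files before any content-based segmentation is applied.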

Sitemap discovery mechanisms let search engines find sitemaps in two main ways: a Sitemap directive in the robots.txt file and direct submission through Google Search Console. HTML link tags in page head sections are not part of the sitemap protocol and should not be relied on for discovery. Most large sites combine robots.txt references with Search Console submission, which ensures reliable discovery whilst enabling monitoring through Search Console's sitemap tools.
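To check what a robots.txt file actually declares, Python's standard library can parse the Sitemap lines. This is a small sketch; the robots.txt content and example.com URLs are made up for illustration, and `site_maps()` requires Python 3.8 or later.

```python
from typing import List
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt body; the URLs are placeholders.
robots_txt = """\
User-agent: *
Disallow: /account/

Sitemap: https://example.com/sitemap-index.xml
Sitemap: https://example.com/sitemap-news.xml
"""


def declared_sitemaps(robots_body: str) -> List[str]:
    """Return the sitemap URLs declared in a robots.txt body, in file order."""
    parser = RobotFileParser()
    parser.parse(robots_body.splitlines())
    # site_maps() returns None when no Sitemap lines are present.
    return parser.site_maps() or []
```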

The relationship between sitemaps and crawling recognises that sitemaps don't guarantee crawling or indexation but rather inform search engines about URL existence and relative importance. Search engines still apply crawl budget constraints, quality thresholds, and duplicate content filtering regardless of sitemap inclusion. Sitemaps improve discovery efficiency but don't override fundamental indexation criteria or crawl limitations.

Common misconceptions about sitemaps include believing that sitemap inclusion guarantees indexation, thinking priority values directly influence rankings, expecting change frequency to dictate actual crawl schedules, or assuming sitemaps can force indexation of low-quality content. Understanding sitemaps as discovery tools rather than ranking signals or indexation guarantees sets appropriate expectations for their role in technical SEO.

Australian website considerations include proper URL formatting with full absolute URLs including protocol and domain, accurate timezone handling for lastmod timestamps, and compliance with international standards whilst serving primarily Australian audiences. Geographic targeting happens through other signals like hreflang tags, server location, and content, not through sitemap architecture.

Strategic Sitemap Segmentation Approaches

Segmenting large websites into multiple targeted sitemaps enables precise monitoring, optimisation, and management impossible with monolithic single-file approaches.

Content type segmentation divides URLs by fundamental content categories creating focused sitemaps for products, categories, blog posts, location pages, informational content, and user-generated content. This organisation enables tracking indexation rates by content type, identifying which sections face indexation challenges, adjusting crawl priorities by content importance, and implementing content-specific optimisation strategies. E-commerce sites might maintain separate product sitemaps for active inventory, categories and filters, blog content, and customer reviews.

Update frequency segmentation groups content by how often it changes, creating sitemaps for frequently updated content like news or stock prices, regularly updated content like product pages or category listings, occasionally updated content like informational guides, and static content like company information or legal pages. This segmentation enables efficient crawl budget usage by signalling which content warrants frequent recrawling versus occasional verification.

Priority-based segmentation organises URLs by business importance rather than update frequency. High-priority sitemaps contain revenue-driving pages like bestselling products, high-margin items, and primary service pages. Medium-priority sitemaps include standard catalog content and supporting pages. Low-priority sitemaps cover supplementary content and legacy pages. This organisation enables search engines to understand relative importance when allocating crawl resources.

Publication date segmentation particularly benefits content-heavy sites by organising content into monthly or quarterly sitemaps. Blog posts from January 2024 live in one sitemap whilst February 2024 content occupies another. This approach enables archival sitemap stability whilst maintaining focused sitemaps for recent content. Historical sitemaps rarely need updates whilst current sitemaps receive frequent modifications reflecting ongoing publishing.
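Monthly grouping is straightforward to automate. The sketch below assumes each post is available as a (URL, publication date) pair; the `sitemap-blog-YYYY-MM.xml` naming scheme is a hypothetical convention, not a requirement of the protocol.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List, Tuple


def monthly_sitemap_name(published: date) -> str:
    """Map a publication date to a per-month sitemap filename (assumed naming)."""
    return f"sitemap-blog-{published:%Y-%m}.xml"


def group_by_month(posts: List[Tuple[str, date]]) -> Dict[str, List[str]]:
    """Group post URLs under the sitemap file for their publication month."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for url, published in posts:
        groups[monthly_sitemap_name(published)].append(url)
    return dict(groups)
```

With this layout, only the file for the current month changes as new posts are published; older files stay byte-for-byte stable.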

Geographic or language segmentation organises multilingual or multi-region sites by language or region. English content occupies one sitemap set, French content another. This organisation simplifies management for international sites whilst enabling language-specific monitoring and optimisation. Hreflang relationships should be maintained separately from sitemap segmentation through proper on-page implementation.

Business unit or brand segmentation suits large organisations managing multiple brands or business divisions under unified technical infrastructure. Each brand or division maintains dedicated sitemaps enabling independent monitoring and optimisation whilst sharing underlying infrastructure. This approach particularly benefits Australian retail groups operating multiple store brands or publishers managing multiple publication properties.

Hybrid segmentation combines multiple approaches creating sophisticated architectures matching complex site requirements. A large retailer might segment by content type (products, blog, categories) then further subdivide product sitemaps by update frequency (active, seasonal, archive) and priority (featured, standard, clearance). This multi-dimensional organisation provides granular control whilst maintaining manageable structure.
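Content type segmentation, the first approach described in this section, can be sketched as a simple path-prefix lookup. The prefixes and segment names below are hypothetical and would need to match the site's real URL structure.

```python
from collections import defaultdict
from typing import Dict, List
from urllib.parse import urlparse

# Hypothetical path prefixes; adjust to the site's actual URL patterns.
SEGMENT_RULES = [
    ("/products/", "products"),
    ("/category/", "categories"),
    ("/blog/", "blog"),
]


def segment_for(url: str) -> str:
    """Return the sitemap segment for a URL; unmatched URLs fall into 'pages'."""
    path = urlparse(url).path
    for prefix, segment in SEGMENT_RULES:
        if path.startswith(prefix):
            return segment
    return "pages"


def group_by_segment(urls: List[str]) -> Dict[str, List[str]]:
    """Bucket URLs by segment so each bucket can become its own sitemap."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for url in urls:
        groups[segment_for(url)].append(url)
    return dict(groups)
```

A hybrid scheme could extend `segment_for` to return a compound key, such as content type plus update frequency, without changing the grouping step.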

Technical Implementation Best Practices

Proper technical implementation ensures sitemaps meet protocol requirements whilst optimising for search engine processing and indexation efficiency.

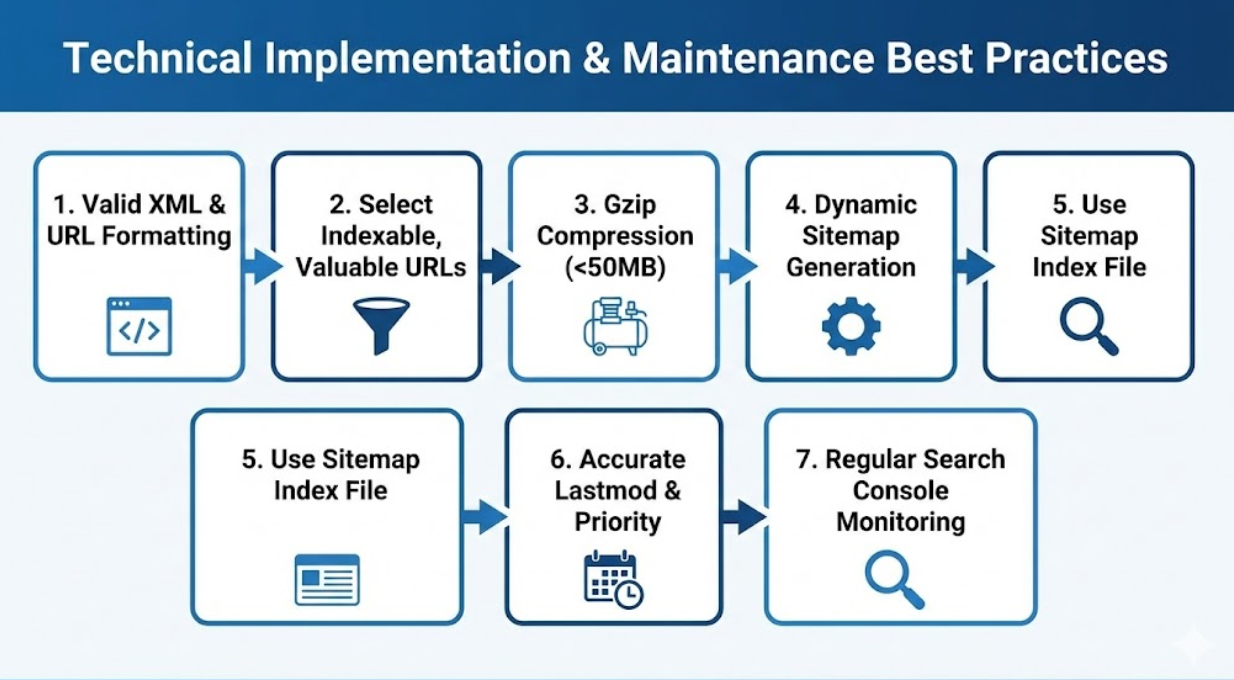

XML formatting requirements demand strict adherence to specifications including proper XML declaration, namespace definition, UTF-8 encoding, and valid URL encoding. According to best practices from Moz's comprehensive sitemap guide, common formatting errors include invalid characters in URLs, missing namespace declarations, incorrect date formats, and malformed XML structure. XML validators catch formatting errors before submission, and the Sitemaps report in Google Search Console flags parsing errors once a sitemap has been submitted.
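Generating the XML with a library rather than string concatenation avoids most of these formatting errors, because escaping and the namespace declaration are handled automatically. The following is a minimal sketch using Python's standard library (Python 3.8 or later for `xml_declaration`); `build_urlset` is an illustrative name.

```python
import xml.etree.ElementTree as ET
from typing import Iterable, Optional, Tuple

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_urlset(entries: Iterable[Tuple[str, Optional[str]]]) -> bytes:
    """Build a sitemap from (absolute URL, lastmod or None) pairs.

    lastmod is expected in W3C date format, e.g. "2024-01-15".
    """
    # Serialise the sitemap namespace as the default xmlns attribute.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # ElementTree escapes characters such as & when serialising.
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        if lastmod:
            ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```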

URL selection criteria determine which pages warrant sitemap inclusion versus exclusion. Include indexable, valuable URLs like product pages, category pages, blog posts, and important informational content. Exclude duplicate content, parameterized URLs creating filtering variations, paginated pages beyond page 1 unless implementing pagination correctly, and low-quality pages like search result pages or user account sections. Conservative URL selection focusing on genuinely valuable content prevents sitemap bloat whilst ensuring quality signals.
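These inclusion rules can be expressed as a filter applied before a URL is written to any sitemap. The sketch below is deliberately conservative; the excluded path prefixes and the `noindex` flag are assumptions about how a site might record this information.

```python
from urllib.parse import urlparse

# Hypothetical low-value sections; adjust to the site's own paths.
EXCLUDED_PREFIXES = ("/search/", "/account/", "/cart/")


def is_sitemap_candidate(url: str, noindex: bool = False) -> bool:
    """Return True if a URL looks suitable for sitemap inclusion."""
    parts = urlparse(url)
    # Sitemaps require absolute URLs with protocol and domain.
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return False
    # Skip noindexed pages and parameterised (filter or sort) variations.
    if noindex or parts.query:
        return False
    return not parts.path.startswith(EXCLUDED_PREFIXES)
```

Rejecting every URL with a query string is a simplification; a site whose canonical URLs rely on parameters would need a more selective rule.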

Compression using gzip reduces transfer size and speeds up downloads, but it does not raise the limits: the 50MB cap applies to the uncompressed file, and the 50,000-URL cap applies regardless of compression. Most web servers support automatic gzip compression for XML files. Compressed sitemaps conventionally use the .xml.gz extension so that search engines recognise and decompress them automatically. Compression typically reduces sitemap sizes by 80-90%, which saves bandwidth on every fetch.
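A sketch of compressing a sitemap while enforcing the size limit on the uncompressed bytes is shown below. The `limit` parameter exists only to make the check easy to exercise; the function name is illustrative.

```python
import gzip

MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024  # 50MB, measured before compression


def gzip_sitemap(xml_bytes: bytes, limit: int = MAX_UNCOMPRESSED_BYTES) -> bytes:
    """Gzip a sitemap, refusing input that is too large when uncompressed."""
    if len(xml_bytes) > limit:
        raise ValueError("sitemap exceeds the uncompressed size limit; split it first")
    return gzip.compress(xml_bytes)
```

The returned bytes would typically be written to a file ending in `.xml.gz`.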

Dynamic sitemap generation automates URL list maintenance through database queries, CMS plugins or modules, custom scripts accessing content APIs, or dedicated sitemap generation services. Manual sitemap maintenance becomes impractical for large sites or frequently updated content. Dynamic generation ensures accuracy whilst reducing maintenance burden. Most modern CMS platforms provide sitemap generation functionality either natively or through plugins.

Caching strategies balance freshness with performance by caching generated sitemaps for periods matching update frequency, invalidating cache when content changes, and pre-generating sitemaps during off-peak periods for large sites. Heavy traffic sites serving sitemaps from cache rather than generating on-demand prevents performance degradation from repeated sitemap requests. Cache duration should align with actual content update patterns.

Sitemap index file implementation coordinates multiple sitemaps through a master index that references individual sitemaps using absolute URLs. The index file structure mirrors the individual sitemap XML format whilst listing sitemap locations rather than page URLs. Google does not accept index files that list other index files, so sites needing more organisational structure should maintain several separate index files, each submitted in its own right, rather than nesting indexes.
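An index file can be generated the same way as a regular sitemap, with `sitemapindex` and `sitemap` elements in place of `urlset` and `url`. This is a minimal sketch; the function name and example URLs are illustrative.

```python
import xml.etree.ElementTree as ET
from typing import Iterable

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap_index(sitemap_urls: Iterable[str]) -> bytes:
    """Build a sitemap index listing the absolute URLs of child sitemaps."""
    ET.register_namespace("", SITEMAP_NS)
    index = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for loc in sitemap_urls:
        entry = ET.SubElement(index, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = loc
    return ET.tostring(index, encoding="utf-8", xml_declaration=True)
```

A call such as `build_sitemap_index(["https://example.com/sitemap-products-1.xml.gz", "https://example.com/sitemap-blog.xml.gz"])` produces a file that can be referenced from robots.txt and submitted once in Search Console.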

Change notification mechanisms alert search engines to sitemap updates through Search Console sitemap resubmission, accurate lastmod values in sitemaps that are already being fetched, and automatic notification through CMS integrations. The older HTTP ping URLs should not be relied on, as Google deprecated its sitemap ping endpoint in 2023. Resubmission after significant content additions or structural changes ensures timely discovery. However, excessive resubmission without actual changes can be counterproductive, so trigger notifications only when meaningful updates occur.

Optimising Priority and Change Frequency Values

Strategic use of priority and change frequency declarations helps search engines understand relative importance and optimal crawl patterns despite these signals not being direct ranking factors.

Priority value strategy uses the 0.0 to 1.0 scale indicating relative importance within your site rather than absolute importance across the web. Setting homepage and key landing pages to 1.0, important category and product pages to 0.8, standard content pages to 0.5, and supporting pages to 0.3 creates clear importance hierarchy. Avoid setting everything to 1.0 as this dilutes signal effectiveness. Priority indicates which pages warrant preferential crawling when crawl budget constraints exist.

Change frequency declarations include values like always, hourly, daily, weekly, monthly, yearly, and never, suggesting optimal recrawl intervals. Set frequently updated content like news or stock prices to daily or hourly, regularly updated product pages to weekly, stable content like guides to monthly, and static pages like about sections to yearly. These suggestions help search engines optimise crawl scheduling though they don't guarantee specific crawl frequencies.

The reality of priority and frequency impact is that these values are hints at best. Google's sitemap documentation states that Google ignores priority and changefreq values altogether; actual crawl frequency depends on discovered update patterns, crawl budget allocation, and content popularity. Other search engines may still read the values, so if you include them, keep them accurate and aligned with actual patterns.

Lastmod timestamp accuracy matters more than priority or frequency as search engines use modification dates to identify changed content warranting recrawl. Accurate lastmod values enable efficient recrawling by informing crawlers which pages actually changed since last visit. Inaccurate timestamps that change without actual content modifications waste crawl budget whilst missing actual updates delays reindexing. Lastmod should reflect genuine content changes not template updates or minor modifications.
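One way to keep lastmod honest is to update it only when a hash of the page's main content changes. The sketch below assumes the main content can be extracted separately from templates; the in-memory dictionary stands in for whatever datastore a real system would use.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, Optional, Tuple

# url -> (content hash, lastmod string); a stand-in for a real datastore.
Store = Dict[str, Tuple[str, str]]


def update_lastmod(store: Store, url: str, main_content: str,
                   now: Optional[datetime] = None) -> str:
    """Return the lastmod for a URL, changing it only when content changes."""
    digest = hashlib.sha256(main_content.encode("utf-8")).hexdigest()
    previous = store.get(url)
    if previous and previous[0] == digest:
        return previous[1]  # content unchanged: keep the old timestamp
    # Timezone-aware UTC timestamp in W3C datetime format.
    stamp = (now or datetime.now(timezone.utc)).isoformat(timespec="seconds")
    store[url] = (digest, stamp)
    return stamp
```

Because the hash covers only the main content, template or navigation changes leave lastmod untouched.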

Avoiding common mistakes includes not setting every page to priority 1.0 defeating the relative importance signal, declaring unrealistic change frequencies misaligned with actual update patterns, updating lastmod values when content doesn't actually change, and omitting lastmod entirely when accurate timestamps are available. Thoughtful, accurate signal implementation provides more value than automated generic values across all URLs.

Segment-specific optimisation tailors priority and frequency values by sitemap rather than site-wide defaults. High-priority product sitemaps might use higher average priority values than standard catalog sitemaps. News sitemaps use hourly or daily change frequency whilst archive sitemaps use monthly or yearly. This segmented approach provides more accurate signals than one-size-fits-all values.

Specialised Sitemap Types and Extensions

Beyond basic XML sitemaps, specialised formats address specific content types requiring additional metadata or accelerated discovery.

Image sitemaps enhance image discovery and indexing by listing the image URLs associated with each page. Google deprecated the caption, title, geographic location, and license tags in 2022, so the image location URL is now the only image-specific field it reads. According to Google's guidelines, image sitemaps can include up to 1,000 images per URL, useful for galleries or product pages with multiple images. Implementing image sitemaps improves image search visibility, which is particularly important for e-commerce and visual content sites. Context about what an image shows now has to come from the page itself, for example through alt text and surrounding copy.
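An image entry adds `image:image` elements, each with an `image:loc`, under a regular `url` element. The following sketch builds one such entry with the standard library; the function name and example URLs are illustrative.

```python
import xml.etree.ElementTree as ET
from typing import List

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"
MAX_IMAGES_PER_URL = 1000  # Google's documented per-URL limit


def build_image_url_entry(page_url: str, image_urls: List[str]) -> ET.Element:
    """Build a <url> element listing the images that appear on one page."""
    if len(image_urls) > MAX_IMAGES_PER_URL:
        raise ValueError("too many images for a single URL entry")
    url = ET.Element(f"{{{SITEMAP_NS}}}url")
    ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
    for image_url in image_urls:
        image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
        ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return url
```

The returned element would be appended to a `urlset` root whose serialised form declares both namespaces.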

Video sitemaps provide video-specific metadata including video file locations, thumbnail URLs, titles, descriptions, durations, view counts, and publication dates. Video sitemaps significantly improve video discovery and potential inclusion in video search results. Required metadata includes the thumbnail URL, title, description, and either the video file location or the player location, whilst optional fields like view count and family-friendly indicators provide additional context. Australian content creators and businesses using video marketing benefit substantially from proper video sitemap implementation.

News sitemaps accelerate discovery of timely content through a news-specific protocol supporting rapid indexing and inclusion in Google News. News sitemaps include publication name and language, article title, and publication date (Google no longer uses the keywords tag), and should list only articles published in the last 2 days. Publishers regularly updating news sitemaps throughout the day enable near-real-time discovery of breaking news and timely content. This specialised format proves essential for news publishers and media sites requiring immediate indexation.
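The two-day window is easy to enforce when the news sitemap is regenerated. This sketch assumes articles are available as (URL, publication time) pairs with timezone-aware timestamps; the names are illustrative.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

NEWS_WINDOW = timedelta(days=2)  # articles older than this are dropped


def news_eligible(articles: List[Tuple[str, datetime]],
                  now: datetime) -> List[Tuple[str, datetime]]:
    """Keep only articles published within the last two days."""
    cutoff = now - NEWS_WINDOW
    return [a for a in articles if cutoff <= a[1] <= now]
```

Articles that fall out of the window should remain in a regular blog or archive sitemap so they stay discoverable.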

Mobile sitemaps historically designated mobile-specific URLs but have largely been deprecated with responsive design becoming standard. Sites using separate mobile URLs (m.example.com) historically used mobile sitemaps though responsive design eliminating URL variations makes mobile sitemaps unnecessary for most modern sites. Focus on responsive design and proper mobile optimisation rather than mobile-specific sitemaps for new implementations.

Alternate language sitemaps for multilingual sites can indicate hreflang relationships within the sitemap structure as an alternative to on-page implementation. Whilst on-page hreflang is the more familiar approach, Google treats on-page, HTTP header, and sitemap-based hreflang as equivalent, and the sitemap-based method provides centralised management for complex multilingual sites. This approach requires careful implementation ensuring bidirectional relationships and proper ISO language-region codes.

Extension and custom protocols enable additional metadata through namespace extensions for specific purposes like PageMap annotations, custom change frequency definitions, or proprietary metadata. Whilst major search engines support standard extensions like image and video sitemaps, custom extensions should be implemented cautiously ensuring compatibility with target search engines.

Monitoring and Maintaining Sitemap Performance

Systematic monitoring reveals indexation efficiency, identifies technical problems, and guides optimisation efforts through data-driven insights.

Google Search Console sitemap reports provide the primary monitoring interface, showing how many URLs Google discovered in each sitemap, sitemap parsing errors and warnings, and a last read timestamp indicating when Google accessed the sitemap. Filtering the Page indexing report by sitemap then shows how many of the submitted URLs are indexed, with individual URL status available for debugging. Significant gaps between submitted and indexed URLs indicate problems warranting investigation including content quality issues, duplicate content, crawl budget constraints, or technical indexation barriers.

Coverage analysis comparing different sitemaps reveals which content types index efficiently versus struggling. A product sitemap showing 95% indexation whilst a blog sitemap shows 40% indexation suggests content quality or optimisation differences warranting attention. This comparative analysis identifies optimisation priorities focusing efforts on underperforming content categories.
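The comparison reduces to a per-sitemap ratio. The sketch below assumes submitted and indexed counts have already been exported from Search Console by hand or by script; the 60% threshold is an arbitrary example, not a recommended benchmark.

```python
from typing import Dict, List, Tuple


def indexation_rates(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Map sitemap name -> indexed percentage, from (submitted, indexed) counts."""
    return {
        name: round(100 * indexed / submitted, 1)
        for name, (submitted, indexed) in counts.items()
        if submitted  # skip empty sitemaps to avoid division by zero
    }


def underperformers(rates: Dict[str, float], threshold: float = 60.0) -> List[str]:
    """List sitemaps whose indexation rate falls below the threshold."""
    return sorted(name for name, rate in rates.items() if rate < threshold)
```

With counts of 950 indexed from 1,000 submitted for products and 200 from 500 for blog posts, the rates come out at 95.0 and 40.0, and only the blog sitemap is flagged.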

Error identification through sitemap reports reveals specific problems including parsing errors from malformed XML, URL errors from incorrect formatting or inaccessible URLs, and compression errors from corrupted gzip files. Search Console provides detailed error messages guiding remediation. Regular monitoring catches errors quickly preventing extended periods of dysfunctional sitemap submission.

Indexation velocity tracking measures how quickly newly added URLs get indexed after sitemap submission. Comparing publication date to indexation date reveals typical lag times. Excessive delays suggest crawl budget constraints, low content priority, or technical barriers requiring optimisation. Rapid indexation validates effective sitemap implementation and content value.
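Lag can be summarised with a median so that a few slow outliers do not distort the picture. This sketch assumes publication and first-indexed dates have been collected per URL by some other means, such as URL inspection records.

```python
from datetime import date
from statistics import median
from typing import List, Tuple


def indexation_lag_days(pairs: List[Tuple[date, date]]) -> List[int]:
    """Days between publication and first observed indexation for each URL."""
    return [(indexed - published).days for published, indexed in pairs]


def median_lag_days(pairs: List[Tuple[date, date]]) -> float:
    """Median indexation lag in days across all measured URLs."""
    return median(indexation_lag_days(pairs))
```

Tracking this figure per sitemap segment shows whether a change, such as splitting out new products, actually shortened the lag.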

Resubmission protocols balance keeping search engines informed with avoiding excessive submissions. Resubmit after major content updates, structural changes affecting multiple URLs, or technical fixes addressing previous errors. Avoid resubmitting unchanged sitemaps as this wastes search engine resources without providing value. Many CMS platforms regenerate sitemaps automatically when content changes, and because search engines refetch known sitemaps periodically, accurate lastmod values usually make manual resubmission unnecessary. Legacy ping mechanisms should not be relied on, as Google deprecated its sitemap ping endpoint in 2023.

Regular audits verify sitemap accuracy by crawling sites comparing discovered URLs to sitemap contents, checking for broken links or redirect chains in sitemap URLs, validating lastmod timestamp accuracy, and ensuring priority and frequency values align with actual patterns. Quarterly audits identify drift between sitemap declarations and site reality maintaining sitemap reliability.
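The core of such an audit is a set comparison between what a crawl finds and what the sitemaps declare. This sketch assumes both URL sets have already been collected and normalised; the dictionary keys are illustrative labels.

```python
from typing import Dict, List, Set


def audit_sitemap(crawled: Set[str], in_sitemap: Set[str]) -> Dict[str, List[str]]:
    """Compare crawled URLs with sitemap URLs and report the differences."""
    return {
        # Indexable pages the crawl found that no sitemap lists.
        "missing_from_sitemap": sorted(crawled - in_sitemap),
        # Sitemap entries the crawl never reached: possibly orphaned or removed.
        "not_found_in_crawl": sorted(in_sitemap - crawled),
    }
```

URLs in the second list are worth checking for broken links, redirects, or missing internal links.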

Automated monitoring alerts notify when indexation rates drop significantly, sitemap errors appear in Search Console, submitted URL counts change unexpectedly, or technical issues prevent sitemap access. Proactive alerts enable rapid response preventing minor issues from becoming major problems.

Common Sitemap Mistakes and How to Avoid Them

Understanding typical mistakes helps Australian website operators avoid costly errors that compromise sitemap effectiveness and indexation efficiency.

Including noindexed or blocked URLs in sitemaps sends conflicting signals where sitemaps suggest pages warrant indexation whilst robots meta tags or robots.txt indicate otherwise. Sitemaps should only include URLs intended for indexation. Exclude URLs with noindex tags, pages blocked by robots.txt, and content requiring authentication. Mixed signals confuse search engines reducing sitemap credibility.

Submitting overly large sitemaps exceeding 50,000 URLs or 50MB uncompressed triggers rejection or partial processing. Monitor sitemap sizes, staying well below limits with buffer for growth. Split large sitemaps when approaching thresholds rather than exceeding limits. Gzip compression reduces transfer size but does not help here, because both limits apply to the uncompressed file.

Neglecting lastmod timestamps or using inaccurate values prevents efficient recrawling. When lastmod timestamps update without actual content changes, search engines waste resources recrawling unchanged content. When timestamps don't update despite content changes, reindexing delays hurt timely content. Implement accurate timestamp tracking reflecting genuine content modifications.

Ignoring sitemap errors in Search Console allows problems to persist degrading indexation over time. Regular review of sitemap reports identifies issues requiring attention. Addressing errors promptly maintains sitemap functionality and search engine trust. Persistent unresolved errors may lead search engines to deprioritize sitemap content.

Creating excessive sitemap segmentation generates management overhead without proportional benefits. Whilst segmentation provides value, excessive granularity creates dozens of sitemaps requiring individual monitoring and maintenance. Balance granularity with practical management capabilities. Most sites function well with 5-15 sitemaps rather than 50+ micro-segmented files.

Using relative URLs instead of absolute URLs violates protocol requirements as sitemaps must contain fully qualified URLs including protocol (https://) and domain. Relative URLs like /products/item.html get rejected. Always use absolute URLs like https://example.com/products/item.html in sitemap implementations.

Forgetting sitemap index files when implementing multiple sitemaps prevents coordinated submission and monitoring. The sitemap index acts as master coordinator ensuring search engines discover all individual sitemaps. Submitting individual sitemaps separately without index file creates management complexity and potential discovery gaps.

Ready to Optimise Your Website's Sitemap Architecture?

XML sitemap architecture represents foundational technical SEO infrastructure enabling efficient search engine discovery and indexation at scale. For large Australian websites managing thousands or tens of thousands of pages, strategic sitemap segmentation, accurate metadata, and systematic monitoring transform sitemaps from basic URL lists into sophisticated tools guiding search engine behaviour.

Proper sitemap implementation combined with ongoing monitoring and optimisation ensures your valuable content gets discovered, crawled, and indexed efficiently whilst providing data-driven insights into indexation performance across content types and categories.

Need expert guidance implementing advanced sitemap architecture for your large-scale website? Maven Marketing Co. specialises in technical SEO for complex Australian websites. Our team implements comprehensive sitemap strategies from architecture planning through dynamic generation, Search Console configuration, and ongoing monitoring ensuring optimal indexation efficiency.

We don't just generate basic sitemaps. We develop sophisticated segmented architectures tailored to your content types, business priorities, and technical infrastructure whilst implementing monitoring systems providing ongoing visibility into indexation performance.

Contact Maven Marketing Co. today for a comprehensive sitemap architecture audit. We'll analyse your current implementation, identify optimisation opportunities, and develop detailed roadmap for advanced sitemap architecture maximising search engine discovery and indexation efficiency. Let's ensure search engines efficiently discover and index your valuable content through optimised sitemap infrastructure.

Frequently Asked Questions

Q: How many URLs should we include in each XML sitemap file, and when should we split into multiple sitemaps?

Individual sitemap files can contain at most 50,000 URLs and must not exceed 50MB uncompressed. However, best practice suggests staying well below these limits, typically 30,000-40,000 URLs per sitemap, providing buffer for growth whilst maintaining manageable file sizes. Split into multiple sitemaps when approaching these limits or when logical content segmentation improves monitoring and management. Content-based splitting (products vs blog vs categories) provides more value than arbitrary size-based splitting, enabling targeted tracking and optimisation by content type.

Q: Do priority values and change frequency declarations actually influence how often Google crawls our pages?

Priority and change frequency are hints at best. Google's documentation states that it ignores both values and determines crawl frequency from discovered update patterns, crawl budget allocation, and content popularity. Other search engines may still read them, so accurate declarations aligned with actual patterns do no harm, but they don't guarantee specific crawl schedules. Focus on accurate lastmod timestamps indicating genuine content changes, as these provide more reliable signals than priority or frequency values. If you do set priority and change frequency, use them to indicate relative importance within your site and typical update intervals matching reality.

Q: Should Australian websites include every single page in XML sitemaps, or are there pages better left out?

Include only indexable, valuable pages in XML sitemaps while excluding low-quality content, duplicate pages, parameterized URLs, noindexed pages, and content behind authentication. Sitemaps should list pages you want search engines to discover and index, not every possible URL. Exclude admin areas, user account pages, search result pages, filter combinations, paginated pages beyond page 1 (unless implementing view-all), and thin content pages. Quality over quantity ensures sitemaps signal valuable content rather than overwhelming search engines with low-value URLs that waste crawl budget and dilute importance signals.