Key Takeaways

- Pagination creates legitimate multiple-page sequences for user navigation but requires careful SEO implementation to prevent search engines from treating paginated pages as duplicate content competing against each other

- Google announced in March 2019 that it no longer uses rel="next" and rel="prev" as indexing signals, but the tags remain valid HTML that other search engines such as Bing may read as hints, so implementing them is low-cost even though Google does not require them

- View-all pages consolidating complete paginated sequences into single URLs provide search engine-friendly alternatives whilst paginated versions serve users preferring page-by-page navigation

- Excessive pagination extending beyond necessary page counts wastes crawl budget as search engines discover and crawl hundreds of pagination pages containing progressively less valuable content

- Canonical tag implementation on paginated sequences requires strategic decisions between self-referencing canonicals treating each page as unique content versus consolidating all pages to page 1 or view-all alternatives

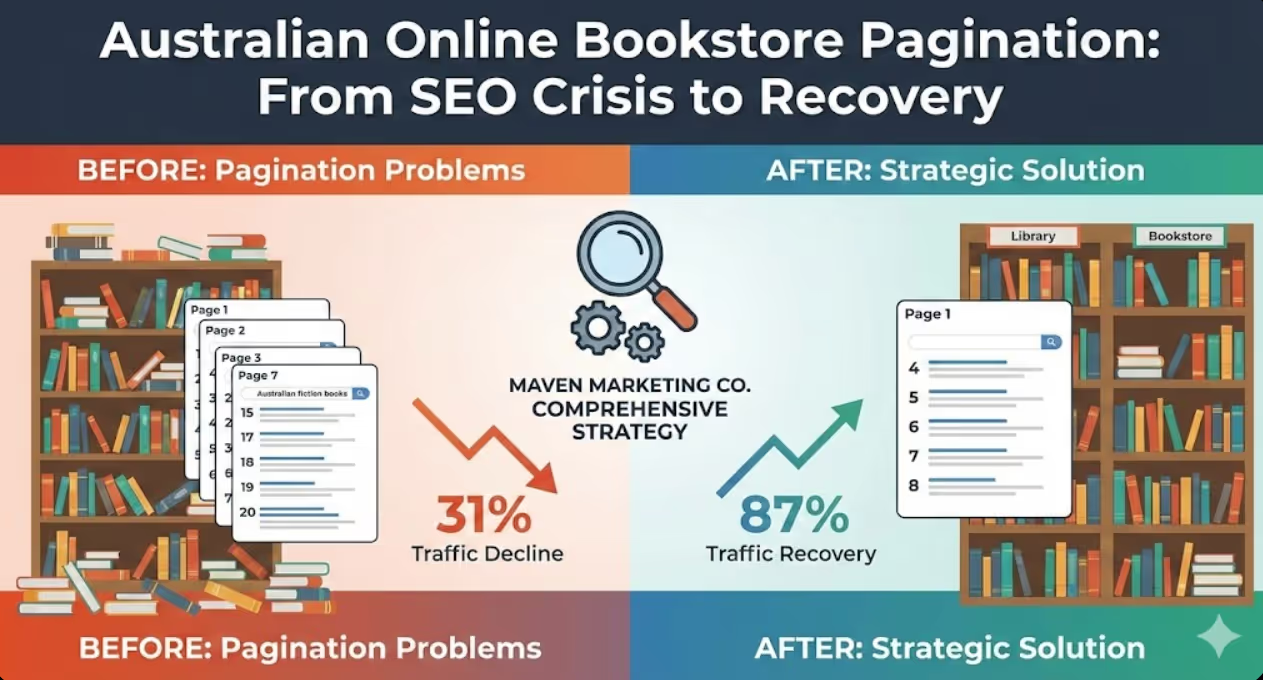

An Australian online bookstore with 45,000 titles organised their catalogue into category pages displaying 24 books per page, creating pagination sequences extending 50+ pages deep for popular categories. The implementation followed basic pagination practices including page number parameters, previous/next navigation links, and proper internal linking structure. The marketing team considered pagination a solved technical problem requiring no ongoing attention.

Twelve months later, organic traffic analysis revealed concerning patterns. Category pages that previously ranked in positions 3-5 for target keywords now appeared in positions 15-20, with multiple paginated variations competing in search results. Searches for "Australian fiction books" returned the main category page, page 2, page 3, and page 7 all ranking simultaneously across positions 12-22 rather than presenting a single strong result in top positions. Overall category page traffic had declined 31% despite no content quality degradation or backlink losses.

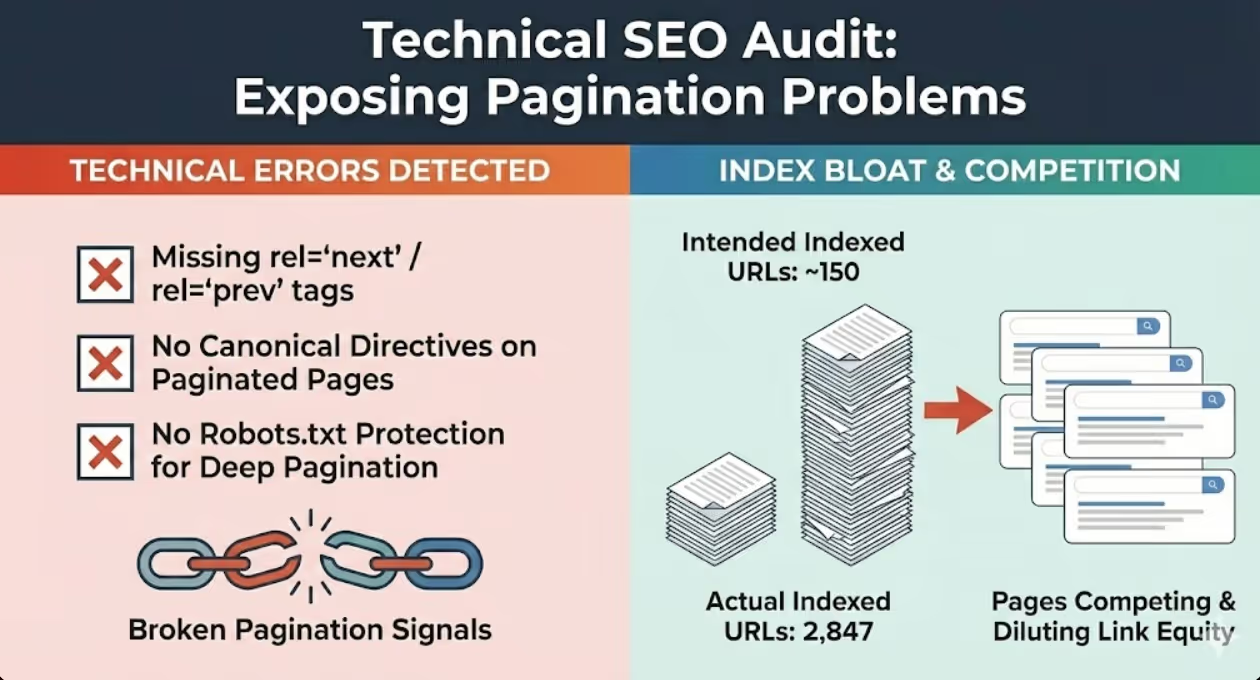

A technical SEO audit exposed the pagination problems. The site had implemented no rel="next" or rel="prev" tags, no canonical directives on paginated pages, and no robots.txt protection against excessive pagination crawling. Search Console showed Google had indexed 2,847 pagination URLs from category sequences that should have presented approximately 150 meaningful category landing pages. Paginated pages were competing against each other for rankings whilst diluting link equity across multiple URLs rather than consolidating authority.

Google's guidance on pagination indicates that implementation choices affect how search engines crawl, index, and rank paginated content; misconfigured pagination can waste crawl budget and fragment ranking signals.

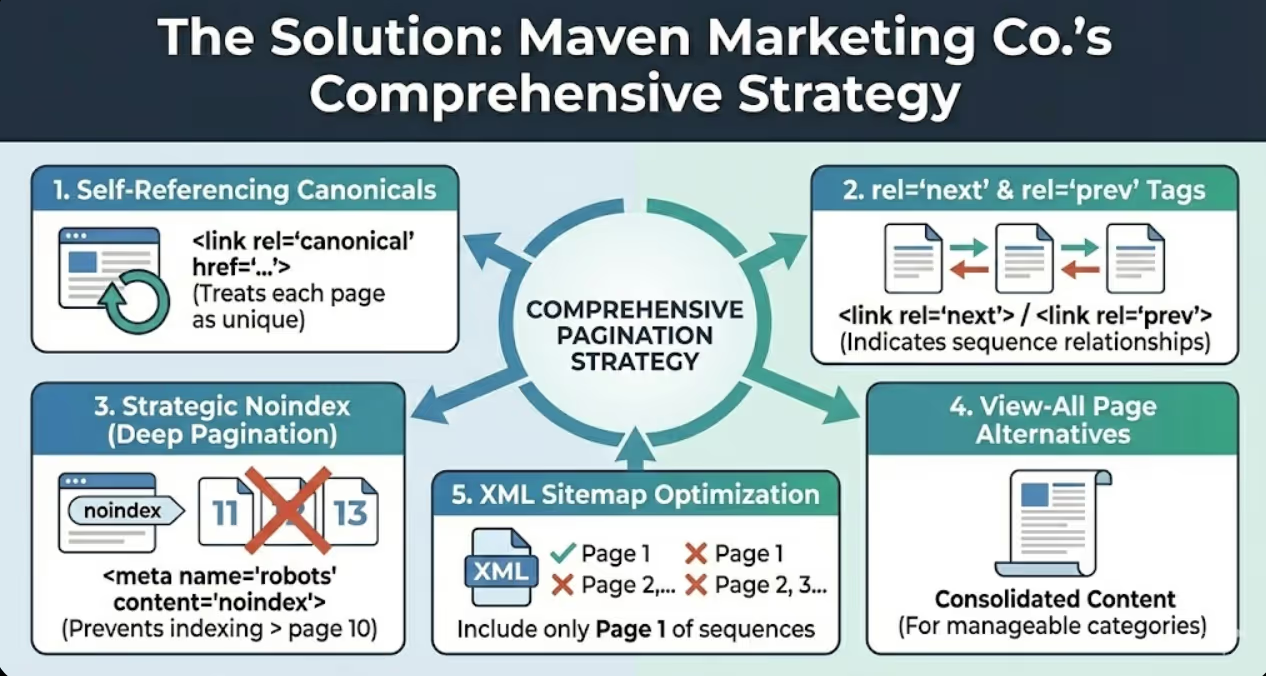

Maven Marketing Co. implemented a comprehensive pagination strategy: self-referencing canonical tags on all paginated pages, treating each as unique content worthy of individual indexing; rel="next" and rel="prev" tags indicating pagination sequence relationships despite Google's deprecation; strategic noindex on pagination beyond page 10, preventing indexing of deep pagination containing progressively less relevant content; view-all page alternatives for categories with manageable product counts; and XML sitemap optimisation including only page 1 of each sequence whilst excluding deep pagination.

Within eight weeks, indexed pagination URLs decreased from 2,847 to 743 as search engines deindexed deep pagination whilst maintaining first few pages of each sequence. Keyword rankings consolidated with category page 1 URLs recovering positions 4-8 for primary category keywords whilst paginated variations stopped competing. Category page organic traffic recovered 87% of the previous decline within twelve weeks as ranking signal consolidation restored visibility.

The transformation required understanding pagination's dual nature serving user experience needs whilst creating search engine challenges that proper technical implementation resolves.

Understanding Pagination and Search Engine Challenges

Effective pagination SEO requires foundational understanding of what pagination accomplishes, why it exists, and what specific problems it creates for search engine crawling and indexing.

Pagination purpose addresses user experience challenges when displaying hundreds or thousands of items in single pages would create unusably long scrolling experiences, slow page load times from rendering excessive content, and overwhelming information density preventing users from finding relevant items. Pagination breaks content into manageable sequential pages typically displaying 10-50 items per page depending on content type, enabling faster page loads through reduced content rendering, providing clear navigation through numbered page links and previous/next buttons, and improving usability by presenting digestible content segments rather than endless scrolling.

Pagination types vary by implementation approach with different SEO implications. Parameter-based pagination using ?page=2 or &p=3 query strings represents the most common implementation creating distinct URLs for each page in sequences. Path-based pagination using /category/page/2/ or /category-p2/ URL structures embeds page numbers in URL paths rather than parameters. AJAX-based pagination loads additional content dynamically without URL changes, avoiding pagination URLs entirely but creating different SEO challenges around content discoverability. Infinite scroll implementations automatically load next page content when users scroll to bottom, providing seamless experience whilst creating indexing challenges because content lacks distinct URLs. Load-more buttons require user interaction triggering content loading, similar to infinite scroll in creating content without dedicated URLs.
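The difference between parameter-based and path-based pagination matters most when auditing URLs, because each style needs its own parsing rule. The following is a minimal sketch, assuming the parameter names `page` and `p` and the two path shapes mentioned above; the function name `page_number` is hypothetical and real sites may need additional patterns.

```python
import re
from urllib.parse import urlparse, parse_qs

# Assumed conventions, taken from the URL shapes described above.
PAGE_PARAMS = ("page", "p")
PATH_PATTERNS = (
    re.compile(r"/page/(\d+)/?$"),  # e.g. /category/page/2/
    re.compile(r"-p(\d+)/?$"),      # e.g. /category-p2/
)


def page_number(url: str) -> int:
    """Return the pagination page a URL refers to, defaulting to 1."""
    parts = urlparse(url)
    query = parse_qs(parts.query)
    # Parameter-based pagination: ?page=2 or &p=3
    for name in PAGE_PARAMS:
        values = query.get(name)
        if values and values[0].isdecimal():
            return int(values[0])
    # Path-based pagination: page number embedded in the path
    for pattern in PATH_PATTERNS:
        match = pattern.search(parts.path)
        if match:
            return int(match.group(1))
    # No pagination marker found: treat as the first page
    return 1
```

AJAX, infinite scroll, and load-more implementations that never change the URL cannot be classified this way, which is the discoverability problem described above.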

Search engine pagination challenges emerge from fundamental tensions between user experience benefits and crawling/indexing complexities. Duplicate content concerns arise when paginated pages contain overlapping content including repeated headers, footers, navigation, and descriptions differing only in the specific items displayed per page. Crawl budget waste occurs when search engines discover and crawl hundreds of pagination pages in sequences extending far beyond necessary depths consuming resources better allocated to unique content pages. Ranking signal dilution happens when backlinks, authority, and engagement metrics distribute across multiple paginated URLs rather than consolidating to single strong pages. Content discovery problems emerge when important content buried on pagination page 15 or 20 receives inadequate crawling attention compared to items on page 1 or 2. Keyword cannibalization results when multiple paginated pages from same sequence rank for identical queries competing against each other rather than presenting unified strong results.

Historical Google guidance evolution reflects changing approaches to pagination SEO, so it helps to separate retired recommendations from current ones. Google originally recommended rel="next" and rel="prev" link annotations indicating pagination sequence relationships, view-all pages as preferred alternatives consolidating paginated content, and avoiding canonicalisation from paginated pages to page 1, which hides content from indexing. In March 2019, Google announced that it no longer used rel="next" and rel="prev" as indexing signals and had not done so for some time, creating confusion about optimal pagination strategies. Subsequent commentary from Google indicated that the tags do no harm and can stay in place, and Bing has stated that it reads them as hints for page discovery and site structure, so implementing them can still be worthwhile even though Google does not rely on them.

Modern pagination best practices emphasise flexibility rather than a single prescribed approach, recognising that optimal solutions vary by content type, pagination depth, and business priorities. Self-referencing canonical tags on paginated pages treat each page as unique indexable content, avoiding content hiding whilst giving search engines a clear preferred URL for each page. Strategic use of rel="next" and rel="prev" tags provides pagination relationship signals to the search engines that still read them. View-all page alternatives offer search engine-friendly consolidated content whilst maintaining paginated navigation for users preferring sequential browsing. Pagination limits prevent infinite sequences by implementing maximum page counts or item-per-page controls. Google's pagination documentation confirms that multiple valid approaches exist for pagination implementation depending on specific website requirements and content characteristics.

Australian e-commerce pagination considerations include unique factors affecting local businesses. Product catalogue sizes varying dramatically between small boutiques with hundreds of items versus large retailers with hundreds of thousands of products require different pagination strategies scaling appropriately. Mobile-first indexing emphasises mobile pagination experience because Google primarily uses mobile page versions for indexing making mobile pagination implementation critical. Local search competition in Australian markets means pagination inefficiency can disadvantage smaller Australian retailers against international competitors implementing more sophisticated pagination strategies. Seasonal inventory fluctuations common in Australian retail create pagination depth variations requiring flexible implementations accommodating changing catalogue sizes without creating orphaned pagination URLs or indexing problems during low inventory periods.

Rel Next and Prev Tag Implementation

Despite Google's 2019 announcement that it no longer uses them, rel="next" and rel="prev" tags can still provide value as pagination hints for other search engines, which makes them worth considering on Australian websites with significant paginated content.

Tag purpose and syntax communicate pagination sequence relationships to search engines through simple HTML link elements. The rel="prev" tag on page 2 includes <link rel="prev" href="https://example.com.au/category?page=1"> indicating previous page in sequence. The rel="next" tag on page 2 includes <link rel="next" href="https://example.com.au/category?page=3"> indicating following page. Page 1 of sequences includes only rel="next" tag because no previous page exists. Final pages include only rel="prev" tag because no next page follows. Mid-sequence pages include both tags establishing bidirectional relationships that search engines use for understanding content structure.
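The first-page, mid-sequence, and final-page rules above can be sketched as a small template helper. This is an illustrative example rather than a prescribed implementation: the function name `pagination_link_tags` is hypothetical, and it assumes every page, including page 1, is addressed with a `?page=N` parameter as in the example URLs above.

```python
from html import escape


def pagination_link_tags(base_url: str, page: int, last_page: int) -> list[str]:
    """Build the rel="prev"/rel="next" link elements for one page of a sequence."""
    if not 1 <= page <= last_page:
        raise ValueError("page must be between 1 and last_page")

    def url_for(n: int) -> str:
        # Assumption: absolute URLs with a ?page=N parameter for every page.
        return f"{base_url}?page={n}"

    tags = []
    if page > 1:  # every page except the first has a previous page
        tags.append(f'<link rel="prev" href="{escape(url_for(page - 1))}">')
    if page < last_page:  # every page except the last has a next page
        tags.append(f'<link rel="next" href="{escape(url_for(page + 1))}">')
    return tags
```

Calling `pagination_link_tags("https://example.com.au/category", 2, 5)` yields both tags, whilst page 1 yields only the rel="next" tag and page 5 only the rel="prev" tag. The output belongs in the HTML head.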

Implementation requirements ensure tags function correctly as pagination signals. Tags must appear in HTML head section rather than body to be recognised by search engines. URLs in tags must be absolute complete URLs including protocol and domain rather than relative paths preventing resolution ambiguity. Tagged URLs must be accessible and return 200 status codes rather than redirecting or erroring which invalidates pagination signals. Sequences must be logically consistent without gaps, loops, or contradictions where rel="next" points to page N whilst that page's rel="prev" points elsewhere creating conflicting signals. Self-referencing must be avoided where page 2's rel="next" points to page 2 itself creating circular references that search engines cannot interpret meaningfully.

Common implementation mistakes prevent tags from functioning correctly, so awareness of them matters for successful deployment. Missing tags on some pages in sequences create incomplete pagination chains where search engines cannot follow entire sequences. Incorrect URL formatting, including HTTP versus HTTPS, www versus non-www variations, or relative versus absolute paths, creates mismatches preventing tag recognition. Template errors occur where dynamic tag generation fails on specific page numbers due to programming logic bugs. Conflicting signals arise where tags indicate a different pagination structure than the actual page content or navigation presents. Incomplete rollout happens where developers add tags to pages 1 and 2 during initial development but fail to extend the implementation across all pagination pages throughout the site.

Post-deprecation value can justify continued implementation despite Google's position that it no longer uses the tags. Bing has indicated that it reads the tags as hints for page discovery and site structure, making implementation potentially beneficial for non-Google search visibility. The tags are also standard HTML link relations, so browsers and assistive tools are free to use them. Documentation value helps developers and SEO auditors understand site structure through examining tag implementation. Minimal implementation cost means adding tags requires negligible development resources whilst providing potential upside without meaningful downside risk.

Testing and validation confirm proper implementation across pagination sequences. Manual inspection of page source on representative paginated pages confirms tags appear with correct syntax. Screaming Frog and similar crawling tools extract rel="next" and rel="prev" tags across sites, enabling systematic validation of implementation completeness. The Google Search Console URL Inspection tool shows the HTML Google fetched for a specific URL, which confirms whether the tags are present in what Googlebot sees, though it does not report on whether Google uses them. Pagination sequence mapping documents the intended sequence structure so it can be compared against actual tag implementation to identify discrepancies requiring correction.
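Once a crawler has exported the tags, the consistency rules (no self-references, no missing targets, reciprocal prev/next pairs) can be checked mechanically. A minimal sketch follows, assuming the crawl export has already been loaded into a dictionary keyed by URL; the data shape and the function name `find_sequence_problems` are assumptions for illustration, not the output format of any particular tool.

```python
from typing import Optional

# Assumed shape: {"prev": url or None, "next": url or None} for each crawled URL.
Links = dict[str, Optional[str]]


def find_sequence_problems(pages: dict[str, Links]) -> list[str]:
    """Report self-references, dangling targets, and non-reciprocal prev/next tags."""
    problems = []
    for url, links in pages.items():
        for rel, opposite in (("next", "prev"), ("prev", "next")):
            target = links.get(rel)
            if target is None:
                continue  # first and last pages legitimately omit one tag
            if target == url:
                problems.append(f"{url}: rel={rel} points to itself")
            elif target not in pages:
                problems.append(f"{url}: rel={rel} target was not crawled: {target}")
            elif pages[target].get(opposite) != url:
                problems.append(f"{url}: rel={rel} is not reciprocated by {target}")
    return problems
```

An empty list means every rel="next" is answered by a matching rel="prev" and vice versa; anything else is a discrepancy to compare against the intended sequence map.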

Canonical Tag Strategy for Paginated Content

Canonical tag implementation on paginated sequences requires strategic decisions balancing content consolidation against individual page indexing needs.

Self-referencing canonicals treat each paginated page as unique content worthy of individual indexing. Implementation includes page 1 canonical pointing to itself <link rel="canonical" href="https://example.com.au/category">, page 2 canonical pointing to itself <link rel="canonical" href="https://example.com.au/category?page=2">, and subsequent pages following same pattern with each page declaring itself canonical. This approach allows search engines to index and rank individual pagination pages independently, distributes ranking opportunities across sequence enabling page 2 or 3 to rank for specific queries, preserves all content accessibility for search engine indexing rather than hiding paginated content, and avoids content consolidation that view-all alternatives provide whilst maintaining pagination user experience.

Self-referencing canonical advantages include complete content indexing without search engine confusion about preferred URLs, flexibility for different paginated pages to rank for distinct keyword variations, avoidance of content hiding that consolidation approaches can create, and straightforward implementation requiring minimal decision-making about consolidation logic. Australian e-commerce businesses with unique products or content on each paginated page benefit from self-referencing approach enabling page 5 with specific products to rank for queries directly relevant to those products rather than forcing all traffic to page 1 containing different products.

Consolidation to page 1 represents alternative approach where all paginated pages canonicalise to first page in sequence. Implementation includes page 1 self-referencing canonical, page 2 canonical pointing to page 1 <link rel="canonical" href="https://example.com.au/category">, and all subsequent pages pointing to page 1 consolidating all ranking signals. This approach concentrates link equity and authority to single page 1 URL, prevents pagination pages from competing against each other in search results, simplifies user-facing SEO by promoting single URL per category or content type, and reduces indexed page counts potentially improving crawl efficiency.

Consolidation to page 1 disadvantages include hiding paginated content from indexing, preventing search engines from ranking pages 2+ independently; sending users who arrive from search to page 1, where they must navigate onward to find content that actually sits on a deeper page; declaring pages with different items to be duplicates of page 1, a mismatch that can lead search engines to ignore the canonical, which is a hint rather than a directive; and oversimplifying content relationships when paginated pages contain unique valuable content warranting independent indexing. Australian content publishers with archive pages where page 20 contains genuinely different content than page 1 should avoid a consolidation approach that hides historical content from indexing.

View-all page canonicalisation offers third approach where paginated versions exist for user experience whilst complete view-all versions receive canonical preference. Implementation includes view-all page at /category/view-all containing complete unpaginated content list, paginated page 1 through N all canonical to view-all version, and view-all page self-referencing its own URL as canonical. This approach provides search engine single comprehensive URL containing all content, maintains paginated navigation for users preferring page-by-page browsing, consolidates ranking signals to single strong view-all URL, and offers clear separation between user experience pagination and search engine optimisation preferences.
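The three canonical approaches differ only in which URL each paginated page declares. The sketch below makes that explicit, using the example URL shapes from the text; the strategy labels `"self"`, `"page1"`, and `"view_all"` and the function names are hypothetical.

```python
def canonical_url(base_url: str, page: int, strategy: str) -> str:
    """Return the canonical URL a paginated page should declare under a strategy."""
    if page < 1:
        raise ValueError("page must be 1 or greater")
    if strategy == "self":
        # Each page declares itself; page 1 lives at the bare category URL.
        return base_url if page == 1 else f"{base_url}?page={page}"
    if strategy == "page1":
        # Every page in the sequence consolidates to the first page.
        return base_url
    if strategy == "view_all":
        # Every paginated page points at the unpaginated view-all version.
        return f"{base_url}/view-all"
    raise ValueError(f"unknown strategy: {strategy}")


def canonical_tag(base_url: str, page: int, strategy: str) -> str:
    """Render the <link rel="canonical"> element for the page."""
    return f'<link rel="canonical" href="{canonical_url(base_url, page, strategy)}">'
```

Keeping the choice in one function means a category can switch strategy without touching individual templates, which helps when different sequences warrant different treatment.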

View-all implementation challenges create practical limitations despite theoretical elegance. Performance problems emerge when view-all pages attempt rendering thousands of products or items, creating slow page loads that harm Core Web Vitals and user experience. Server resource consumption increases substantially when view-all pages require generating large content sets from databases. Mobile experience suffers particularly badly from view-all pages with excessive content overwhelming the mobile viewport and consuming mobile data. Maintenance complexity increases because each content sequence now has multiple URL variations to manage: the paginated versions plus the view-all alternative. Australian businesses should implement view-all only for sequences with manageable content volumes, typically under 500 items, where complete rendering remains performant.

Strategic canonical decision framework guides implementation choices based on content characteristics. Use self-referencing canonicals when paginated pages contain substantially unique content warranting independent indexing, when sequences are relatively short with 5-10 pages maximum, when different pages target distinct keyword variations, and when crawl budget permits indexing all pagination pages without waste. Use page 1 consolidation when paginated pages contain minimal unique content with mostly identical headers, descriptions, and formatting, when preventing pagination competition for rankings is priority, when simplifying indexed URL counts is important, and when forcing page 1 as single entry point serves user experience goals. Use view-all consolidation when complete content lists can render performantly as single pages, when providing comprehensive single-URL content access benefits users and search engines, when maintaining separate paginated navigation improves specific user journey preferences, and when development resources support maintaining parallel pagination implementations.

Pagination Crawl Budget Management

Excessive pagination depth wastes search engine crawl budget requiring strategic limits and monitoring preventing search engines from discovering and crawling unnecessary pagination sequences.

Crawl budget impact quantifies how pagination affects search engine crawling resources. Each pagination page consumes crawl budget that could target unique content pages, with deep pagination sequences of 50+ pages consuming substantial resources. Search engines discovering pagination through internal links or sitemaps will attempt crawling complete sequences unless technical barriers prevent unnecessary depth. Australian e-commerce sites with limited crawl budget allocations relative to total URL counts face particularly severe impact when pagination waste prevents adequate product page crawling. Crawl efficiency analysis through server log files reveals actual search engine crawling patterns showing what percentage of crawl requests target pagination versus product, category, or content pages providing quantitative measurement of pagination's crawl budget impact.

Strategic pagination limits prevent infinite or excessive sequences through maximum page count implementation. Limiting pagination to 10-20 pages maximum for most content types ensures search engines don't waste resources on extremely deep pagination. Adjusting items-per-page counts to reduce total pagination depth shows 50 items per page requiring fewer pagination pages than 10 items per page for equivalent content volumes. Implementing pagination only where necessary avoiding pagination on content types or categories with few items not justifying sequence creation. Removing empty pagination pages when inventory or content reduces below levels requiring pagination depth that previously existed. Australian retailers with seasonal inventory fluctuations should dynamically adjust pagination depth as catalogue sizes change rather than maintaining fixed pagination structures regardless of content volume.
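The relationship between items per page and pagination depth is simple ceiling division, and running the numbers for a category makes the trade-off concrete. The figures below are illustrative assumptions (a category of 1,200 items), not data from the case study.

```python
def page_count(total_items: int, items_per_page: int) -> int:
    """Number of pagination pages needed to list every item (at least one page)."""
    if items_per_page <= 0:
        raise ValueError("items_per_page must be positive")
    # Integer ceiling division avoids floating-point rounding surprises.
    return max(1, (total_items + items_per_page - 1) // items_per_page)


# Hypothetical category of 1,200 items at three page sizes.
for per_page in (10, 24, 50):
    print(per_page, "items per page ->", page_count(1200, per_page), "pages")
```

In this hypothetical, moving from 10 to 50 items per page cuts the sequence from 120 pages to 24, which is the kind of reduction that brings a category inside a 10-20 page limit.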

Noindex on deep pagination prevents search engine indexing of pagination beyond necessary thresholds whilst allowing crawling for link discovery. Implementation includes identifying an appropriate noindex threshold, typically page 10-15, where content value diminishes significantly; adding <meta name="robots" content="noindex,follow"> to pages beyond the threshold, preventing indexing whilst allowing link following; maintaining canonical and rel="next" tags even on noindexed pages to provide consistent pagination signals; and monitoring Search Console to confirm deep pagination actually drops out of the index. The follow directive paired with noindex lets search engines discover links on deep pagination pages, including links to products or content, whilst preventing the pagination pages themselves from being indexed. Google has indicated that pages left noindexed long term may be crawled less often over time, so items reachable only through deep pagination benefit from additional internal links elsewhere.
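The threshold rule can be expressed as a one-line template decision. A minimal sketch, assuming a threshold of page 10 (the low end of the range suggested above); the function name `robots_meta` and its default are illustrative.

```python
def robots_meta(page: int, noindex_after: int = 10) -> str:
    """Return the robots meta tag for a pagination page given a noindex threshold."""
    # Pages up to and including the threshold stay indexable; deeper pages are
    # noindexed but keep "follow" so their links can still be discovered.
    content = "noindex,follow" if page > noindex_after else "index,follow"
    return f'<meta name="robots" content="{content}">'
```

With the default, page 10 stays indexable and page 11 onwards receives `noindex,follow`; the threshold is best set per category rather than hard-coded site-wide.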

Robots.txt pagination blocking prevents crawling entirely for extreme pagination depths or specific parameter patterns creating crawl waste. Robots.txt path matching supports only the * wildcard and the $ end-of-URL anchor, not regular-expression character classes such as [5-9], so ranges must be written as explicit prefixes. For example, Disallow: /*?page=15 blocks page 15 along with any longer page number starting with 15 (150, 151, and so on), and blocking pages 15-19 takes five such rules. Implementation also includes blocking sort parameter combinations creating pagination variations, and testing robots.txt thoroughly because overly aggressive blocking can accidentally prevent discovery of content that pagination pages link to. Robots.txt represents more aggressive intervention than noindex because blocked pages aren't crawled at all, which prevents link discovery and also means a noindex tag on a blocked page is never seen; noindex allows crawling whilst preventing indexing. Australian businesses should prefer noindex over robots.txt blocking in most cases, reserving robots.txt for truly problematic pagination patterns creating excessive crawl waste.
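Because a mistaken pattern can block far more (or far less) than intended, it helps to test rules before deploying them. The sketch below approximates the wildcard matching that Google documents for robots.txt, where `*` matches any run of characters, a trailing `$` anchors the end of the URL, and everything else, including square brackets, is literal. It is a simplified illustration rather than a full robots.txt parser: it ignores Allow/Disallow precedence and user-agent groups, and the function name is hypothetical.

```python
import re


def robots_pattern_matches(pattern: str, path: str) -> bool:
    """Check whether a robots.txt path pattern matches a URL path plus query string."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal chunk, then join chunks with ".*" for the * wildcard.
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    if anchored:
        regex += "$"
    # Rules match from the start of the path, so re.match is the right anchor.
    return re.match(regex, path) is not None
```

Under these rules `/*?page=15` matches both `/category?page=15` and `/category?page=150`, whereas a bracketed pattern such as `/*?page=1[5-9]` matches neither, because the brackets are treated as literal characters.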

XML sitemap pagination strategy controls which pagination pages receive sitemap promotion versus discovery only through crawling. Best practices include including only page 1 of each pagination sequence in sitemaps, excluding all pages 2+ from sitemap submission letting search engines discover them through rel="next" tags and internal links, using separate sitemap files for different content types enabling independent pagination strategies, and updating sitemaps promptly when content changes affect pagination depth ensuring sitemaps accurately represent current site structure. Sitemap inclusion signals importance to search engines making selective inclusion of page 1 only appropriate for directing search engines toward primary entry points whilst avoiding suggesting that page 15 of categories deserves equivalent crawling priority to page 1.
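Filtering a URL list down to first pages before writing the sitemap is straightforward to automate. A minimal sketch using Python's standard library, assuming parameter-based pagination with a `page` parameter; the function names are hypothetical and the example URLs are placeholders.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse, parse_qs

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def is_first_page(url: str, param: str = "page") -> bool:
    """True when the URL has no pagination parameter or explicitly requests page 1."""
    values = parse_qs(urlparse(url).query).get(param)
    return values is None or values[0] == "1"


def build_sitemap(urls: list[str]) -> str:
    """Serialise a sitemap containing only the first page of each sequence."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        if is_first_page(url):
            entry = ET.SubElement(urlset, "url")
            ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")
```

Pages 2+ are simply left out; they remain discoverable through internal links, so omission from the sitemap does not block them.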

Parameter handling in Search Console previously provided Google-specific instructions about pagination parameter treatment, but Google retired the URL Parameters tool in 2022 and now decides how to crawl parameterised URLs automatically. The settings described in older guides (identifying pagination parameters like page, p, or offset, declaring that they narrow content to a subset of a larger set, and choosing "Let Googlebot decide") are therefore no longer available. The same context now has to come from on-site signals: consistent internal links that always use one parameter name and order, canonical tags, robots meta directives, and robots.txt rules for parameter combinations that should not be crawled.

Pagination User Experience and Mobile Considerations

Pagination serves user experience needs requiring balance between SEO optimisation and usability particularly for mobile-first indexing where mobile pagination implementation determines Google's understanding of paginated content.

Mobile pagination experience requires specific optimisation because Google primarily uses mobile page versions for indexing and ranking. Touch-friendly navigation includes adequately sized previous/next buttons and page number links meeting 48x48 pixel minimum tap target sizes preventing accidental clicks. Responsive pagination ensures page number links reformat appropriately for narrow mobile viewports without creating horizontal scrolling. Performance optimisation prioritises fast loading for mobile pagination pages because mobile networks provide slower connectivity than desktop WiFi connections. Visual clarity uses clear pagination controls that mobile users can understand quickly without confusion about navigation options. Accessibility compliance includes proper ARIA labels, keyboard navigation support, and screen reader compatibility ensuring pagination functions for users with disabilities accessing through mobile assistive technologies.

Infinite scroll versus pagination presents strategic choice with SEO implications favouring traditional pagination despite infinite scroll's user experience appeal. Infinite scroll advantages include seamless browsing without pagination clicks, mobile-friendly continuous scrolling matching native app experiences, and reduced navigation friction eliminating multi-click page number selection. Infinite scroll SEO disadvantages include content lacking distinct URLs preventing deep linking and sharing, difficulty implementing proper canonical signals without clear page boundaries, challenges for search engine content discovery when JavaScript rendering controls content loading, and inability for users to bookmark specific positions in content sequences. Hybrid implementations combining infinite scroll with pagination URLs provide compromise enabling user experience benefits whilst maintaining SEO-friendly URL structures, pagination fallbacks for users with JavaScript disabled ensuring accessibility, and clear content boundaries enabling proper canonical and rel tag implementation.

Load-more button implementations offer alternative to infinite scroll with slightly different user experience and SEO characteristics. Load-more requires user interaction triggering content loading providing clearer user control compared to automatic infinite scroll. Implementation should generate distinct URLs for each load-more action enabling bookmarking and sharing, include proper canonical tags on dynamically loaded content, ensure search engine accessibility to content regardless of JavaScript availability through server-side rendering or progressive enhancement, and provide clear indication of total content volume and current position preventing user uncertainty about remaining content. Australian e-commerce businesses should evaluate whether load-more or traditional pagination better serves their specific user demographics and technical capabilities.

Items-per-page optimisation balances user experience against SEO considerations affecting pagination depth and crawl efficiency. Higher items-per-page counts reduce total pagination pages minimising crawl budget impact whilst potentially overwhelming users with excessive choice. Lower counts create more pagination pages potentially improving engagement through manageable page lengths whilst increasing crawl budget consumption. User testing determines optimal counts for specific audiences and content types rather than assuming generic best practices apply universally. Dynamic adjustment allows users selecting preferred items-per-page satisfying different browsing preferences whilst complicating SEO through parameter variations requiring careful canonical and crawl budget management. Australian businesses should implement user research understanding whether customers prefer few high-density pages versus many focused pages rather than making arbitrary items-per-page decisions based solely on technical considerations.

Pagination accessibility ensures all users can navigate paginated sequences regardless of abilities or assistive technologies employed. Keyboard navigation enables previous/next and page number access without mouse dependency, which is critical for users with motor impairments. Screen reader compatibility includes proper heading structure, ARIA labels describing pagination controls, and meaningful link text beyond a generic "next" or "previous" lacking context. Skip links allow bypassing repetitive pagination navigation, reducing interaction burden for assistive technology users. Focus management ensures keyboard focus moves appropriately when pagination triggers page changes. Visual clarity uses adequate colour contrast, clear visual indicators for current page position, and understandable pagination controls. In Australia, the Disability Discrimination Act 1992 applies to websites, and the Web Content Accessibility Guidelines (WCAG) are the standard generally used to assess compliance, making accessibility a legal consideration as well as a user experience one.

Monitoring and Optimising Pagination Performance

Ongoing pagination monitoring ensures implementations continue functioning correctly as websites evolve and identifies optimisation opportunities improving pagination SEO effectiveness.

Google Search Console pagination monitoring tracks indexing status and crawling patterns for paginated content. Page indexing reports (formerly Coverage) show how many pagination URLs are indexed, excluded, or encountering errors. URL inspection confirms specific pagination pages are indexed correctly with proper canonical recognition. Performance reports reveal whether paginated pages generate impressions and clicks or whether only page 1 receives search traffic. Crawl stats give an overall picture of Googlebot activity, which helps estimate how much crawling goes to pagination versus other content types. Enhancement reports for structured data and other features confirm pagination implementation doesn't interfere with rich result eligibility. Australian businesses should review Search Console pagination data monthly, identifying emerging problems before they significantly impact organic visibility.

Server log analysis provides detailed crawling behaviour insights that Search Console supplements but doesn't fully reveal. Log analysis identifies actual search engine crawler requests targeting pagination, quantifies crawl budget percentage consumed by pagination sequences, reveals whether search engines crawl deep pagination beyond intended limits suggesting inadequate crawl budget protection, exposes temporal crawling patterns showing when pagination crawling occurs, and compares crawl distribution across different pagination sequences identifying problematic categories or content types receiving disproportionate crawling attention. Regular log analysis monthly or quarterly depending on site size enables data-driven pagination optimisation decisions based on actual search engine behaviour rather than assumptions about crawling patterns.
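The crawl budget percentage described above can be estimated with a short script. This is a minimal sketch under several assumptions: logs are in the common "combined" format, pagination uses a `page` parameter, and crawler identification relies on the user-agent string alone (which can be spoofed, so production analysis would typically verify crawler IP addresses as well). The function name is hypothetical.

```python
import re
from collections import Counter
from typing import Iterable

# Request line, status, size, referrer, user agent (combined log format assumed).
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
PAGINATED = re.compile(r"[?&]page=(\d+)")


def pagination_crawl_share(lines: Iterable[str], bot_token: str = "Googlebot") -> float:
    """Fraction of a crawler's requests that hit pagination pages 2 and deeper."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or bot_token not in match.group("agent"):
            continue  # skip unparseable lines and other user agents
        page = PAGINATED.search(match.group("path"))
        is_deep = page is not None and int(page.group(1)) > 1
        counts["paginated" if is_deep else "other"] += 1
    total = counts["paginated"] + counts["other"]
    return counts["paginated"] / total if total else 0.0
```

Running this per category path, rather than site-wide, is one way to spot the sequences that attract disproportionate crawling.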

Indexed page audits compare intended versus actual indexing status for paginated content, checking that search engines respect canonical directives and crawl budget controls. Audits include exporting indexed page lists from Search Console (site: searches return only a rough sample and should not be treated as complete), categorising URLs by pagination depth and content type, identifying pagination indexed beyond intended thresholds (which indicates noindex or robots.txt failures), discovering unexpected pagination parameter variations that suggest new technical problems, and tracking indexing changes over time to see whether optimisation efforts have reduced problematic indexing. Australian businesses should conduct comprehensive pagination indexing audits quarterly rather than assuming an initial implementation remains effective indefinitely.
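
The categorisation step lends itself to a small script. The following sketch assumes the indexed URLs have already been exported to a list, that pagination uses a `page` query parameter, and that page 10 is the intended indexing limit; all three are placeholders to adjust for a real site.

```python
from urllib.parse import parse_qs, urlsplit


def audit_indexed_urls(indexed_urls, max_indexed_page=10, param="page"):
    """Group indexed URLs by pagination status (hypothetical report keys)."""
    report = {
        "non_paginated": [],
        "within_limit": [],
        "beyond_limit": [],      # indexed despite exceeding the intended depth
        "unexpected_value": [],  # e.g. ?page=0, ?page=abc, or a repeated parameter
    }
    for url in indexed_urls:
        values = parse_qs(urlsplit(url).query, keep_blank_values=True).get(param)
        if values is None:
            report["non_paginated"].append(url)
        elif len(values) != 1 or not values[0].isdecimal() or int(values[0]) < 1:
            report["unexpected_value"].append(url)
        elif int(values[0]) > max_indexed_page:
            report["beyond_limit"].append(url)
        else:
            report["within_limit"].append(url)
    return report
```

Anything in `beyond_limit` points to a noindex or blocking rule that is not being respected, and anything in `unexpected_value` points to parameter variations worth investigating.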

Ranking distribution analysis reveals whether pagination fragments rankings across multiple competing pages or consolidates them on preferred URLs. Analysis includes identifying keywords where multiple pagination URLs rank simultaneously, checking whether page 1 ranks higher than pages 2+ as expected, measuring click distribution across pagination URLs to see whether traffic concentrates appropriately, comparing current ranking patterns against historical data to gauge optimisation impact, and benchmarking against competitors to see whether their pagination strategies achieve better ranking consolidation. Australian e-commerce businesses should pay particular attention to category page rankings because pagination problems most commonly affect category pages with product pagination sequences.
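
The first step, finding keywords where several pages of the same sequence rank at once, might look like the sketch below. It assumes performance data has been exported as (query, URL, average position) rows and that pagination uses a `page` parameter; the function names are illustrative only.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def sequence_key(url, param="page"):
    """Strip the pagination parameter so every page in a sequence shares one key."""
    parts = urlsplit(url)
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k != param
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(query), ""))


def competing_pagination(rows, param="page"):
    """Return {(query, sequence): [(position, url), ...]} where 2+ pages rank."""
    grouped = defaultdict(list)
    for query, url, position in rows:
        grouped[(query, sequence_key(url, param))].append((position, url))
    return {
        key: sorted(entries)  # best-ranking URL first
        for key, entries in grouped.items()
        if len({url for _, url in entries}) > 1
    }
```

Each entry in the result lists the competing URLs for one keyword, ordered by position, which makes it easy to see whether page 1 actually leads its own sequence.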

Core Web Vitals impact assessment measures whether pagination implementation affects page experience signals that Google uses as ranking factors. Assessment includes measuring Largest Contentful Paint for pagination pages comparing against site-wide LCP performance, evaluating Cumulative Layout Shift specifically for pagination controls that load or shift during page rendering, testing Interaction to Next Paint for pagination button clicks and page number selection, comparing mobile versus desktop pagination performance because mobile-first indexing prioritises mobile experience, and implementing Real User Monitoring tracking actual user experience with pagination rather than only synthetic lab testing. Pagination implementations affecting Core Web Vitals negatively require optimisation prioritising performance improvements over feature complexity.

Pagination conversion impact analysis connects pagination user experience to business outcomes so that optimisation doesn't sacrifice revenue for technical correctness. Analysis includes measuring conversion rates by pagination depth to see whether users reaching page 5+ convert differently from those remaining on page 1, calculating bounce rates for paginated pages to identify user experience problems causing abandonment, tracking engagement metrics such as time on site and pages per session for pagination journeys, A/B testing alternative pagination implementations to measure business impact rather than only technical metrics, and surveying users about their pagination experience to supplement quantitative analytics with qualitative feedback. Australian e-commerce businesses should resist pagination changes that measurably harm conversion rates, even if they are technically optimal for SEO, because revenue ultimately validates marketing strategies.

Frequently Asked Questions

Should Australian e-commerce businesses implement rel="next" and rel="prev" tags after Google deprecated them in 2019?

Yes, implementing rel="next" and rel="prev" tags remains worthwhile despite Google's 2019 announcement, although the benefits are modest. Google stated that it no longer uses the tags as an indexing signal, so they should not be relied on to influence how Google groups paginated pages. Bing has said it treats the tags as hints for page discovery and site structure, which benefits the non-Google search visibility that represents approximately 5-10% of Australian search traffic. Implementation cost is minimal, requiring only basic HTML link elements in the page head. Future-proofing covers the possibility that the tags regain official status. Documentation value helps SEO auditors and developers understand pagination structure by examining the tags. Some practitioners report indexing behaviour consistent with Google still reading the tags, but this evidence is anecdotal. Australian businesses should treat the tags as a low-cost defensive optimisation with potential upside and no meaningful downside, not as a substitute for crawlable pagination links and correct canonical tags.
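
For reference, the link elements themselves are simple to generate. This sketch assumes page 1 lives at the bare category URL and later pages use a `page` query parameter; the helper name `pagination_link_tags` is made up for the example.

```python
from html import escape


def pagination_link_tags(base_url, current, total, param="page"):
    """Return the rel="prev"/rel="next" <link> elements for one paginated page."""

    def url_for(page):
        # Assumption: page 1 is served at the bare URL, not at ?page=1.
        return base_url if page == 1 else f"{base_url}?{param}={page}"

    tags = []
    if current > 1:  # the first page has no predecessor
        href = escape(url_for(current - 1), quote=True)
        tags.append(f'<link rel="prev" href="{href}">')
    if current < total:  # the last page has no successor
        href = escape(url_for(current + 1), quote=True)
        tags.append(f'<link rel="next" href="{href}">')
    return tags
```

The first page emits only rel="next" and the last page only rel="prev", which is the pattern auditors typically look for when checking that a sequence has clean endpoints.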

What canonical tag strategy should Australian e-commerce category pages with product pagination implement?

Self-referencing canonical tags, which treat each paginated page as unique content, are the best strategy for most Australian e-commerce category pagination. Page 1's canonical points to itself, page 2's canonical points to itself, and every subsequent page follows the same pattern. This approach allows paginated pages containing different products to rank independently for specific product-related queries, keeps all content accessible for indexing rather than hiding paginated products, avoids forcing all traffic to page 1 when a user searching for a product that only appears on page 5 would be better served by landing there directly, and maintains crawl efficiency when combined with noindex on extremely deep pagination. Avoid consolidating all pagination to page 1 through canonical tags, because this hides products on pages 2+ from indexing and can prevent their discovery through organic search. Reserve view-all consolidation for categories with limited product counts where single-page rendering remains performant, typically under 200-300 products. Australian e-commerce businesses with thousands of products per category should implement self-referencing canonicals, which allow appropriate indexing depth without forcing unrealistic single-page consolidation.
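
A self-referencing canonical is only useful if every variant of a page's URL resolves to the same canonical value. The sketch below builds that value under two assumptions that will not hold for every site: that `/category` and `/category?page=1` are the same page, and that all query parameters other than `page` (tracking tags, for instance) can be dropped from the canonical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def self_canonical(url, param="page"):
    """Return the canonical URL for a paginated page (illustrative helper)."""
    parts = urlsplit(url)
    page = None
    for key, value in parse_qsl(parts.query):
        if key == param and value.isdecimal():
            page = int(value)
    # Assumption: page 1 canonicalises to the bare URL so that /category and
    # /category?page=1 do not become two competing versions of the same page.
    query = urlencode({param: page}) if page and page > 1 else ""
    # Assumption: other query parameters do not change the listing and are dropped.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))
```

If sorting or filtering parameters do produce meaningfully different listings, they would need their own handling rather than being stripped as they are here.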

How can Australian websites implement pagination whilst supporting infinite scroll for mobile user experience?

Hybrid implementations that combine traditional pagination URLs with an infinite scroll interface balance SEO requirements against mobile user experience preferences. Technical implementation includes generating a distinct URL for each pagination segment, such as /category?page=2, so that every segment is bookmarkable and indexable; implementing infinite scroll in JavaScript that loads content as users scroll and updates the browser URL through the History API; using server-side rendering or progressive enhancement so content is accessible without JavaScript execution; maintaining proper canonical, rel="next", and rel="prev" tags on each pagination URL; and providing fallback pagination links for users with JavaScript disabled or when infinite scroll fails. This approach delivers the seamless mobile scrolling that users expect whilst keeping the distinct URLs for indexing, sharing, and bookmarking that pure infinite scroll lacks. Australian e-commerce businesses can use analytics to compare engagement with pagination links against scroll behaviour, then tune the balance based on actual interaction patterns rather than assumptions about preferred experiences.

What maximum pagination depth should Australian websites implement before using noindex or robots.txt blocking?

Optimal maximum pagination depth varies by content type, but general guidelines suggest applying noindex from around page 10-15 for most Australian e-commerce categories. Content value diminishes substantially beyond the initial pages because users rarely navigate deep into pagination sequences, making pages 20+ low-value from both user and search engine perspectives. Assess crawl budget efficiency through log analysis to see whether search engine crawling extends beyond reasonable depths and consumes resources better allocated to product pages. Evaluate inventory distribution to determine whether deep pagination contains products that warrant indexing or only long-tail, low-demand items that do not. Prefer noindex to robots.txt blocking for deep pagination: noindex still allows crawling and link discovery whilst preventing indexing, whereas robots.txt prevents crawling altogether, which can hide the products that deep pagination contains and also stops crawlers from seeing any noindex tag on the blocked page. Use robots.txt only for extreme depths like page 50+ where crawling serves no purpose and wastes substantial crawl budget. Australian businesses should analyse their own pagination usage patterns in analytics before setting maximum depths, because appropriate thresholds depend on category size, product distribution, and user navigation behaviour that generic recommendations cannot accommodate.
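
The tiered approach described above can be expressed as a simple policy function. The thresholds of 15 and 50 below are taken from the rough guidance in this answer and are defaults to override, not recommendations for any particular site; the `Directive` names are invented for the sketch.

```python
from enum import Enum


class Directive(Enum):
    INDEX = "index, follow"          # normal indexable pagination
    NOINDEX = "noindex, follow"      # crawlable for link discovery, kept out of the index
    BLOCK = "disallow in robots.txt"  # not crawled at all


def directive_for_page(page, noindex_from=15, block_from=50):
    """Pick one directive per page; the tiers are mutually exclusive."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    if page >= block_from:
        # A blocked page is never fetched, so a noindex tag on it would go unseen.
        return Directive.BLOCK
    if page >= noindex_from:
        return Directive.NOINDEX
    return Directive.INDEX
```

Keeping the thresholds as parameters makes it straightforward to set different depths per category once analytics and log data show where useful content actually ends.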

How should Australian news publishers handle pagination for article archives and date-based content?

News publishers should implement self-referencing canonical tags on archive pagination treating each archive page as unique time-period content worthy of independent indexing combined with strategic noindex on extremely old archives providing minimal current value. Archive page 1 showing most recent articles deserves prominent indexing and regular recrawling because current news content provides high value. Archive pages 2-5 containing recent but not current articles warrant indexing because they provide useful historical context and might contain articles relevant to ongoing news cycles. Deep archive pagination beyond page 10-15 or content older than 6-12 months depending on news cycle should receive noindex preventing crawl budget waste on historical content rarely accessed or updated. Implement date-based URLs like /news/2024/02/ providing clear temporal organisation that self-referencing canonicals treat as distinct content periods. Consider implementing archive pruning or consolidation for extremely old content combining historical articles into topic-based resources rather than maintaining extensive date-based pagination that crawling resources cannot efficiently support. Australian news publishers should particularly evaluate mobile pagination experience because majority of news consumption occurs on mobile devices requiring pagination optimised for mobile-first indexing and mobile user interaction patterns.

What should Australian businesses do if paginated pages compete against each other in search results?

Systematic investigation and technical correction resolve pagination competition in search results through several complementary steps. First, analyse exact ranking patterns: which paginated pages compete for which keywords, whether competition involves adjacent pages like 1 and 2 or distant pages like 1 and 15, and whether the competition is a recent development or a long-standing problem. Second, review canonical implementation: confirm that self-referencing canonicals exist without accidental consolidation or chains, that canonical targets are correct without typos or resolution errors, and that Search Console shows Google respecting the declared canonicals rather than selecting different pages. Third, implement or verify rel="next" and rel="prev" tags to establish clear pagination relationships that help search engines understand content structure despite tag deprecation. Fourth, consider noindex on deep pagination if competition involves distant pages, such as page 1 competing with page 20, which suggests excessive pagination indexing. Fifth, evaluate whether consolidation to page 1 or a view-all alternative better serves business needs if the competition reflects fragmentation rather than appropriate distribution. Sixth, strengthen page 1 through internal linking, content optimisation, and technical improvements so that it is clearly the preferred result. Australian businesses should resist immediately consolidating all pagination to page 1, because competition might reflect appropriate indexing in which different pages rank for distinct keyword variations rather than problematic fragmentation.
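
The canonical review in the second step can be partly automated. The sketch below inspects already-fetched HTML for one page and classifies its canonical tag; fetching the page, following redirects, and comparing against the canonical Google selected in Search Console are left out. The class and function names are illustrative, and the comparison is a strict string match, so trailing slashes or protocol differences will register as mismatches.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def check_canonical(page_url, html):
    """Classify the canonical tag state for one page (hypothetical status strings)."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing"
    if len(set(finder.canonicals)) > 1:
        return "conflicting"  # multiple different canonicals on one page
    if finder.canonicals[0] == page_url:
        return "self-referencing"
    return "points elsewhere"
```

Running this across a paginated sequence quickly surfaces pages whose canonical was accidentally consolidated to page 1 or omitted by a template change.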

How frequently should Australian websites audit pagination SEO implementation and monitoring?

Monthly pagination monitoring through Google Search Console combined with quarterly comprehensive pagination audits provides an appropriate cadence for most Australian businesses. Monthly monitoring includes reviewing indexed page counts to spot unexpected increases in pagination URLs that indicate new indexing problems, checking crawl stats for changes in how search engines crawl pagination, analysing performance reports to confirm pagination pages generate appropriate impressions and clicks, and monitoring coverage reports for new pagination errors or warnings requiring investigation. Quarterly comprehensive audits include complete indexed URL exports categorised by pagination depth and content type, server log analysis quantifying actual crawler behaviour towards pagination, ranking distribution analysis measuring whether pagination competes inappropriately in search results, canonical implementation validation confirming tag presence and accuracy, and competitive benchmarking of pagination strategy. Increase monitoring frequency when implementing pagination changes, after major site updates affecting pagination templates, during significant traffic changes that might indicate pagination problems, and when Search Console shows increasing pagination errors or warnings. Australian e-commerce businesses with frequently changing inventory or regularly launched categories should specifically monitor new pagination sequences rather than assuming existing pagination templates extend correctly to new content sections.

Strategic Pagination Balances Usability and SEO

Pagination SEO strategy resolves a fundamental tension: users need content presented in manageable sequences, while search engines need consolidated signals and protected crawl budget. Proper technical signals and deliberate implementation decisions allow a single pagination implementation to serve both audiences.

The frameworks outlined in this guide give Australian businesses a foundation for pagination that serves user navigation whilst optimising search engine crawling and indexing efficiency: rel="next" and rel="prev" implementation despite deprecation, a deliberate choice between self-referencing and consolidating canonical tags, crawl budget protection through pagination limits and noindex, and comprehensive monitoring to ensure ongoing effectiveness.

Australian businesses working with Maven Marketing Co. benefit from professional pagination audits identifying current implementation problems and optimisation opportunities, strategic guidance determining optimal pagination approaches for specific content types and business contexts, technical implementation ensuring proper tag usage and crawl budget protection, and ongoing monitoring maintaining pagination effectiveness as websites evolve and search engine algorithms update.

Ready to optimise your pagination strategy so that search engines index paginated content efficiently whilst users keep an excellent experience? Maven Marketing Co. provides comprehensive technical SEO services including pagination audits, implementation recommendations, crawl budget optimisation, and ongoing monitoring, so your paginated content achieves strong search visibility without wasting crawling resources or creating the duplicate content problems that improper pagination produces.