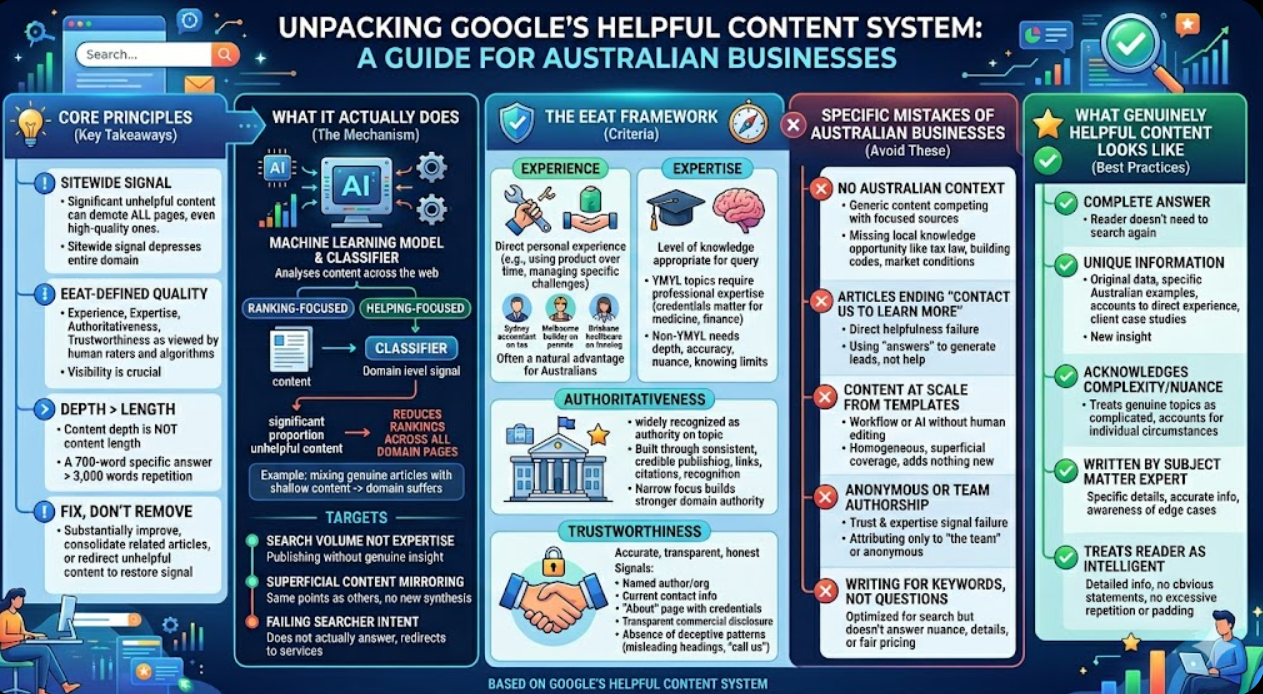

Key Takeaways

- Google's Helpful Content system applies a sitewide quality signal rather than a page-level one. A site with a significant proportion of unhelpful content may see all of its pages demoted, including pages that would be judged high quality on their own, because the signal depresses rankings across the entire domain.

- "Helpful" in Google's assessment means content that satisfies the searcher's actual need without requiring them to visit additional sources to complete their understanding. Content that partially answers a question and then invites the reader to contact the business for the rest of the answer is not helpful in this sense.

- Google's quality rater guidelines define helpfulness through the EEAT framework: Experience, Expertise, Authoritativeness, and Trustworthiness. Content that does not demonstrate at least some of these qualities in ways that are visible to both human raters and algorithmic signals is assessed as low quality regardless of its length or topic coverage.

- The strongest predictor of content being assessed as unhelpful is that it was produced primarily to capture a keyword rather than to genuinely serve a person with a specific need. Google's signals for this include thin coverage of the topic, absence of original perspective or specific detail, and content that closely mirrors what is already available from multiple other sources.

- Australian businesses can improve their helpfulness signals by demonstrating direct, personal experience with the topics they write about, including specific Australian context that generic content from overseas markets does not have, and by providing answers that are complete enough that the reader does not need to search again.

- Content depth is not the same as content length. A 3,000-word article that repeats the same general points with slight variation is not more helpful than a 700-word article that directly and completely answers the specific question the searcher was asking.

- Unhelpful content does not always need to be deleted to restore the helpfulness signal. It can be substantially improved, consolidated with related articles so that together they cover the topic properly, or redirected to a more comprehensive piece. The goal is to reduce the proportion of content on the domain that Google assesses as unhelpful.

What Google's Helpful Content System Actually Does

Google's Helpful Content system uses a machine-learning classifier to analyse content and distinguish content written primarily to rank in search engines from content written primarily to help a person. The classifier produces a signal that applies sitewide rather than page by page: if a significant proportion of a domain's content is assessed as unhelpful, the signal reduces rankings across all pages on that domain.

This is why some Australian businesses that publish a mix of genuinely useful articles and large volumes of shallow, keyword-driven content find that even their best articles underperform. The sitewide signal reflects the domain's overall content quality; it does not assess each article on its own merits.

Google's guidance on what the system is designed to catch is specific. The Helpful Content system targets:

Content that is produced primarily because a keyword has search volume, not because the publisher has genuine expertise or experience on the topic. A business that publishes articles on every keyword in their industry regardless of whether they have any particular insight or experience with the specific topic is producing the kind of content this system is designed to find.

Content that covers topics in the same superficial way they are covered on every other result for the same query. If an article's main points could have been extracted from reading the top three results for the same keyword and synthesising them without adding anything new, it is not the kind of content the system rewards.

Content that fails to satisfy the searcher's actual intent. An article that appears to address a specific question but does not actually answer it, instead providing general background and redirecting the reader to the business's services, is not satisfying the search intent that brought the reader to the page.

The EEAT Framework: What Google's Quality Raters Actually Look For

Google's quality rater guidelines, the manual that human reviewers use to assess page quality for Google's internal evaluation processes, describe quality through the EEAT framework: Experience, Expertise, Authoritativeness, and Trustworthiness. These four signals are assessed together, and the weight given to each varies by topic type.

Experience is the signal introduced most recently to the framework. It refers to whether the content demonstrates that the author has direct, personal experience with the topic they are writing about. A product review that describes the reviewer's actual use of the product over time demonstrates experience. A review that synthesises the specifications from the manufacturer's website and the features listed in other reviews does not. An article about managing a specific type of business challenge that contains specific observations from direct experience demonstrates experience. An article that restates general management principles without any evidence of having actually navigated the challenge does not.

For Australian businesses, demonstrating experience is often a natural advantage over generic overseas content providers: a Sydney accounting firm writing about Australian tax obligations, a Melbourne builder writing about Victorian building permit requirements, a Brisbane healthcare provider writing about Queensland health funding programmes. These businesses have direct experience with the specific Australian context that generic content producers do not, and making that experience visible in the content is one of the strongest helpfulness signals available.

Expertise refers to whether the content demonstrates knowledge of the topic at a level appropriate to the query. Some topics, particularly those in what Google's guidelines call "YMYL" categories (Your Money or Your Life — topics that affect health, financial decisions, safety, and wellbeing), require the content to demonstrate professional expertise rather than just general knowledge. A page about medication interactions needs to demonstrate medical expertise. A page about superannuation advice needs to demonstrate financial expertise. For these topics, the author's credentials and qualifications are part of the expertise signal.

For topics outside the YMYL category, demonstrated expertise is assessed through the depth and accuracy of the content: does it address the nuances of the topic that only someone with genuine knowledge would know? Does it provide specific, accurate information rather than generic statements? Does it acknowledge the limits of what can be confidently stated and the cases where individual circumstances vary?

Authoritativeness is assessed at both the page and domain level. A page from a domain that is widely recognised as an authority in a topic area starts with a stronger authoritativeness signal than the same content from an unknown domain. Authoritativeness is built through consistent, credible publishing in a specific topic area over time, through links and citations from other authoritative sources in the same area, and through recognition from the institutions and publications that the target audience trusts.

For Australian businesses, authoritativeness in a specific topic area often comes from narrowing the focus rather than broadening it. A business that consistently publishes specific, authoritative content on a narrow topic area builds stronger authority in that area than one that publishes broadly across many tangentially related topics.

Trustworthiness is the most foundational of the four signals. It refers to whether the content and the site that hosts it can be trusted to be accurate, transparent, and honest. Trustworthiness signals include: a clearly identified author or organisation, contact information that is current and functional, an About page that describes the organisation and its credentials, transparent disclosure of commercial interests where relevant, accurate and current information, and an absence of deceptive patterns (misleading headings, content that promises to answer a question but does not, hidden commercial intent).

The Specific Things Australian Businesses Get Wrong

With the framework established, the specific content decisions that most commonly undermine helpfulness scores for Australian businesses are:

Publishing on topics without specific Australian context. Generic articles about marketing, finance, or business management that could have been written by anyone in any country where English is the primary language are competing against content that has been produced with more focus, more specificity, and more genuine authority by the organisations that have made those topics their core publishing focus. Australian businesses have a natural advantage in topics that are specifically Australian: Australian tax law, Australian consumer law, Australian building codes, Australian healthcare, Australian market conditions. Failing to leverage this specific local knowledge is a missed opportunity for both helpfulness and differentiation.

Writing articles that end with "contact us to learn more." This pattern is so common in Australian business content that it barely registers as a problem, but it is a direct helpfulness failure. If the article's answer to the reader's question is "call us," the article is not actually answering the question. It is using the appearance of an answer to generate a lead. This is the kind of content the Helpful Content system is designed to identify, and pages that consistently withhold the answer in this way are likely to be assessed as unhelpful however well they are written.

Producing content at scale from templates. Template-based content production, whether through internal workflows or AI generation tools used without substantial human editing and supplementation, produces the homogeneous, superficial coverage pattern that the Helpful Content system targets. The content looks like it covers the topic, but it does not add anything to what is already available for the same query across multiple other sources.

Attributing content to "the team" or publishing without a named author. Authorship anonymity is a trust and expertise signal failure. Content attributed to "The Maven Marketing Team" or published without any author attribution is harder for Google's quality assessment to assign expertise and experience signals to. Named authors with visible credentials and a consistent publication history produce stronger EEAT signals than content attributed only to a team or published anonymously.

Writing for the keyword rather than the question. An article optimised around the keyword "commercial cleaning services Melbourne" that never actually answers why a business should choose commercial cleaning over general cleaning, what the frequency requirements are for different business types, what standards commercial cleaning should meet, and what a fair price looks like is not helpful to someone searching for commercial cleaning information. It is a page that exists to rank for a keyword.

What Genuinely Helpful Content Looks Like

Genuinely helpful content is identifiable by a specific set of characteristics that are assessable both by human quality raters and by Google's algorithmic signals:

It answers the actual question completely. A reader who has finished the article knows what they came to find out. They do not need to search again for a more complete answer or visit another source to fill in what the article left out.

It includes information that is not available in the results currently ranked above it for the same query: original data, specific Australian examples, accounts from direct experience, case studies from real clients (appropriately anonymised), or analysis that synthesises information in a way that produces a new insight rather than restating existing information.

It acknowledges complexity and nuance rather than oversimplifying. Topics that are genuinely complicated are treated as complicated rather than reduced to a simple list that misrepresents the reality. Where individual circumstances vary, the content says so rather than implying a single answer applies to everyone.

It is written by someone who clearly knows the topic. The specific details, the appropriate caveats, the awareness of edge cases, and the accuracy of the information are consistent with genuine expertise rather than research that only scratches the surface.

It treats the reader as an intelligent person capable of using detailed information. It does not pad the word count with obvious statements, excessive repetition, or paragraphs of hedging that add length without adding information.

FAQs

How can an Australian business audit its existing content library for helpfulness?

The most practical audit combines a quantitative and a qualitative review. The quantitative layer draws on three sources: Google Search Console performance data to find pages whose impressions and average positions have declined over the past 12 months; Google Analytics 4 data to find pages with very high bounce rates and very short session durations, which suggest the content is not serving visitors' needs; and a crawl tool such as Screaming Frog to find very thin pages (under 400 words, as a rough rule of thumb) that may not contain enough to address any topic meaningfully.

The qualitative layer takes the lowest-performing pages from the quantitative review and assesses each one against the self-assessment questions Google publishes in its Helpful Content guidance, such as: Does the content provide original information, reporting, research, or analysis? Does it provide a substantial, complete, or comprehensive description of the topic? Does it avoid simply recapping what others have said without adding value? Would a person find this useful? An honest audit against these criteria will identify the specific pages most likely to be dragging down the helpfulness signal across the site.
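The quantitative layer of this audit can be sketched in a few lines of Python. This is a minimal, hypothetical example: it assumes the Search Console, GA4 and crawl exports have already been joined into one row per URL, and the field names (`impressions_prev`, `bounce_rate` and so on) and the bounce and session thresholds are illustrative assumptions, not Google figures or any tool's actual export format. It only produces a shortlist; the qualitative review against Google's questions still has to be done by a person.

```python
from dataclasses import dataclass

# Hypothetical thresholds: illustrative defaults, not figures published by Google.
THIN_WORD_COUNT = 400        # the "under 400 words" rule of thumb
HIGH_BOUNCE_RATE = 0.80      # 80% or more of sessions bounce
SHORT_SESSION_SECONDS = 20   # average session shorter than 20 seconds


@dataclass
class PageMetrics:
    """One row per URL, assumed to be joined from Search Console, GA4 and crawl exports."""
    url: str
    impressions_prev: int      # impressions in the earlier 12-month window
    impressions_curr: int      # impressions in the latest 12-month window
    position_prev: float       # average position (lower is better)
    position_curr: float
    bounce_rate: float         # 0.0 to 1.0
    avg_session_seconds: float
    word_count: int


def audit_flags(page: PageMetrics) -> list[str]:
    """Return the quantitative warning signs that apply to a single page."""
    flags = []
    if page.impressions_curr < page.impressions_prev:
        flags.append("declining impressions")
    if page.position_curr > page.position_prev:
        flags.append("falling position")
    if page.bounce_rate >= HIGH_BOUNCE_RATE and page.avg_session_seconds < SHORT_SESSION_SECONDS:
        flags.append("high bounce, short sessions")
    if page.word_count < THIN_WORD_COUNT:
        flags.append("thin content")
    return flags


def shortlist_for_review(pages: list[PageMetrics]) -> list[tuple[str, list[str]]]:
    """Keep only flagged pages, most-flagged first, for the qualitative review."""
    flagged = [(p.url, audit_flags(p)) for p in pages]
    flagged = [(url, flags) for url, flags in flagged if flags]
    # sorted() is stable, so pages with the same number of flags keep their input order
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)


if __name__ == "__main__":
    # Made-up sample rows for illustration only.
    pages = [
        PageMetrics("/blog/commercial-cleaning-melbourne", 5200, 3100, 8.2, 14.6, 0.86, 12, 350),
        PageMetrics("/blog/victorian-building-permits", 2400, 2900, 6.1, 4.3, 0.41, 95, 1800),
    ]
    for url, flags in shortlist_for_review(pages):
        print(url, flags)
```

Ranking by the number of flags is one simple way to decide which pages reach the manual review first; a business could equally weight the signals differently, since none of these thresholds reproduces how Google's classifier actually scores a page.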

Does using AI to generate content automatically make it unhelpful in Google's assessment?

No. Google's guidance is explicit that using AI in content production is not, in itself, a quality signal: what matters is whether the content is helpful, regardless of how it was produced. AI-assisted content that has been substantially reviewed and edited, supplemented with specific expertise, original examples, and accurate Australian context, and that genuinely serves the reader's need is not penalised for being AI-assisted. Content that is published without meaningful human review, that reproduces the superficial coverage already available from multiple other sources, and that lacks any expertise or experience signal is unhelpful whether a human or an AI produced it. The practical implication for Australian businesses is that AI tools used as a starting point for content that people with genuine expertise then substantively edit and enrich are not a helpfulness risk. AI tools used to produce and publish content at scale without meaningful human review and enrichment are a significant one.

How long does it take for helpfulness improvements to produce measurable ranking recovery?

Google has indicated that improvements to content quality may take months to be reflected in rankings, because the system needs to see over time that the unhelpful content has not returned. Measurable recovery after a genuine content quality improvement programme typically takes three to six months, and some recoveries take longer depending on the scale of the original problem and the depth of the improvements. Businesses that make superficial changes, such as adding a few hundred words to thin articles without improving their actual quality or completeness, generally do not see meaningful recovery, because the changes are not enough to shift the system's assessment of those pages. Businesses that make substantive improvements (removing content that cannot be salvaged, significantly improving content that can be, and publishing content of genuine expert quality from then on) typically see the strongest and most durable recovery.

Helpful Is Not a Feeling. It Is a Measurable Standard.

Every business that publishes content believes it is being helpful. The important question is whether the specific signals that Google's Helpful Content system measures are present in the content: original perspective from genuine expertise and experience, complete answers that do not send the reader to other sources, Australian context that distinguishes the content from its generic equivalents, named authorship with visible credentials, and a content library that does not carry a significant proportion of shallow, keyword-driven pages that dilute the domain's overall helpfulness signal. Feeling that the content is helpful is not the standard. Meeting these signals is.

Maven Marketing Co develops and audits content strategies for Australian businesses against Google's Helpful Content criteria, identifying the specific gaps in the existing content library and building the editorial programme that produces a genuinely stronger helpfulness signal over time.
