Key Takeaways

- Manual page-by-page schema testing becomes impractical at enterprise scale, so large sites need automated validation tools that process thousands of URLs and identify errors across the complete website rather than a limited sample

- Template-level schema validation catches systematic errors affecting hundreds or thousands of pages at once, whilst individual page testing misses patterns tied to specific category types, product attributes, or content variations

- Google Search Console enhancement reports provide field data showing which structured data actually generates rich snippets in search results, whereas validation tools show only technical correctness without confirming that rich results actually appear

- Schema markup errors often emerge after implementation through CMS updates, template modifications, dynamic content changes, and third-party integration updates, so ongoing monitoring is required rather than one-time validation

- Different schema types require different validation priorities: Product schema demands price and availability accuracy, LocalBusiness requires complete NAP (name, address, phone) consistency, and Article schema needs proper date formats that affect news eligibility

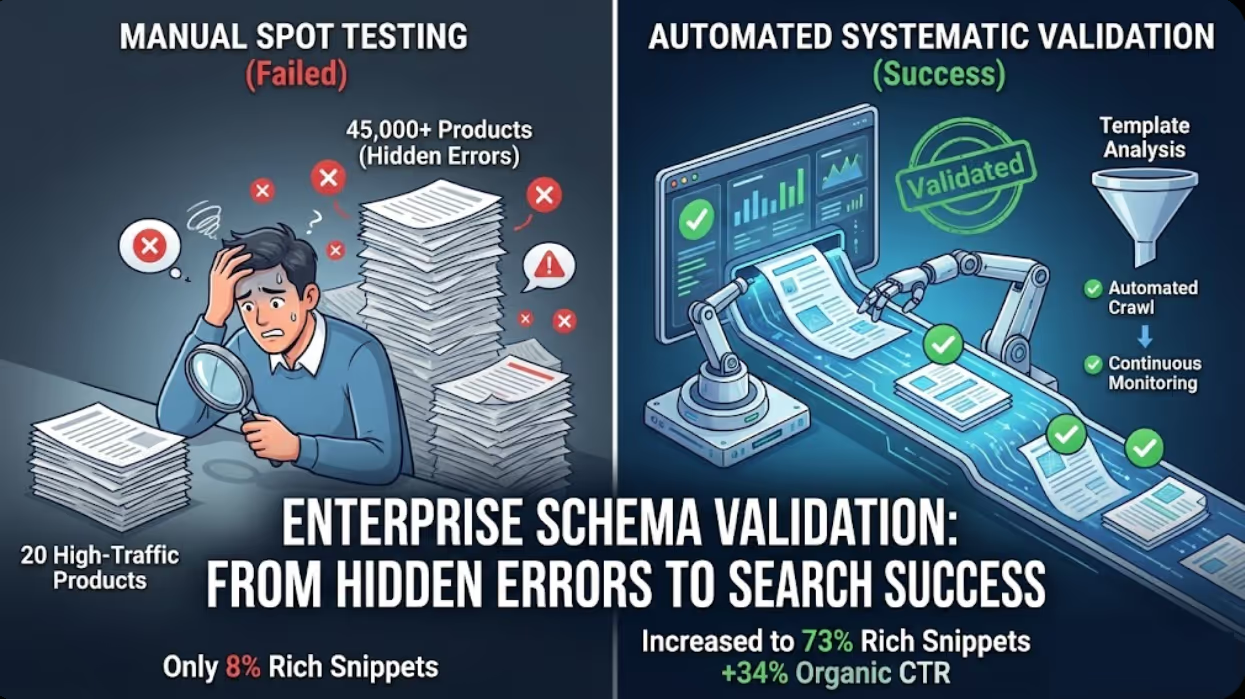

An Australian e-commerce retailer with 45,000 product pages implemented Product schema markup across their catalog, expecting to generate rich product snippets showing prices, availability, and ratings in search results. Initial testing through Google's Rich Results Test validated the markup on their 20 highest-traffic products, which appeared syntactically correct and eligible for rich results. The development team declared the implementation successful and moved to other priorities.

Three months later, organic traffic analysis revealed that only 8% of product pages were actually generating rich snippets in search results, despite schema being present on all pages. A comprehensive structured data audit uncovered multiple problems that spot testing had completely missed. Template variations for products with multiple size options contained schema errors where availability status defaulted to incorrect values. Product categories using specific attribute combinations triggered JavaScript errors that prevented schema from rendering. Discontinued products retained "InStock" availability declarations whilst displaying "Out of Stock" to users, creating content-markup mismatches that Google penalized. Price formatting was inconsistent across product categories, with some using AUD currency codes and others omitting currency entirely, which invalidated schema on thousands of pages.

According to research from Screaming Frog, the average e-commerce website has schema markup errors affecting 23% of pages with markup. Implementation alone does not guarantee validity; systematic testing is needed to reveal the problems that surface-level checks miss.

Maven Marketing Co. implemented comprehensive structured data testing with four components: automated crawling of the complete product catalog to validate schema on every page, template-specific validation to identify systematic errors affecting product variations, field data analysis through Search Console to compare markup presence against actual rich snippet appearances, and continuous monitoring to detect new errors emerging from catalog updates. Testing identified 47 distinct schema error patterns affecting different product categories and attributes that spot testing would never have discovered.

Systematic error correction across all 47 patterns increased rich snippet generation from 8% to 73% of product pages within eight weeks. Organic click-through rates for products with restored rich snippets increased 34% compared to products without schema, whilst conversion rates improved 12% as accurate pricing and availability information in search results qualified traffic more effectively. The comprehensive testing investment of approximately 40 hours identified and resolved problems that thousands of hours of manual page-by-page validation could never have efficiently addressed.

Understanding Structured Data Validation Requirements

Effective schema testing requires understanding what validation actually confirms, what problems different validation approaches detect, and what issues remain invisible to common testing methodologies.

Syntax validation versus semantic correctness represents the fundamental distinction between technical markup validity and meaningful content accuracy. Syntax validation confirms that JSON-LD, Microdata, or RDFa markup follows proper formatting rules, uses correct property names from schema.org vocabulary, maintains proper nesting structures, and includes required properties that schema specifications mandate. Tools including Google's Rich Results Test, Schema Markup Validator, and various third-party validators perform syntax checking identifying formatting errors, invalid property names, and missing required fields. However, syntax validation cannot confirm semantic correctness including whether product prices actually match displayed prices, whether availability status reflects actual inventory, whether business hours in LocalBusiness schema match what customers encounter visiting the location, or whether author names in Article schema represent real people rather than placeholder text. Australian businesses achieving perfect syntax validation scores whilst providing inaccurate semantic data create markup that technically validates but provides search engines misleading information potentially resulting in rich snippet removal despite technical correctness.

Rich snippet eligibility versus actual appearance distinguishes between schema markup qualifying for rich results based on Google's guidelines and rich snippets actually appearing in search results based on Google's dynamic decision-making. Rich Results Test confirming eligibility means markup meets technical requirements and could generate rich snippets if Google chooses to display them. However, Google doesn't guarantee rich snippet display even for eligible markup instead using various quality signals, competitive factors, and query context determining whether rich results appear for specific searches. Australian businesses should validate both technical eligibility through validation tools and actual rich snippet generation through Search Console enhancement reports and manual SERP monitoring because substantial gaps between eligibility and appearance suggest quality issues, competitive disadvantages, or implementation problems that eligibility testing alone doesn't reveal.

Server-side versus client-side rendering validation affects whether validation tools accurately assess markup that JavaScript generates dynamically. Server-side rendered schema markup appears in initial HTML source enabling simple validation tools to detect it reliably. Client-side rendered markup injected by JavaScript after page load requires validation tools supporting JavaScript rendering to accurately assess. Many automated validation tools process only initial HTML missing JavaScript-generated schema entirely or evaluating incorrect versions before client-side modifications complete. Australian businesses using JavaScript frameworks including React, Vue, or Angular for dynamic schema generation should verify that validation tools actually render JavaScript rather than assuming tools detect all markup present on fully loaded pages that users and search engines encounter.
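One quick way to tell the two cases apart is to look for JSON-LD in the raw server response before any JavaScript runs. The following is a minimal sketch using only Python's standard library; the function name `extract_jsonld` is hypothetical, and it assumes the raw HTML has already been fetched by whatever HTTP client you use. If it finds no blocks in the raw HTML but a rendering validator does find markup, the schema is being injected client-side.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []        # payloads that parsed as JSON
        self.parse_errors = []  # raw text of blocks that failed to parse

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            raw = "".join(self._buffer).strip()
            try:
                self.blocks.append(json.loads(raw))
            except json.JSONDecodeError:
                self.parse_errors.append(raw)


def extract_jsonld(html):
    """Return (parsed_blocks, unparseable_blocks) found in un-rendered HTML."""
    parser = JsonLdExtractor()
    parser.feed(html)
    return parser.blocks, parser.parse_errors
```

This only covers JSON-LD; Microdata and RDFa would need separate handling, and it says nothing about what a JavaScript-rendering crawler would see.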

Template-level versus page-level validation priorities distinguish between testing individual pages and testing template patterns affecting multiple pages. Page-level validation examines specific URLs confirming correct implementation for particular products, articles, or locations tested. Template-level validation analyses patterns across page types identifying systematic errors affecting product categories, article formats, or location variations sharing common templates. A product schema error in a template file might affect 15,000 products whilst testing only high-traffic products misses the pattern entirely if those specific products happen to work correctly. Australian businesses should prioritise template-level validation identifying patterns affecting multiple pages over exclusively testing individual high-value pages that might not represent complete implementation quality.

Required versus recommended properties in schema specifications affect validation strictness and rich snippet eligibility. Required properties must be present for markup to validate at all with their absence causing validation failures. Recommended properties enhance markup without being mandatory for basic validation whilst influencing rich snippet likelihood and search feature eligibility. Google often requires properties beyond schema.org's minimum specifications for rich result eligibility creating gaps between passing generic schema validation and qualifying for Google's specific search features. Australian businesses should validate against Google's specific requirements documented in their structured data guidelines rather than only generic schema.org specifications because Google's requirements frequently exceed baseline schema specifications determining actual search visibility impact.

Validation Tools and Methodologies

Different validation approaches serve complementary purposes and should be combined for comprehensive structured data quality assurance rather than relying on a single tool or methodology.

Google Rich Results Test provides authoritative validation for rich snippet eligibility because Google determines search result display, making their tool the definitive source for whether markup qualifies. Rich Results Test validates individual URLs or pasted code snippets entered manually, renders JavaScript-generated markup to reflect what Googlebot sees, identifies specific errors preventing rich result eligibility with guidance for resolution, shows a preview of how rich results would appear in search, and validates against Google's specific requirements beyond generic schema.org specifications. Limitations include processing only one URL at a time with no bulk interface suited to validating thousands of pages, and focusing exclusively on rich snippet eligibility without comprehensive schema accuracy checking. Programmatic checks of pages in Google's index are instead available through the Search Console URL Inspection API. Australian businesses should use Rich Results Test for final validation confirming that critical pages qualify for rich snippets, whilst supplementing it with other tools for scale validation and comprehensive error detection.

Google Search Console enhancement reports provide field data showing actual rich snippet performance across complete websites rather than validation tool predictions. Enhancement reports show which pages successfully generated rich snippets in search results during recent periods, identify pages with valid markup that didn't generate rich results (revealing quality or competitive issues), display error and warning counts across page types to enable pattern identification, and track trends showing whether implementation quality improves or degrades over time. Search Console represents the most important validation data source because it reflects actual search result behavior rather than theoretical eligibility. Its reporting is delayed, however, so problems appear days or weeks after implementation changes, which makes proactive validation necessary to catch problems before Search Console detects them.

Screaming Frog structured data extraction enables bulk validation across thousands of URLs identifying patterns that manual testing cannot efficiently address. Screaming Frog crawls complete websites or specified sections, extracts all schema markup regardless of implementation method, validates syntax across crawled pages identifying errors and warnings, exports structured data for external analysis and comparison, and enables filtering by schema type, error pattern, or page category facilitating targeted problem identification. Configuration includes setting crawl depth and URL limits, enabling JavaScript rendering for client-side markup detection, configuring validation rules and strictness levels, and exporting data in formats enabling further analysis. Australian businesses with large websites benefit from Screaming Frog's ability to validate complete implementations revealing errors affecting specific categories, templates, or content variations that random sampling misses entirely.

Schema Markup Validator provides detailed syntax checking against schema.org specifications identifying technical errors in JSON-LD, Microdata, and RDFa implementations. The validator parses markup extracting structured data into human-readable format, validates against schema.org specifications checking property names, required fields, and data types, provides detailed error messages explaining specific problems, and enables testing of raw markup without requiring live URLs facilitating development testing before deployment. Schema Markup Validator complements Google's tools by validating comprehensive syntax beyond only rich snippet requirements catching errors that might not immediately affect rich results but indicate implementation quality problems requiring resolution.

Custom validation scripts enable businesses with specific requirements to build tailored testing matching their unique implementation patterns and quality standards. Custom scripts typically written in Python, JavaScript, or other languages can crawl websites extracting schema markup, validate against custom rules beyond standard specifications, check semantic accuracy comparing markup against page content, integrate with CMS or product databases validating markup reflects current data, and automate validation as part of deployment pipelines preventing invalid markup reaching production. Australian businesses with development resources benefit from custom validation enabling checks that generic tools don't support including brand-specific requirements, industry compliance validation, and business rule enforcement that off-the-shelf tools cannot implement.

Merkle Schema Markup Generator and Validator provides user-friendly schema creation with built-in validation checking markup as it's generated. The tool generates common schema types through guided interfaces, validates generated markup against specifications, provides copy-paste JSON-LD output for implementation, and offers educational context explaining property purposes and requirements. Merkle's tool suits Australian businesses without technical expertise needing to create and validate schema markup without writing code manually, though it doesn't provide scale validation across existing implementations requiring combination with crawling tools for comprehensive website auditing.

Systematic Validation Workflow for Enterprise Implementations

A structured approach ensures comprehensive validation across large websites, identifying all error categories without requiring manual testing of every individual page.

Initial crawl and inventory establishes baseline understanding of schema implementation scope before validation begins. Crawl process includes scanning complete website or defined sections with schema markup, identifying which pages contain structured data and which schema types are implemented, counting total pages by schema type enabling validation sampling strategies, documenting implementation methods including server-side versus client-side rendering, and establishing baseline for comparison after error correction measuring improvement. Initial inventory reveals implementation patterns including which templates contain schema, whether markup is consistently present across expected pages, and whether unexpected schema types appear requiring investigation. Australian businesses should complete comprehensive inventory before detailed validation preventing wasted effort validating pages that don't implement schema or missing entire page categories that inventory would reveal.

Schema type segmentation organises validation by markup type enabling type-specific validation rules and error prioritisation. Segmentation includes grouping Product schema pages separately from Article, LocalBusiness, Event, and other types, analysing each type independently because validation requirements differ substantially, prioritising validation based on search feature importance and traffic value, and documenting which schema types are business-critical versus experimental implementations requiring different validation rigor. Product schema for e-commerce businesses demands extremely high validation standards because product rich results directly affect revenue whilst experimental schema types warrant less intensive validation during pilot phases. Australian businesses should focus validation effort proportional to business impact rather than treating all schema types equally regardless of their commercial significance.

Template-based sampling strategy validates representative pages from each template variation rather than requiring validation of every individual page. Sampling includes identifying distinct templates within each schema type, selecting sample pages representing each template variation and attribute combination, validating sample pages thoroughly identifying template-level patterns, and extrapolating errors found in samples to affected population estimating total impact. Efficient sampling requires 20 to 50 pages per template variation providing confidence that detected errors represent systematic problems whilst avoiding redundant validation of thousands of pages with identical markup patterns. Template-based sampling enables 10,000-page implementations to be comprehensively validated through testing of several hundred representative pages rather than requiring validation of complete catalog.
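The sampling and extrapolation steps can be sketched in a few lines of Python. This is an illustration under assumptions rather than a prescribed method: the template key for each URL is assumed to come from your own crawl data (for example a URL pattern or CMS template name), and the function names are hypothetical.

```python
import random
from collections import defaultdict


def sample_by_template(pages, per_template=20, seed=0):
    """pages: iterable of (url, template_key) pairs.

    Returns {template_key: [sampled urls]} with up to per_template URLs each.
    """
    groups = defaultdict(list)
    for url, template in pages:
        groups[template].append(url)

    rng = random.Random(seed)  # fixed seed keeps the sample reproducible between audits
    return {
        template: rng.sample(urls, min(per_template, len(urls)))
        for template, urls in groups.items()
    }


def estimate_affected(sample_size, sample_errors, population):
    """Scale the error rate seen in a sample up to the template's full page count."""
    if sample_size == 0:
        return 0
    return round(population * sample_errors / sample_size)
```

For example, if 5 of 20 sampled pages from a template used by 15,000 products show the same error, `estimate_affected(20, 5, 15000)` gives an estimate of 3,750 affected pages. A simple proportional estimate like this is rough; where an error is truly template-level, every page sharing the triggering attribute combination is likely affected.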

Error categorisation and prioritisation organises identified problems by severity and business impact guiding correction sequencing. Categorisation includes separating critical errors preventing rich snippet eligibility from warnings suggesting improvements, identifying template-level errors affecting hundreds or thousands of pages versus isolated page-specific problems, prioritising corrections based on page traffic and conversion value, and distinguishing syntax errors from semantic accuracy problems requiring different resolution approaches. Error prioritisation prevents wasting effort correcting minor warnings on low-traffic pages whilst critical errors affecting high-value pages remain unaddressed. Australian businesses should fix critical template-level errors affecting rich snippet eligibility on high-traffic pages first before addressing minor issues on less important pages ensuring maximum business impact from limited development resources.
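One possible way to encode this ordering is a sort key that ranks critical errors first, then template-level errors, then traffic. The sketch below is an assumption-laden example: the `SchemaIssue` fields, the 100-page threshold for calling an error "template-level", and the traffic metric are all hypothetical and would need to match your own data.

```python
from dataclasses import dataclass


@dataclass
class SchemaIssue:
    name: str
    severity: str          # "critical" (blocks eligibility) or "warning"
    pages_affected: int
    monthly_sessions: int  # traffic to the affected pages (hypothetical metric)


def prioritise(issues, template_threshold=100):
    """Order issues: critical first, then template-level, then highest traffic."""
    def sort_key(issue):
        return (
            issue.severity != "critical",               # False sorts before True
            issue.pages_affected < template_threshold,  # template-level errors first
            -issue.monthly_sessions,                    # then highest traffic
        )
    return sorted(issues, key=sort_key)
```

With this key, a critical error on 15,000 pages is scheduled ahead of a critical error on 30 pages, and both come before a warning, even if the warning touches pages with more traffic.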

Iterative correction and revalidation ensures fixes actually resolve problems without creating new errors through incomplete corrections or unintended consequences. Correction process includes implementing fixes in development environments, validating corrections in staging before production deployment, monitoring Search Console and validation tools post-deployment confirming problems resolved, and conducting regression testing ensuring fixes didn't break previously working markup. Iterative approach prevents the common pattern where attempted fixes create new problems requiring additional correction cycles consuming more effort than systematic careful implementation would have required initially. Australian businesses should validate after every correction rather than accumulating fixes and validating in large batches that make debugging failure causes extremely difficult.

Ongoing monitoring cadence maintains validation quality after initial implementation preventing degradation through subsequent website changes. Monitoring includes weekly Search Console review checking for new enhancement report errors, monthly automated crawling validating sample pages from each template type, quarterly comprehensive validation repeating initial audit process, and real-time validation for high-value page updates before publication. Continuous monitoring catches problems early when they affect limited pages rather than discovering after weeks or months when thousands of pages contain errors requiring extensive correction. Australian businesses treating validation as ongoing discipline rather than one-time project maintain consistently high schema quality that one-time validation followed by neglect cannot achieve.
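A monitoring run is most useful when it is compared against the previous one. As a minimal sketch, assuming each crawl has been summarised into a mapping of template name to error count (a hypothetical format), the comparison might look like this:

```python
def find_regressions(previous, current, min_increase=10):
    """Compare two crawl summaries of the form {template: error_count}.

    Returns {template: increase} for templates whose error count rose by at
    least min_increase since the previous crawl. Templates that are new in
    the current crawl are compared against zero.
    """
    regressions = {}
    for template, count in current.items():
        delta = count - previous.get(template, 0)
        if delta >= min_increase:
            regressions[template] = delta
    return regressions
```

The `min_increase` threshold is an arbitrary illustrative default; the point is to alert on changes between runs rather than on absolute counts, so that a template update that breaks markup is noticed while few pages are affected.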

Common Structured Data Errors and Resolution

Specific error patterns appear frequently across implementations requiring recognition and systematic correction rather than case-by-case debugging.

Missing required properties represent the most common validation failure, where schema markup omits properties that Google requires for rich result eligibility. Product schema is frequently missing aggregateRating or review properties (preventing rating stars in search results), offers (preventing price display), or availability (preventing stock status indication). Article schema is commonly missing datePublished or dateModified (preventing proper content freshness signals), author or publisher (preventing byline display), or image (preventing visual content in search results). Resolution requires identifying which properties are actually required versus merely recommended for specific rich result types, adding properties through template modifications so that all future pages include them, and backfilling missing properties for existing pages, either manually for small counts or programmatically for large datasets.
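A property check of this kind is straightforward to automate once the markup has been parsed. In the sketch below, the `RULES` table is purely illustrative and is not Google's actual requirement list; it should be filled in from Google's current structured data documentation for each rich result type. The function name is hypothetical.

```python
# Illustrative rule table only: replace with the required and recommended
# properties from Google's documentation for each rich result type.
RULES = {
    "Product": {
        "required": ["name", "offers"],
        "recommended": ["aggregateRating", "review", "image"],
    },
    "Article": {
        "required": ["headline", "datePublished"],
        "recommended": ["dateModified", "author", "image"],
    },
}


def check_properties(item, rules=RULES):
    """Return missing required props as errors and missing recommended props as warnings."""
    schema_type = item.get("@type")
    if not isinstance(schema_type, str) or schema_type not in rules:
        return {"errors": [], "warnings": []}

    def missing(props):
        # Treat absent or empty values as missing.
        return [p for p in props if item.get(p) in (None, "", [], {})]

    rule = rules[schema_type]
    return {
        "errors": missing(rule["required"]),
        "warnings": missing(rule["recommended"]),
    }
```

Separating errors from warnings mirrors the required versus recommended distinction, so the output can feed directly into prioritisation.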

Property value format errors occur when required properties are present but contain incorrectly formatted values. Common format errors include dates not following ISO 8601 format standards, prices missing currency codes or using incorrect formats, URLs containing relative rather than absolute paths, and numeric values formatted as strings rather than numbers. Format errors particularly affect date properties in Article and Event schema where Google requires YYYY-MM-DD or complete ISO 8601 formats rejecting alternative date representations that humans interpret correctly but structured data parsing fails. Resolution includes implementing proper formatting in templates generating schema, validating format correctness through test cases, and data cleansing for existing implementations converting incorrect formats to proper specifications.
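These format checks lend themselves to small test helpers. The following is a sketch using Python's standard library; the helper names are hypothetical, the currency check only verifies the three-uppercase-letter shape rather than looking the code up in the ISO 4217 list, and the price check reflects the stricter expectations described above rather than everything schema.org technically permits.

```python
import re
from datetime import date, datetime
from urllib.parse import urlparse


def is_iso8601(value):
    """True for YYYY-MM-DD dates or ISO 8601 date-times such as 2024-03-05T09:30:00+11:00."""
    if not isinstance(value, str):
        return False
    try:
        if re.fullmatch(r"\d{4}-\d{2}-\d{2}", value):
            date.fromisoformat(value)
        else:
            # Older Python versions do not accept a trailing "Z", so normalise it.
            datetime.fromisoformat(value.replace("Z", "+00:00"))
        return True
    except ValueError:
        return False


def is_valid_price(value):
    """Accept non-negative numbers or plain digit strings such as "49.95" (no symbols)."""
    if isinstance(value, bool):
        return False
    if isinstance(value, (int, float)):
        return value >= 0
    return isinstance(value, str) and re.fullmatch(r"\d+(\.\d+)?", value) is not None


def is_absolute_url(value):
    if not isinstance(value, str):
        return False
    parsed = urlparse(value)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


def check_offer(offer):
    """Return the names of Offer properties whose values are badly formatted."""
    problems = []
    if not is_valid_price(offer.get("price")):
        problems.append("price")
    if not re.fullmatch(r"[A-Z]{3}", str(offer.get("priceCurrency", ""))):
        problems.append("priceCurrency")
    if "url" in offer and not is_absolute_url(offer["url"]):
        problems.append("url")
    return problems
```

Running helpers like these as test cases against template output catches format regressions before deployment rather than after Search Console reports them.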

Content-markup mismatches create schema that's technically valid but semantically inaccurate because markup data doesn't match visible page content. Typical examples include Product schema declaring "In Stock" whilst the page displays "Out of Stock" to users, prices in schema differing from displayed prices, business hours in LocalBusiness schema contradicting hours shown on pages, and review counts in aggregateRating not matching the review quantities actually displayed. Validation tools cannot detect these mismatches, but Google identifies them through content comparison algorithms, potentially triggering rich snippet removal despite technical validity. Resolution requires implementing dynamic schema generation that pulls data from the same sources that generate visible content to keep the two synchronised, regular auditing that compares markup against content to identify mismatches, and fixing data source problems that provide incorrect information to both content and schema rendering.
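An audit for this kind of mismatch can compare each page's Offer markup against the record that drives the visible page. The sketch below assumes a hypothetical catalogue record with a numeric `price` and a boolean `in_stock`; real data sources will differ, and only two availability states are handled here.

```python
# Expected schema.org availability value for each stock state (two states only).
AVAILABILITY = {
    True: "https://schema.org/InStock",
    False: "https://schema.org/OutOfStock",
}


def find_mismatches(offer, record):
    """Compare a schema.org Offer dict against a source-of-truth record.

    record is a hypothetical catalogue row: {"price": float, "in_stock": bool}.
    Returns the names of fields where the markup disagrees with the record.
    """
    problems = []

    try:
        markup_price = float(offer.get("price"))
    except (TypeError, ValueError):
        markup_price = None
    if markup_price is None or abs(markup_price - record["price"]) > 0.005:
        problems.append("price")

    # Compare only the final path segment so "InStock", "http://schema.org/InStock"
    # and "https://schema.org/InStock" are all treated as the same declaration.
    expected = AVAILABILITY[record["in_stock"]].rsplit("/", 1)[-1]
    declared = str(offer.get("availability", "")).rsplit("/", 1)[-1]
    if declared != expected:
        problems.append("availability")

    return problems
```

A check like this catches the discontinued-product case described earlier, where markup still declares InStock after the catalogue has marked the item unavailable.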

Duplicate and conflicting markup creates confusion when multiple schema blocks on pages contain contradictory information. Multiple Product schema blocks for the same product with different prices or availability statuses, conflicting Article schema providing different publication dates, or overlapping LocalBusiness and Organization markup creating ambiguous business identity all prevent Google from confidently extracting structured data. Resolution includes auditing pages for multiple schema blocks, removing redundant markup when single authoritative implementation suffices, consolidating information when multiple blocks attempt to describe the same entity, and ensuring plugin-generated schema doesn't conflict with custom implementations by disabling redundant sources.
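Auditing for conflicting blocks can be partly automated by grouping a page's parsed JSON-LD by type and comparing key values. The sketch below is illustrative only: it assumes the blocks have already been extracted into Python dicts, checks just two fields (offer price and datePublished), and compares values as raw strings, so "49.95" and "49.950" would be reported as different.

```python
from collections import defaultdict


def _price(item):
    """Pull the price from an item's first offer, if there is one."""
    offers = item.get("offers")
    if isinstance(offers, list):
        offers = offers[0] if offers else None
    return offers.get("price") if isinstance(offers, dict) else None


def find_conflicts(blocks):
    """blocks: parsed JSON-LD dicts from a single page.

    Returns {schema_type: {field: set_of_distinct_values}} for types that
    appear more than once with disagreeing values.
    """
    by_type = defaultdict(list)
    for block in blocks:
        schema_type = block.get("@type")
        if isinstance(schema_type, str):
            by_type[schema_type].append(block)

    conflicts = {}
    for schema_type, items in by_type.items():
        if len(items) < 2:
            continue
        seen = {
            "price": {str(_price(i)) for i in items if _price(i) is not None},
            "datePublished": {str(i["datePublished"]) for i in items if "datePublished" in i},
        }
        differing = {field: values for field, values in seen.items() if len(values) > 1}
        if differing:
            conflicts[schema_type] = differing
    return conflicts
```

Duplicate blocks that agree are not reported as conflicts here, although they may still be worth consolidating to a single authoritative implementation.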

JavaScript rendering failures affect client-side generated schema that validation tools see but search engines sometimes don't. JavaScript errors preventing schema generation, slow rendering causing schema to load after search engine crawl timeouts, and conditional rendering that sometimes succeeds but occasionally fails create intermittent validation problems appearing correct in testing but failing in production. Resolution requires implementing server-side rendering for critical schema ensuring search engines reliably access it, comprehensive JavaScript error monitoring catching rendering failures affecting schema generation, and fallback mechanisms ensuring schema generates even when JavaScript fails or loads slowly due to network conditions.

Nesting and relationship errors create invalid structures when schema types are improperly nested or referenced. Product schema declaring Review as a standalone top-level block rather than nesting it under the product's review property (with individual ratings in reviewRating and summary ratings in aggregateRating), Article schema referencing author through an incorrect property structure, and Organization schema misusing address versus location properties all create structural validity issues. Resolution includes reviewing schema.org specifications for proper nesting requirements, validating that relationship properties reference appropriate schema types, and restructuring markup to follow documented patterns rather than guessing at logical but technically incorrect structures.

Maven Marketing Co. Structured Data Testing Services

Professional validation services ensure Australian businesses maintain schema quality across enterprise implementations through systematic testing and ongoing monitoring.

Comprehensive schema audit identifies all structured data across websites documenting implementation quality and improvement opportunities. Audit services include complete website crawling extracting all schema markup regardless of implementation method, validation against Google's specific requirements for rich snippet eligibility, error categorisation by severity and business impact, template pattern analysis identifying systematic versus isolated errors, and competitive benchmarking comparing implementation quality against industry peers. Comprehensive audits provide detailed reports prioritising corrections by potential traffic and conversion impact rather than arbitrary error counts enabling businesses to focus development resources on highest-return improvements.

Template-level validation and correction addresses systematic errors affecting multiple pages through single fixes rather than page-by-page corrections. Template services include identifying distinct templates within each schema type, validating template markup patterns, implementing corrections at template level automatically fixing hundreds or thousands of affected pages, regression testing ensuring corrections don't break other functionality, and documentation enabling internal teams to maintain template quality through future updates. Template-level approach delivers exponentially more efficient error resolution than page-by-page corrections that would never scale to enterprise implementations.

Ongoing monitoring and alerting maintains schema quality after initial audit and correction preventing degradation through subsequent changes. Monitoring services include weekly Search Console enhancement report review identifying new errors, monthly automated validation checking sample pages from each template type, immediate alerts when critical errors emerge affecting high-traffic pages, quarterly comprehensive revalidation ensuring sustained quality, and priority escalation when error rates exceed acceptable thresholds requiring immediate attention. Continuous monitoring prevents the pattern where initial schema implementation maintains quality briefly before gradual degradation through subsequent website updates that nobody monitors for schema impact.

Rich snippet performance tracking connects schema implementation quality to actual search result appearances and organic traffic impact. Performance tracking includes Search Console data analysis showing rich snippet generation rates by page type, click-through rate comparison between pages with and without rich results, competitive monitoring tracking competitor rich snippet appearances for target queries, A/B testing measuring traffic and conversion impact of rich results, and ROI calculation quantifying business value justifying ongoing schema maintenance investment. Performance tracking demonstrates business value beyond technical validation scores ensuring schema investment receives appropriate prioritisation within overall marketing strategy.

Frequently Asked Questions

How can Australian businesses with tens of thousands of pages efficiently validate structured data without manually testing every URL?

Efficient scale validation requires template-based sampling combined with automated crawling rather than attempting exhaustive page-by-page validation. Identify distinct templates within each schema type including product categories, article formats, and location variations that share common markup patterns. Validate 20 to 50 representative pages from each template variation thoroughly testing different attribute combinations, content variations, and edge cases that template might encounter. Use automated crawling tools including Screaming Frog or custom scripts to validate schema presence and basic syntax across complete website whilst focusing detailed validation on template samples. Extrapolate errors discovered in template samples to affected page populations estimating total impact. This approach enables 50,000-page implementations to be comprehensively validated through testing several hundred representative pages rather than requiring validation of complete catalog consuming thousands of hours. Monitor Search Console enhancement reports identifying page types with high error rates warranting additional template validation. Prioritise validation effort on high-traffic, high-conversion page types where schema impact on organic performance is greatest rather than treating all page types equally regardless of business value.

What's the difference between passing Google's Rich Results Test and actually generating rich snippets in search results?

Rich Results Test confirms technical eligibility meaning markup meets Google's structural requirements and could generate rich results if displayed. However, Google makes dynamic decisions about whether to actually show rich snippets based on quality signals, competitive factors, query context, and device type that validation tools don't assess. Factors preventing rich snippet display despite passing validation include insufficient quality signals such as lacking reviews or ratings, competitive dynamics where competing pages have stronger signals, query intent where Google determines rich results aren't helpful, policy violations detected through manual review but not automated validation, and content-markup mismatches where visible page content contradicts schema declarations. Australian businesses should validate both technical eligibility through Rich Results Test and actual rich snippet generation through Search Console enhancement reports and manual SERP monitoring. Large gaps between validation passing and actual rich snippet appearances indicate quality issues beyond technical implementation requiring content improvements, competitive strengthening, or semantic accuracy corrections that validation tools alone don't reveal.

How should Australian businesses handle schema validation when using multiple plugins or systems that generate structured data potentially creating conflicts?

Multiple schema sources frequently create duplicate or conflicting markup that requires audit and consolidation. First, identify all markup sources, including theme-generated schema, SEO plugin implementations, CMS native functionality, third-party integrations, and custom implementations, and document what each source generates. Second, check pages for duplicate schema blocks using validation tools that display all extracted structured data, which reveals overlaps and conflicts. Third, prioritise sources by establishing which implementation should be authoritative for each schema type based on accuracy, completeness, and maintainability. Fourth, disable redundant sources so that conflicting markup is removed and a single authoritative implementation remains per schema type. Fifth, fill gaps where disabled sources provided properties that the authoritative source doesn't, by enhancing the remaining implementation rather than maintaining multiple partial sources. Australian businesses should prefer custom implementations or comprehensive SEO plugins over theme-generated schema, because themes rarely provide the control that proper optimisation needs. When using plugins, configure them carefully: disable automatic generation for schema types you implement manually to prevent conflicts, whilst leveraging plugin generation for types you don't manually optimise. Test thoroughly after consolidation to confirm that the removed sources didn't provide properties the remaining implementation omits, since incomplete consolidation can inadvertently degrade markup.
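
The duplicate check in the second step can be approximated with a short script. The following is a minimal sketch using only the Python standard library. It assumes the markup is JSON-LD in `<script type="application/ld+json">` blocks that are already present in the HTML you pass in. It does not handle Microdata or RDFa, and the class and function names are illustrative.

```python
import json
from collections import Counter
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the text of every JSON-LD script block in a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def find_duplicate_types(html):
    """Return {schema type: count} for types declared more than once."""
    parser = JsonLdExtractor()
    parser.feed(html)
    counts = Counter()
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed block; report it separately in a real audit
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            # A block may wrap several entities in an @graph array.
            for node in item.get("@graph", [item]):
                if not isinstance(node, dict):
                    continue
                declared = node.get("@type")
                for name in declared if isinstance(declared, list) else [declared]:
                    if name:
                        counts[name] += 1
    return {name: n for name, n in counts.items() if n > 1}
```

A repeated type is not always a conflict. A category page can legitimately carry several Product entities, for example, so treat the output as a list of pages to inspect rather than a list of confirmed errors.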

What should Australian businesses do when schema validates perfectly in testing tools but Search Console shows errors or warnings?

When validation tools pass but Search Console reports errors, several possible causes need investigation beyond surface-level testing. First, verify whether the Search Console errors affect the same pages that were validated or a different subset. Export the affected URLs from Search Console and validate those specific pages, rather than testing only the homepage or high-traffic pages that might not represent the complete implementation. Second, check how the validation tool handles JavaScript rendering, because Search Console reflects what Googlebot sees whilst some validation tools process only the initial HTML and miss client-side generated markup. Third, investigate intermittent errors where schema usually generates correctly but occasionally fails due to JavaScript errors, data source failures, or conditional rendering that testing doesn't trigger. Fourth, review semantic accuracy beyond syntax: check whether marked-up prices match displayed prices, whether availability reflects actual inventory, and whether dates follow proper formats, because content-markup mismatches are something syntax validation cannot identify. Fifth, consider manual actions, where Search Console flags policy violations such as fake reviews or misleading content that automated validation tools don't detect. Diagnose systematically by validating the exact URLs Search Console identifies as problematic, inspecting the rendered markup with browser developer tools, and comparing the markup against visible content to confirm the semantic accuracy that syntax validation alone cannot assess.
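
The semantic check in the fourth step can be sketched as a comparison between one Product entity and the values shown on the page. This is an illustrative example, not a complete validator. It assumes you have already extracted the JSON-LD object, the displayed price text, and the displayed stock status. The function names and issue codes are hypothetical.

```python
import re
from decimal import Decimal, InvalidOperation


def parse_price(text):
    """Turn a displayed price such as '$1,299.00' into a Decimal, or None."""
    cleaned = re.sub(r"[^\d.]", "", str(text))
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def check_offer_consistency(product, visible_price, visible_in_stock):
    """Return issue codes where the markup contradicts the visible page."""
    offers = product.get("offers") or {}
    # For simplicity, only the first offer is checked when several are listed.
    offer = offers[0] if isinstance(offers, list) and offers else offers
    if not isinstance(offer, dict):
        offer = {}

    issues = []
    if not offer.get("priceCurrency"):
        issues.append("missing_currency")
    if parse_price(offer.get("price", "")) != parse_price(visible_price):
        issues.append("price_mismatch")
    markup_in_stock = str(offer.get("availability", "")).endswith("InStock")
    if markup_in_stock != visible_in_stock:
        issues.append("availability_mismatch")
    return issues
```

Run against the exact URLs exported from Search Console, a check like this can surface problems such as a discontinued product still declaring "InStock" or an offer with no currency code.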

How frequently should Australian businesses revalidate structured data after initial implementation and correction?

Validation frequency depends on how often the website changes and how complex the structured data is, with dynamic implementations needing more frequent checks than static sites. As a minimum, review Search Console enhancement reports weekly for new errors or warnings, run automated sampling validation monthly on representative pages from each template type, and repeat the initial audit process across the complete implementation quarterly. Increase frequency for e-commerce sites with frequent product updates, news sites that publish continuously, and implementations using complex JavaScript rendering where changes risk breaking markup. Validate critical pages before publication through pre-deployment testing that catches errors before they reach production. Consider continuous monitoring through custom scripts that validate sample pages daily, identifying problems within hours rather than waiting weeks for Search Console reporting. Australian businesses that treat schema validation as an ongoing discipline rather than a one-time project maintain a consistently high quality that annual revalidation cannot achieve. Website changes throughout the year continuously risk introducing errors, and prompt detection stops those errors from spreading across thousands of pages before anyone notices.
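
The minimum cadence above can be encoded as a small schedule that a daily job consults. This is a hypothetical sketch: the check names are invented for the example, and the monthly and quarterly intervals are approximated as 30 and 90 days.

```python
from datetime import date

# Minimum intervals in days, taken from the cadence described above.
CADENCE_DAYS = {
    "search_console_review": 7,        # weekly enhancement report review
    "template_sample_validation": 30,  # monthly automated sampling
    "full_revalidation": 90,           # quarterly comprehensive audit
}


def checks_due(last_run, today):
    """Return the names of checks that have never run or are overdue.

    last_run maps a check name to the date it last completed.
    """
    return sorted(
        name
        for name, interval in CADENCE_DAYS.items()
        if name not in last_run or (today - last_run[name]).days >= interval
    )
```

Sites with frequent product updates or heavy JavaScript rendering would shorten these intervals rather than add new check types.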

Can Australian businesses rely exclusively on Google Search Console for structured data validation, or are additional validation tools necessary?

Search Console provides essential field data showing actual rich snippet performance, but it cannot replace proactive validation tools, for several reasons. Reporting delays mean problems appear days or weeks after an implementation change rather than immediately, which rules out preventive testing before production deployment. Search Console focuses on rich snippet eligibility rather than comprehensive schema validation, so it misses errors that don't immediately prevent rich results but still indicate quality problems. It doesn't provide detailed debugging information for complex errors, so additional tools are needed to reveal exact property issues, format problems, or syntax errors. It also samples pages rather than validating complete implementations, potentially missing errors that affect only specific page subsets or templates. The optimal strategy combines Search Console field data on actual search result behaviour with proactive validation tools: the Rich Results Test for final validation, Screaming Frog for scale validation across complete websites, and the Schema Markup Validator for detailed syntax checking. Use proactive validation during development and before deployment to prevent problems, then monitor Search Console to confirm that production behaviour matches testing expectations. Search Console should be the primary data source for understanding actual search impact, whilst validation tools should be the primary means of identifying and debugging the specific technical problems that Search Console reports without detailed diagnostic information.

What structured data testing should Australian businesses conduct specifically for mobile versus desktop given mobile-first indexing?

Mobile-first indexing means Google primarily uses the mobile version of a page for indexing and ranking, which makes mobile schema validation critical regardless of the desktop implementation. However, validation priorities differ between platforms, reflecting technical and UX differences. For mobile validation, specifically test JavaScript rendering, because client-side schema may not generate before rendering times out on slower mobile page loads. Test responsive design impacts on schema visibility, because content hidden on mobile should not keep its corresponding markup. Test page speed effects on schema generation, because slow mobile performance may prevent markup from rendering at all. Also test any markup for events and actions that the mobile template renders differently from desktop. For desktop validation, confirm parity so that desktop pages aren't missing schema that mobile provides, validate tablet-specific implementations separately because they sometimes use different templates from phone and desktop, and test cross-device consistency so that the same entity uses identical schema identifiers on mobile and desktop, enabling Google to understand that both represent the same business, product, or article. Australian businesses should prioritise mobile validation because the mobile version is the one Google primarily indexes, whilst treating desktop validation as a consistency check that both versions provide equivalent structured data, which prevents the confusion about entity identity that inconsistent markup creates.
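
The cross-device consistency check can be sketched as a set comparison of the entities each version declares. This is a minimal example that assumes you have already extracted the JSON-LD entities from the rendered mobile and desktop versions of the same URL. The function names are illustrative, and entities are matched on `@type` and `@id` only.

```python
def entity_keys(nodes):
    """Reduce a list of JSON-LD entities to a set of (type, id) pairs."""
    keys = set()
    for node in nodes:
        if not isinstance(node, dict):
            continue
        declared = node.get("@type") or ""
        if isinstance(declared, list):
            declared = ",".join(sorted(declared))  # normalise multi-type nodes
        keys.add((declared, node.get("@id") or ""))
    return keys


def compare_versions(mobile_nodes, desktop_nodes):
    """Report entities that appear on one version of a page but not the other."""
    mobile = entity_keys(mobile_nodes)
    desktop = entity_keys(desktop_nodes)
    return {
        "missing_on_mobile": sorted(desktop - mobile),
        "missing_on_desktop": sorted(mobile - desktop),
    }
```

Because the mobile version is the one Google primarily indexes, anything listed under `missing_on_mobile` deserves attention first.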

Professional Structured Data Testing Ensures Rich Snippet Success

Structured data testing at enterprise scale transforms schema implementation from hopeful deployment into a validated system that generates reliable rich snippet appearances. Systematic validation identifies and corrects errors before they eliminate search feature eligibility and waste development investment.

The testing frameworks outlined in this guide, including automated validation tools, template-level sampling strategies, ongoing monitoring processes, and error categorisation methodologies, give Australian businesses a comprehensive foundation for maintaining schema quality across thousands of pages. The alternative is assuming implementation quality from limited manual spot-checking, which is impractical at enterprise scale.

Australian businesses working with Maven Marketing Co. benefit from professional structured data audits that reveal complete error inventories across implementations, template-level corrections that fix systematic problems efficiently, and ongoing monitoring that maintains quality as the website evolves. Together these sustain the rich snippet generation that drives organic traffic improvements and justifies the investment in schema implementation.

Ready to validate structured data across your complete website and ensure consistent rich snippet eligibility? Maven Marketing Co. provides comprehensive structured data testing services, including enterprise-scale validation audits, template-level error correction, ongoing monitoring, and rich snippet performance tracking, so that your schema implementation delivers the search visibility advantages that proper validation maintains and incomplete testing undermines.