Key Takeaways

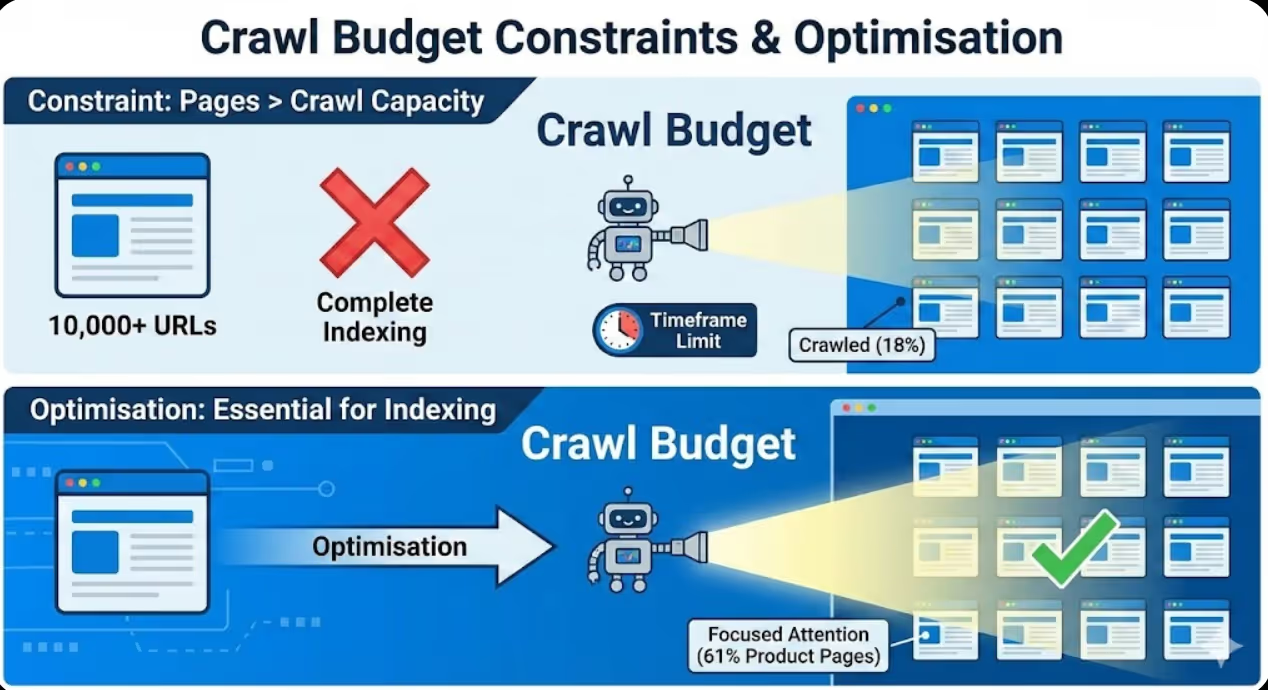

- Crawl budget constraints affect websites with approximately 10,000+ URLs where total page count exceeds what search engines will crawl within practical timeframes, making optimisation essential for complete indexing

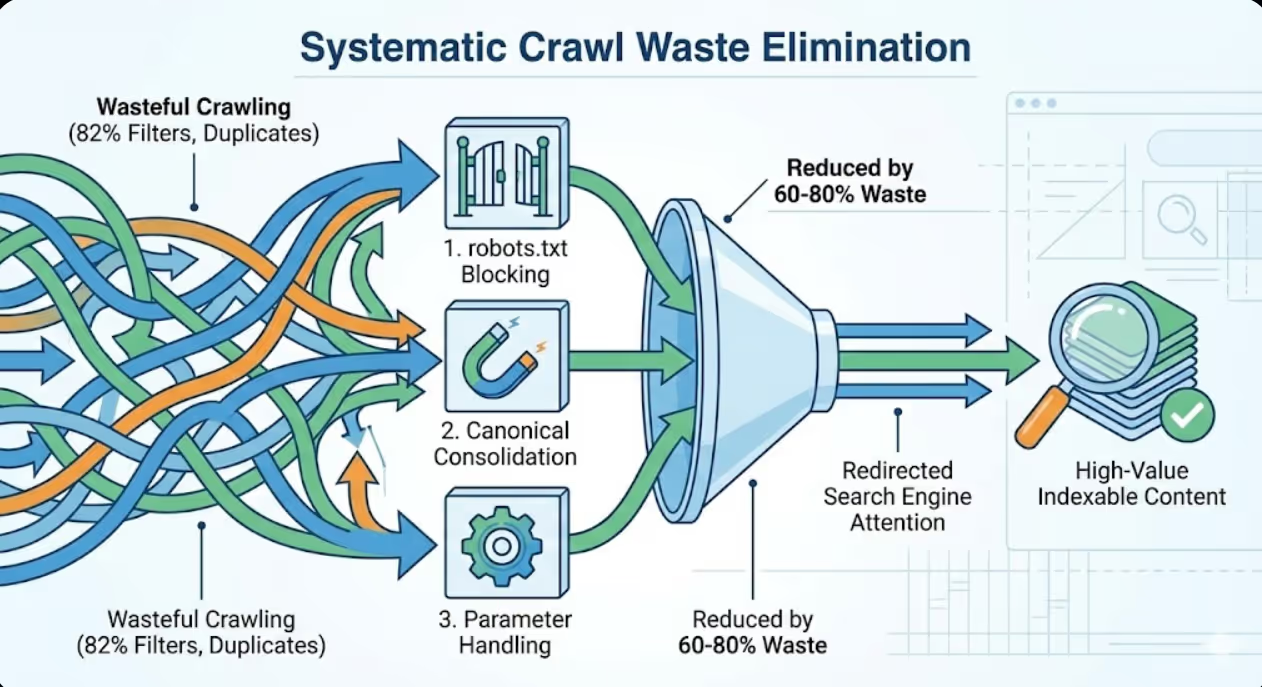

- Systematic crawl waste elimination through robots.txt blocking, canonical consolidation, and parameter handling can reduce wasteful crawling by 60-80% redirecting search engine attention toward high-value indexable content

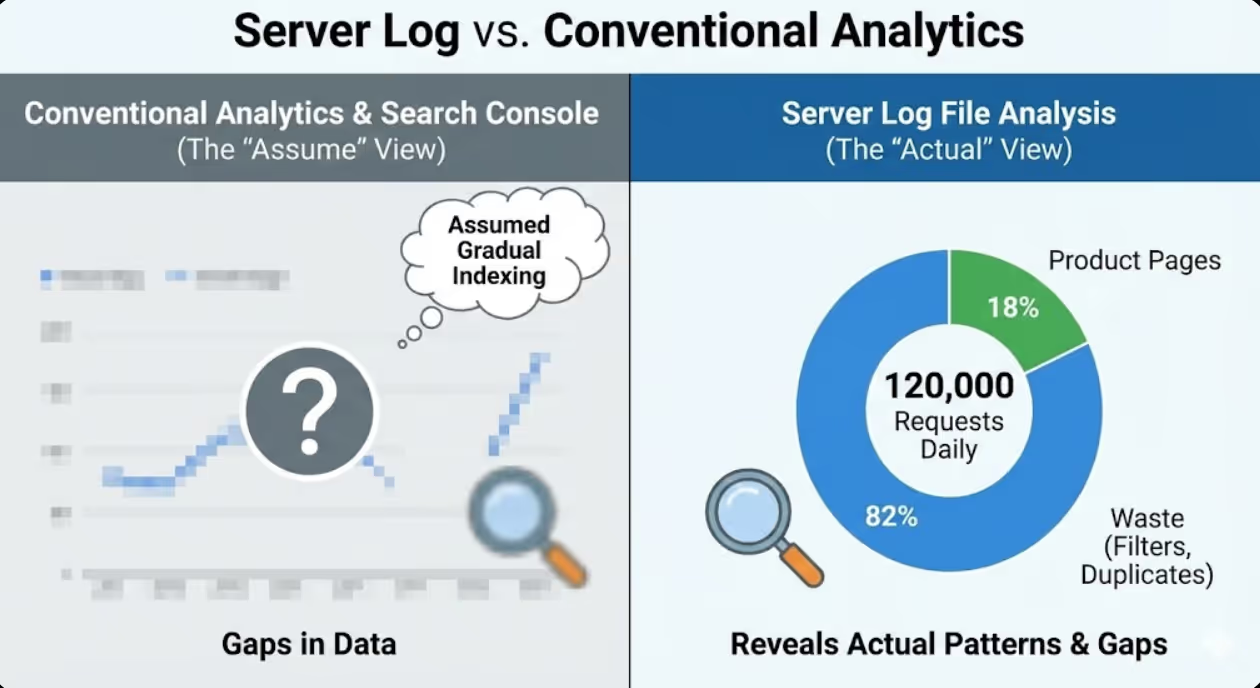

- Server log file analysis reveals actual crawling behaviour patterns exposing gaps between intended and actual crawl distribution that conventional analytics and Search Console data cannot adequately diagnose

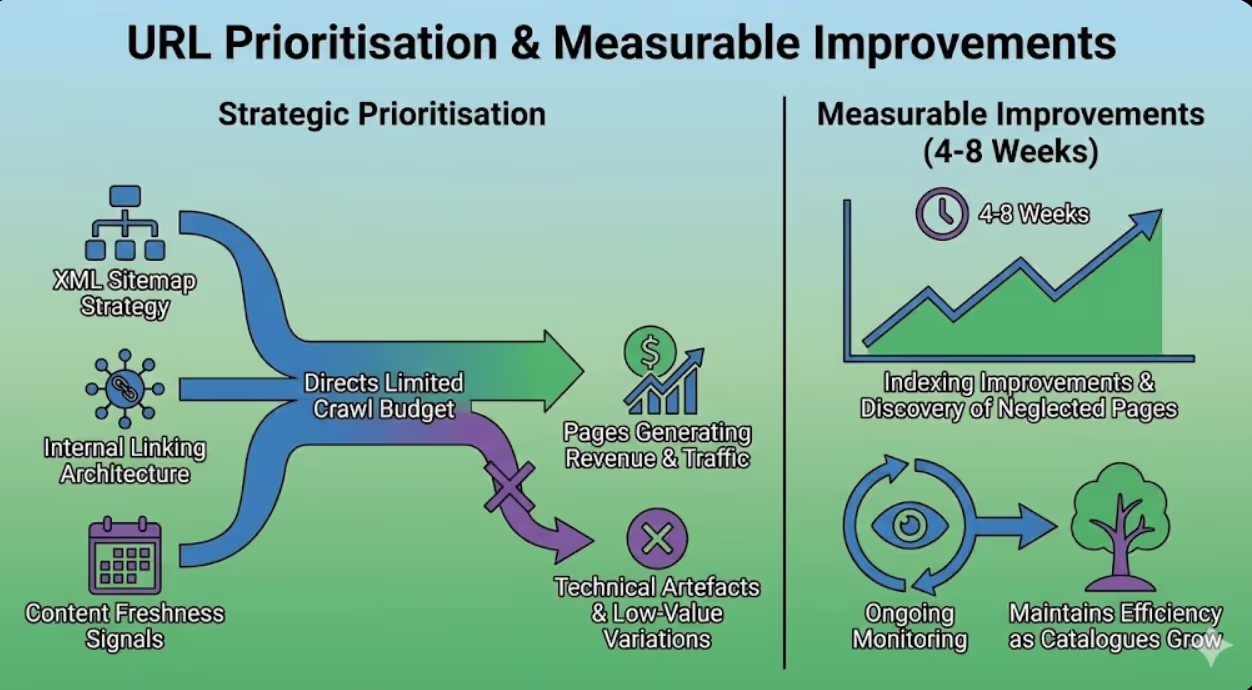

- URL prioritisation through XML sitemap strategy, internal linking architecture, and strategic content freshness signals directs limited crawl budget toward pages generating revenue and traffic rather than technical artefacts and low-value variations

- Crawl budget optimisation delivers measurable indexing improvements within 4-8 weeks as search engines discover previously neglected pages whilst ongoing monitoring maintains efficiency as catalogues grow and evolve

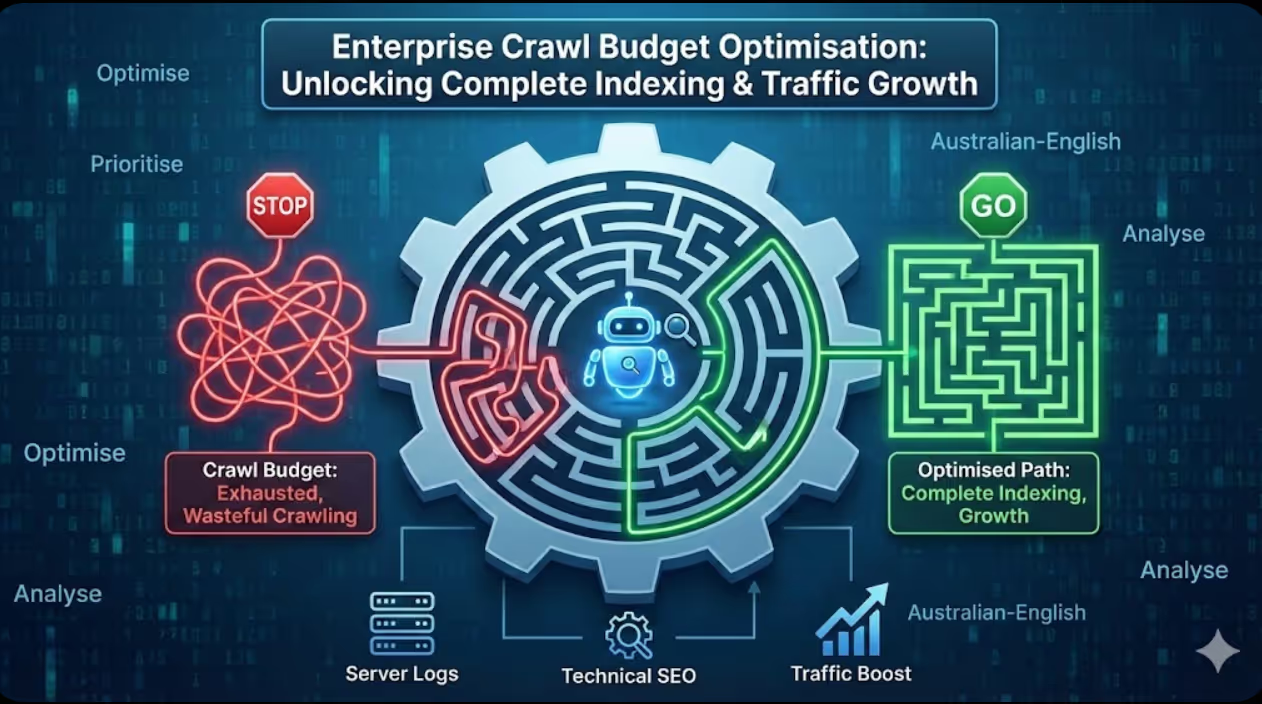

An Australian e-commerce retailer with 85,000 product pages experienced persistent indexing problems where only 42,000 pages appeared in Google's index despite months of technical SEO efforts including proper sitemaps, clean internal linking, and no obvious technical barriers preventing crawling. The SEO team assumed Google was gradually indexing the catalogue and waited for natural indexing progression that never materialised.

Server log analysis revealed the actual problem. Googlebot was making approximately 120,000 requests daily to the website but only 18% of those requests targeted actual product pages warranting indexing. The remaining 82% crawled faceted navigation filter combinations creating millions of duplicate URL variations, paginated archive sequences extending hundreds of pages deep despite limited content justifying such pagination, session ID parameters creating infinite URL variations, old blog archive pages from years earlier consuming disproportionate attention, and excessive recrawling of already-indexed homepage and high-level category pages that changed infrequently.

According to research from DeepCrawl, enterprise websites waste an average of 51% of crawl budget on non-indexable pages including duplicates, redirects, and blocked content, demonstrating that crawl efficiency problems affect the majority of large sites requiring systematic optimisation rather than representing isolated implementation failures.

Maven Marketing Co. implemented comprehensive crawl budget optimisation including robots.txt directives blocking filter parameter patterns preventing wasteful crawling, canonical tag implementation consolidating duplicate variations, URL parameter configuration in Search Console instructing Google how to handle filtering and pagination, strategic noindex implementation on infinite crawl spaces, internal linking architecture improvements prioritising product pages, XML sitemap optimisation including only indexable high-value pages, and server performance improvements enabling faster crawling within allocated budget.

Within six weeks, wasteful crawling decreased 73% as Googlebot stopped discovering and crawling low-value filter combinations and parameter variations. Crawl distribution shifted dramatically with product pages receiving 61% of crawl requests compared to previous 18%. Indexed page count increased from 42,000 to 76,000 within twelve weeks as newly efficient crawling discovered previously neglected products. Organic traffic increased 47% as the additional 34,000 indexed products began generating search visibility and conversions that their previous non-indexed status had prevented.

The transformation didn't require new content, additional links, or increased marketing spend. It required understanding precisely how search engines were wasting crawl budget and implementing technical optimisations redirecting that budget toward revenue-generating pages that had existed all along but remained undiscovered due to inefficient crawling.

Understanding Crawl Budget Fundamentals

Crawl budget optimisation requires foundational understanding of what crawl budget means, how search engines allocate it, and why it matters for enterprise websites differently than small sites.

Crawl budget definition describes the number of URLs search engines will crawl on a website within a given timeframe, determined by crawl rate limits preventing server overload and crawl demand reflecting how much search engines want to crawl based on perceived site quality, update frequency, and authority. Google doesn't publish specific crawl budget allocations for individual websites but enterprise sites typically receive anywhere from tens of thousands to millions of crawl requests daily depending on size, authority, and perceived value. Crawl budget becomes constraining when total URL count exceeds what search engines will crawl within reasonable timeframes creating competition between pages for limited crawling resources.

When crawl budget matters depends primarily on website size with different thresholds affecting different businesses. Websites with under 1,000 pages rarely face crawl budget constraints because search engines can efficiently crawl complete small sites multiple times weekly without resource limitations. Websites with 1,000 to 10,000 pages occasionally encounter crawl budget issues particularly if technical problems create excessive duplicate content or infinite crawl spaces. Websites with 10,000 to 100,000 pages consistently face crawl budget constraints requiring optimisation ensuring complete indexing. Websites exceeding 100,000 pages almost certainly require sophisticated crawl budget management because search engines cannot feasibly crawl complete massive catalogues frequently making URL prioritisation essential. Enterprise businesses should assess crawl budget optimisation priority based on the ratio between total URL count and indexed page count in Search Console with substantial gaps indicating crawl budget constraints preventing complete indexing.

Crawl rate versus crawl demand represent the two factors determining effective crawl budget allocation. Crawl rate limits how many requests per second search engines make preventing server overload and ensuring site performance doesn't degrade from crawler traffic. Crawl demand reflects how much search engines want to crawl based on quality signals, update frequency, backlink acquisition, and authority. High authority sites with frequent updates receive higher crawl demand allowing more requests. Low quality sites with infrequent updates receive lower crawl demand regardless of server capacity to handle more. Optimisation addresses both dimensions by improving server performance supporting higher crawl rates whilst building authority and demonstrating content freshness increasing crawl demand beyond what rate limits alone would provide.

Search engine crawler differences require understanding that Google, Bing, and other search engines allocate crawl budget independently with different priorities and capacities. Googlebot typically allocates substantially more crawl budget than Bing or other engines reflecting Google's larger infrastructure and Australian market dominance. However, optimisations improving crawl efficiency for one engine generally benefit all engines because technical improvements including faster server response, reduced duplicate content, and cleaner URL structures universally enable more efficient crawling regardless of specific engine. Enterprise websites should prioritise Google crawl budget given its market share whilst recognising that optimisation delivers secondary benefits for other engines.

Crawl budget versus ranking clarifies that crawl budget affects indexing not ranking directly. Pages that aren't crawled cannot be indexed and therefore cannot rank regardless of optimisation quality. However, being crawled doesn't guarantee ranking because thousands of ranking factors beyond crawling determine search result positions. Crawl budget optimisation ensures pages have the opportunity to rank by guaranteeing indexing whilst separate ranking optimisations determine actual positions achieved. Enterprise websites should not expect crawl budget optimisation alone to improve rankings for already-indexed pages whilst recognising that previously unindexed pages gaining indexing through improved crawling will begin generating traffic they couldn't produce when invisible in search indexes.

Google's official guidance on crawl budget emphasises that most websites don't need to worry about crawl budget whilst acknowledging that large sites, sites with rapidly changing content, and sites generating many URLs through parameters do face genuine constraints. Google's documentation specifically recommends focusing on site speed, using robots.txt strategically, avoiding duplicate content, and maintaining clean URL structures as primary crawl budget optimisation strategies applicable to enterprise implementations.

Identifying Crawl Budget Waste Patterns

Systematic identification of crawl waste reveals specific problems consuming budget without providing indexing value enabling targeted optimisation addressing highest-impact issues first.

Server log file analysis methodology provides the definitive data source revealing actual crawling behaviour versus assumptions about how search engines interact with websites. Log analysis requires accessing raw server logs covering at least 30 days capturing complete crawling patterns, filtering specifically for search engine crawler requests separating Googlebot from user traffic, categorising crawled URLs by type including products, categories, filters, pagination, parameters, and other classifications, calculating crawl percentage allocated to each URL category, and comparing crawl distribution against business value priority revealing misalignment between crawler attention and strategic importance. Enterprise websites should establish regular log analysis cadences reviewing crawling patterns monthly or quarterly identifying emerging waste patterns requiring intervention before they consume substantial resources.

Faceted navigation crawl waste represents the most common crawl budget problem for e-commerce enterprises where filter combinations create millions of possible URLs from thousands of actual products. Faceted navigation analysis includes identifying filter parameter patterns in server logs, calculating total filter combination crawl requests, determining what percentage of crawl budget filters consume, analysing whether filtered pages are being indexed despite canonical directives, and quantifying the opportunity cost where filter crawling prevents product page discovery. Faceted navigation optimisation through robots.txt blocking, canonical consolidation, and URL parameter handling typically represents the highest-impact crawl budget improvement for e-commerce platforms because dramatic waste reduction occurs through relatively simple technical implementations.

Pagination crawl waste emerges when paginated sequences extend far beyond meaningful content depth creating crawl sinks where search engines follow pagination links through hundreds of pages. Pagination analysis includes identifying paginated URL patterns, determining maximum page depth that crawlers reach, calculating crawl requests consumed by deep pagination, and assessing whether pagination depth is justified by content volume or represents infinite sequences. Pagination extending beyond page 20 or 30 rarely serves legitimate user needs whilst consuming crawl budget that product and category pages require. Pagination optimisation through view-all alternatives, strategic rel=next/prev implementation, or maximum pagination limits prevents crawl waste whilst maintaining necessary pagination for genuine large result sets.

URL parameter proliferation creates infinite crawl spaces when tracking parameters, session IDs, or sorting options append to URLs creating duplicate content with distinct URLs that search engines attempt to crawl exhaustively. Parameter analysis includes extracting all parameters appearing in server logs, identifying which parameters change content versus only affect presentation or tracking, calculating crawl volume consumed by parameter variations, and determining whether parameter handling is configured in Search Console instructing Google how to treat each parameter type. Parameter optimisation through canonical tags, URL parameter configuration, and strategic robots.txt blocking prevents wasteful crawling of tracking and sorting variations that create duplicates without unique content value.

Redirect chain inefficiency wastes crawl budget when redirects require multiple hops from source URLs to final destinations forcing crawlers to consume multiple requests reaching content that direct links would access with single requests. Redirect analysis includes identifying redirect chains in server logs where requests result in 301 or 302 redirects to intermediate URLs, calculating average redirect chain length, quantifying total crawl requests wasted on redirects versus final content, and mapping internal links that point to redirecting URLs rather than final destinations. Redirect optimisation through flattening chains connecting source directly to destination, updating internal links pointing to current URLs, and removing unnecessary intermediate redirects improves crawl efficiency enabling more product and category page crawling within the same total request budget.

Orphan page crawling indicates pages receiving crawl attention despite lacking internal links suggesting crawlers find them through external links, sitemaps, or historical knowledge whilst strategic pages lack adequate crawling due to poor internal linking. Orphan analysis includes identifying pages crawled despite lacking internal links, determining crawl frequency for orphaned versus well-linked pages, analysing whether orphaned pages deserve crawling or represent low-value legacy content, and comparing crawl distribution against actual internal linking architecture. Orphan patterns often reveal that old blog posts, discontinued products, or legacy content receives disproportionate crawling due to historical external links whilst current strategic pages receive inadequate attention due to deep internal linking architecture preventing discovery.

Strategic Crawl Budget Allocation

Optimising crawl budget requires not only eliminating waste but actively directing search engine attention toward highest-value pages through strategic technical signals.

XML sitemap prioritisation guides search engines toward most important URLs through strategic sitemap structure, update frequency signals, and priority values that collectively influence crawl allocation decisions. Sitemap optimisation includes segmenting sitemaps by content type creating separate files for products, categories, blog content, and other types enabling independent update frequency signalling, excluding low-value pages from sitemaps entirely preventing their discovery through this channel, setting appropriate lastmod dates reflecting actual content updates rather than template changes that don't warrant recrawling, using priority values to distinguish truly important pages though Google states they largely ignore priority in favour of other signals, and updating sitemaps immediately when high-value pages change or publish rather than on fixed schedules. According to Google's sitemap guidelines, sitemaps help search engines find URLs but don't guarantee crawling or indexing, requiring combination with other optimisation strategies for maximum effectiveness.

Internal linking architecture provides the most powerful signal about page importance because search engines interpret link structure as editorial indication of which pages matter most. Internal linking optimisation includes ensuring all important pages reach within three clicks from homepage reducing crawl depth requirements, building strategic internal links from high-authority pages to important deep catalogue pages accelerating their discovery, implementing breadcrumb navigation providing clear hierarchical context, creating hub pages linking to important product or content collections, avoiding excessive internal links that dilute individual link value, and using descriptive anchor text providing context about linked page content. Internal linking improvements simultaneously benefit crawl budget allocation and ranking potential making it dual-purpose optimisation delivering multiple SEO advantages.

Content freshness signals increase crawl demand by demonstrating that pages update frequently warranting more frequent recrawling. Freshness optimisation includes updating high-value pages regularly with genuinely new content rather than cosmetic changes that don't provide value, implementing proper lastmod dates in sitemaps and HTTP headers reflecting actual updates, publishing new content consistently establishing patterns that search engines anticipate, refreshing old content that still ranks adding updated information extending its relevance, and avoiding fake freshness signals through date manipulation that doesn't reflect actual content changes potentially triggering algorithmic penalties. Genuine content freshness increases crawl demand whilst fake freshness attempts risk reducing crawl budget allocation when search engines detect manipulation.

Server performance optimisation enables higher crawl rates by reducing response times allowing search engines to crawl more URLs within the same timeframe without exceeding rate limits. Performance optimisation includes implementing robust server-side caching reducing dynamic page generation overhead, upgrading hosting resources when server CPU or memory constraints slow responses, optimising database queries that delay page generation, implementing CDN delivery for static assets, and monitoring server response times specifically during crawler visits identifying performance bottlenecks affecting crawl efficiency. Faster server response times directly increase effective crawl budget because search engines can retrieve more pages per second without violating rate limits that protect server performance.

Strategic robots.txt usage prevents wasteful crawling whilst avoiding overly aggressive blocking that accidentally prevents important page discovery. Robots.txt optimisation includes blocking known low-value URL patterns including filter parameters and session IDs, preventing crawling of admin areas and development directories, avoiding blocking of resources that pages require for rendering including CSS and JavaScript that Googlebot needs for proper content interpretation, maintaining robots.txt simplicity preventing complex patterns that introduce errors, and testing robots.txt changes thoroughly before deployment preventing accidental blocking of important content. Robots.txt provides powerful crawl budget protection when used strategically whilst creating catastrophic indexing problems when misconfigured blocking important pages or required resources.

Crawl delay configuration in robots.txt enables manual crawl rate control though Google largely ignores crawl-delay directives preferring to manage rates dynamically through Search Console settings. Search Console crawl rate control allows requesting Google to crawl slower during peak traffic periods preventing crawler activity from affecting user experience whilst other search engines including Bing respect robots.txt crawl-delay directives enabling platform-specific rate management. Enterprise websites should use Search Console for Google-specific rate management whilst implementing robots.txt crawl-delay for other engines, being cautious about overly aggressive rate limiting that reduces total crawl volume and delays indexing of new content.

Technical Infrastructure for Crawl Efficiency

Server and hosting infrastructure directly affects crawl budget efficiency through response times, reliability, and resource handling that collectively determine how efficiently search engines can crawl allocated budgets.

Time to First Byte optimisation reduces the initial server response delay that affects crawl efficiency because every millisecond saved in TTFB enables proportionally more crawling within allocated timeframes. TTFB optimisation includes implementing robust server-side caching serving frequently requested content from cache, using reverse proxy caching like Varnish for dynamic content, optimising application code reducing computation required for page generation, implementing database query optimisation eliminating slow queries, and upgrading server resources when current capacity creates bottlenecks. TTFB under 200 milliseconds enables efficient crawling whilst TTFB exceeding 500 milliseconds significantly reduces crawl capacity within the same time allocation as search engines wait longer for each response.

HTTP/2 and HTTP/3 implementation improves crawl efficiency through multiplexing enabling multiple resource requests over single connections reducing connection overhead that HTTP/1.1 requires for parallel requests. Modern protocol implementation includes configuring servers to support HTTP/2 or HTTP/3, ensuring SSL/TLS certificates are properly installed because HTTP/2 requires HTTPS, testing protocol support through browser developer tools, and monitoring whether search engine crawlers actually use modern protocols versus falling back to HTTP/1.1 when encountering implementation problems. HTTP/2 primarily benefits crawling of sites with many resources per page whilst providing modest efficiency improvements for simple pages.

CDN implementation reduces geographic latency enabling faster crawling regardless of crawler location whilst distributing load across edge servers preventing origin server overload during peak crawling periods. CDN optimisation includes implementing CDN for static assets at minimum whilst considering full-page caching for appropriate content types, configuring proper cache headers enabling effective CDN utilisation, ensuring CDN doesn't interfere with crawler access through overly aggressive rate limiting or challenge pages, and monitoring CDN performance specifically for crawler traffic identifying any issues affecting search engine access. CDN provides dual benefits of improved crawl efficiency through faster responses whilst protecting origin servers from crawl-induced load spikes.

Server capacity planning ensures adequate resources support crawler traffic without degrading user experience through resource exhaustion. Capacity planning includes monitoring server CPU, memory, and disk I/O during peak crawl periods, calculating resource consumption per request enabling capacity forecasting as catalogues grow, implementing autoscaling when using cloud infrastructure automatically adding resources during demand spikes, establishing separate crawler handling when resource constraints require prioritising user traffic over crawlers, and planning capacity headroom supporting anticipated growth rather than running at capacity limits creating performance degradation risks. Inadequate server capacity creates the worst possible scenario where crawl budget exists but server performance limits prevent utilising it effectively.

Error rate minimisation prevents crawl budget waste on broken URLs whilst maintaining search engine confidence in site quality that high error rates undermine. Error reduction includes fixing 404 errors for URLs that should exist, implementing proper 410 Gone status for intentionally removed content, addressing 500 server errors indicating technical problems, resolving timeout errors caused by slow page generation, and monitoring error rates in Search Console identifying problem patterns. High error rates signal quality problems potentially reducing crawl demand whilst fixed errors return pages to indexing consideration and restore crawler confidence encouraging continued crawling.

Log file storage and analysis infrastructure enables the ongoing monitoring that crawl budget optimisation requires providing visibility into actual crawling behaviour guiding optimisation decisions. Log infrastructure includes implementing adequate log retention storing 60 to 90 days enabling trend analysis, compressing archived logs reducing storage costs, establishing automated log processing pipelines enabling regular analysis without manual effort, integrating log analysis with alerting systems notifying teams of anomalous crawling patterns, and maintaining historical data enabling year-over-year comparison revealing long-term trends. Log analysis infrastructure investment typically costs less than the indexing problems it prevents through early detection of crawl budget issues before they affect substantial page counts.

Monitoring and Maintaining Crawl Budget Efficiency

Crawl budget optimisation requires ongoing monitoring rather than one-time fixes because website changes, catalogue growth, and search engine algorithm updates continuously affect crawling patterns.

Google Search Console crawl stats analysis provides Google's perspective on crawling activity supplementing server log analysis with search engine interpretation of observed patterns. Search Console monitoring includes reviewing weekly crawl statistics tracking request volume trends, analysing crawl requests by response type identifying error rate changes, monitoring time spent downloading pages revealing performance degradation, tracking bytes downloaded per day indicating content volume changes, and comparing current patterns against historical baselines identifying significant shifts. Search Console provides delayed reporting requiring combination with real-time log analysis for immediate problem detection whilst offering Google-specific insights that generic server logs cannot provide.

Indexed page coverage tracking reveals whether crawl budget optimisation translates to improved indexing coverage validating that crawling improvements produce intended business outcomes. Coverage monitoring includes tracking total indexed page counts through Search Console or site: queries, monitoring index coverage reports identifying pages excluded for technical reasons, analysing newly indexed pages confirming crawler discovery of previously neglected content, identifying pages dropping from index requiring investigation, and comparing indexed percentage against total sitemap submissions revealing coverage gaps. Improved crawl efficiency should manifest as increased indexed page counts within 4 to 8 weeks as search engines discover and index previously neglected pages that inefficient crawling had prevented reaching.

Crawl pattern segmentation analyses whether search engines allocate crawl budget proportional to business value or waste resources on low-priority content. Segmentation monitoring includes calculating crawl percentage by page type comparing products, categories, filters, and other segments, tracking crawl frequency for high-value versus low-value pages, identifying pages receiving excessive recrawling despite infrequent updates, discovering important pages receiving inadequate crawling, and establishing target crawl distribution aligning with revenue and traffic priorities. Crawl pattern segmentation enables quantitative assessment of whether optimisations successfully redirected crawler attention toward strategic pages versus only reducing total wasteful crawling without improving high-value page attention.

Competitive crawl benchmarking provides context about whether your crawl budget allocation is appropriate relative to similar enterprises. Competitive monitoring includes estimating competitor crawl budgets through public crawl delay announcements or industry discussions, comparing indexed page percentages against competitors with similar catalogue sizes, analysing whether competitors achieve better crawl efficiency through superior technical implementation, and identifying industry best practices that inform optimisation priorities. While direct competitor crawl data is not typically available, periodic benchmarking against industry standards and similar enterprise websites provides valuable context about relative crawl efficiency performance.

Alert configuration enables proactive problem detection rather than discovering crawl budget degradation weeks later through periodic reviews. Alert implementation includes configuring Search Console to email weekly crawl statistics and coverage reports, establishing automated log analysis scripts detecting unusual crawl pattern changes, creating alerts for server error rate increases affecting crawler access, monitoring indexed page count decreases indicating emerging indexing problems, and defining escalation procedures ensuring appropriate teams respond to alerts rather than being ignored in overwhelming notification volumes. Automated alerting enables rapid response to crawl budget problems whilst manual-only monitoring creates delays during which problems compound affecting increasing page counts.

Quarterly comprehensive reviews supplement ongoing monitoring with deep analysis identifying optimisation opportunities that daily or weekly monitoring doesn't reveal. Quarterly reviews include complete server log analysis validating crawl efficiency metrics, competitive benchmarking comparing performance against previous quarters and competitors, technical implementation audits identifying new waste patterns from recent website changes, capacity planning assessment ensuring infrastructure supports anticipated growth, and strategic priority alignment confirming crawler attention matches evolving business objectives. Quarterly cadence balances thoroughness against frequency enabling actionable insights without creating analysis overhead that prevents actual optimisation implementation.

Frequently Asked Questions

How can enterprise websites determine whether they actually have crawl budget problems requiring optimisation versus paranoia about theoretical issues?

Several clear indicators reveal genuine crawl budget constraints requiring optimisation rather than theoretical concerns about possible future problems. Calculate the ratio between total URLs in your sitemap versus indexed pages reported in Google Search Console. If indexed percentage is below 80% with substantial URL counts in the thousands or tens of thousands, crawl budget likely constrains indexing. Analyse server logs calculating what percentage of crawl requests target indexable high-value pages versus low-value duplicates, filters, or parameter variations. If less than 60% of crawls target strategic pages, crawl waste exists regardless of total crawl volume. Monitor index coverage in Search Console for "Crawled - currently not indexed" status affecting meaningful page counts suggesting Google crawls but chooses not to index due to quality signals or crawl budget exhaustion. Compare your indexed page growth rate against catalogue expansion. If catalogue grows substantially faster than indexed pages, crawl budget likely prevents keeping pace. Finally, examine whether new pages appear in search results within reasonable timeframes. If high-quality new products or content take weeks or months to appear despite being technically accessible, crawl discovery delays indicate budget constraints. Enterprise websites should evaluate all indicators rather than relying on single metrics because crawl budget problems manifest across multiple observable patterns.

What's the difference between crawl budget optimisation and simply increasing server capacity to handle more crawler traffic?

Server capacity affects crawl rate limits determining how many requests per second your infrastructure can handle, whilst crawl budget optimisation addresses crawl demand and efficiency determining whether search engines want to crawl more and whether crawling targets valuable pages. Increasing server capacity enables search engines to crawl faster without hitting rate limits but doesn't increase total crawl volume if demand limits rather than rate limits constrain crawling. Conversely, optimising crawl efficiency by eliminating waste redirects existing crawl budget toward valuable pages without requiring infrastructure investment. Optimal strategy combines adequate server performance supporting reasonable crawl rates with crawl efficiency optimisation ensuring allocated budget targets strategic pages. Many enterprise websites with crawl budget problems have adequate server capacity but waste available crawl budget on duplicates and low-value pages making efficiency optimisation higher priority than capacity expansion. Assess whether Search Console shows crawl rate limiting due to server response times suggesting capacity problems, or whether crawls target wasteful URLs suggesting efficiency problems. If server TTFB exceeds 500 milliseconds or error rates are high, address capacity and performance first. If server performs well but crawls target filters and duplicates, prioritise efficiency optimisation over capacity expansion that won't solve fundamental allocation problems.

Should enterprise websites use rel=canonical or noindex for preventing duplicate content from consuming crawl budget?

Canonical tags and noindex serve different purposes requiring selection based on specific duplicate content scenarios. Use canonical tags when duplicate variations should consolidate ranking signals to preferred versions whilst allowing search engines to crawl alternatives for verification and link discovery. Canonical is ideal for faceted navigation where filtered pages contain useful internal links to products, pagination where sequences provide crawl paths, and parameter variations where duplicate URLs exist but provide access to legitimate content. Use noindex when duplicate pages provide no value even for verification and waste crawl budget without benefit. Noindex suits infinite crawl spaces where parameter combinations create unlimited duplicates, extremely deep pagination beyond useful content, and low-quality auto-generated variations. Critically, understand that noindex prevents indexing but not crawling consuming crawl budget whilst blocking discovery and indexing. Canonical allows crawling whilst consolidating signals. Robots.txt blocking prevents crawling entirely saving maximum budget but also prevents link discovery through blocked pages. For pure crawl budget optimisation, robots.txt blocking is most effective. For balancing crawl budget with link equity flow and verification, canonical is superior. For preventing indexing whilst allowing verification crawling, noindex is appropriate. Most enterprise websites should primarily use canonical for duplicates with strategic robots.txt blocking for clearly wasteful patterns like filter parameters and selective noindex for specific problem areas rather than relying exclusively on any single approach.

How long does crawl budget optimisation take to show results, and what metrics indicate whether optimisation is working?

Initial crawl pattern improvements appear within 1-2 weeks as search engines encounter robots.txt blocks, canonical tags, and parameter handling instructions during regular crawling, but complete indexing improvements typically require 4-8 weeks as search engines gradually discover and index previously neglected pages. Monitor several leading and lagging indicators tracking optimisation progress. Leading indicators appearing within days include crawl volume reduction in server logs as blocked patterns stop consuming budget, crawl distribution shift in logs showing increased percentage targeting products and categories, Search Console showing reduced crawled-not-indexed pages as duplicates stop being discovered, and fewer URLs being added to Google's known URL count as parameter discovery stops. Lagging indicators appearing within weeks include increased indexed page counts in Search Console coverage reports, more pages appearing in site: searches, new previously unindexed URLs appearing in Search Console performance reports generating impressions and clicks, and ultimately organic traffic increases as newly indexed pages begin ranking and generating visibility. Enterprise websites should expect crawl distribution improvements within two weeks validating optimisation effectiveness whilst full indexing and traffic benefits may take two to three months materialising. Patience is essential because indexing is iterative process where improvements compound over successive crawl cycles rather than occurring instantaneously after optimisation deployment.

Can enterprise websites request Google to crawl their sites faster or allocate more crawl budget?

Google provides limited manual control over crawl budget through Search Console settings but generally manages crawl rates algorithmically based on site performance and perceived value rather than honouring direct budget increase requests. Search Console allows requesting crawl rate decreases during server maintenance or migration periods to prevent crawl activity from affecting operations. However, requesting crawl rate increases typically has no effect because Google determines appropriate rates based on server performance, site quality, update frequency, and crawl demand rather than site owner preferences. The most effective approach to increasing crawl budget is improving factors that Google's algorithms consider including site speed reducing response times, content freshness through regular meaningful updates, crawl efficiency eliminating waste enabling more valuable page discovery, site authority through quality backlink acquisition, and technical quality maintaining low error rates. These signals organically increase crawl demand resulting in more allocated crawling without requiring manual requests. Additionally, submitting updated sitemaps immediately when important content changes signals Google that new content warrants crawling. Enterprise websites frustrated by insufficient crawl budget should focus optimisation effort on improving algorithmic signals rather than expecting manual intervention to meaningfully increase allocation because Google's automated systems determine virtually all crawl budget allocation regardless of manual requests.

What should enterprise websites do if crawl budget optimisation reveals that catalogues are too large to index completely regardless of optimisation quality?

Some enterprise websites legitimately have catalogue sizes exceeding what reasonable crawl budget allocation can fully index requiring strategic decisions about which pages deserve indexing priority versus acceptance that some pages may never index. When complete indexing is impractical despite optimisation, implement prioritisation strategies including ruthlessly blocking low-value URL patterns from discovery entirely, focusing sitemap inclusion only on pages with revenue or traffic potential, implementing strategic internal linking concentrating link equity on highest-value pages, potentially noindexing lowest-performing long-tail products or old content that provides minimal value, and considering whether catalogue size is artificially inflated through technical problems creating unnecessary URLs. Additionally, evaluate whether content consolidation could reduce total page count whilst maintaining coverage through combining thin pages into comprehensive resources that search engines prioritise. Some enterprises discover that 20% of their catalogue generates 80% of revenue suggesting focused indexing of that 20% provides majority of organic value whilst the remaining 80% might accept lower indexing priority. Calculate revenue per indexed page identifying which segments provide best return on indexing investment focusing crawl budget accordingly. Finally, consider that complete indexing may simply not be critical business objective if the highest-value pages are reliably indexed whilst long-tail low-traffic pages remain unindexed without material revenue impact. Focus optimisation effort where indexing delivers measurable business value rather than pursuing theoretical complete indexing that may not justify the substantial technical investment required.

How do enterprise websites balance crawl budget optimisation against providing comprehensive internal linking that users need for navigation?

Crawl budget optimisation and user experience occasionally conflict when comprehensive filtering and navigation that users value creates crawl waste requiring careful balancing rather than eliminating user features for SEO benefit. Implement user-friendly navigation whilst protecting crawl budget through technical barriers invisible to users including JavaScript-only filter rendering where filters function for users but don't create crawlable URL variations, form-based filtering using POST requests rather than GET parameters avoiding discoverable filter URLs, AJAX implementation updating content without changing URLs, canonical tags on filtered pages maintaining user access whilst consolidating crawl signals, and strategic robots.txt blocking preventing crawler discovery of filter patterns whilst maintaining user accessibility. Modern approaches enable full-featured filtering and navigation for users whilst presenting cleaner, more focused crawl interfaces to search engines. The key is recognising that optimal user experience and optimal crawl efficiency sometimes require different implementations using progressive enhancement or graceful degradation serving appropriate versions to different audiences. Enterprise websites should never sacrifice user experience for SEO but should invest in technical implementations that satisfy both requirements rather than accepting that one must compromise the other. Consult with Maven Marketing Co. for implementations balancing user functionality with crawl efficiency through modern technical approaches that previous-generation implementations couldn't achieve.

Enterprise Crawl Budget Optimisation Drives Complete Indexing

Crawl budget optimisation transforms enterprise SEO from accepting partial indexing coverage to systematically ensuring search engines discover and maintain complete catalogue indexes through strategic waste elimination, prioritised URL allocation, and technical efficiency improvements that maximise indexing within practical crawl constraints.

The optimisation frameworks outlined in this guide including server log analysis methodologies, systematic waste pattern identification, strategic URL prioritisation, technical infrastructure improvements, and ongoing monitoring provide comprehensive foundation for enterprise websites to achieve the complete indexing coverage that crawl budget constraints would otherwise prevent regardless of content quality and optimisation sophistication.

Enterprise websites working with Maven Marketing Co. benefit from professional crawl budget audits revealing precise waste patterns and optimisation opportunities, technical implementation expertise eliminating crawl inefficiencies, and ongoing monitoring maintaining optimised crawl distribution as catalogues evolve ensuring sustained complete indexing that drives organic traffic and revenue growth.

Ready to optimise crawl budget allocation ensuring search engines efficiently discover and index your complete enterprise catalogue? Maven Marketing Co. provides comprehensive crawl budget optimisation services including server log analysis, technical implementation, ongoing monitoring, and strategic URL prioritisation ensuring your large website achieves the complete indexing coverage that enterprise SEO success requires.